Why AI Websites Fail to Get Traffic or Sales

Quick answer

AI-built websites often fail after launch not because the content is bad, but because the system behind it cannot evaluate, reinforce, or correct itself. Pages may index and briefly rank, but without intent control, feedback signals, and distribution logic, performance quietly stalls. Automation increases output, not outcomes.

The real reason

AI website builders promise speed, simplicity, and fast results. You enter a keyword, answer a few prompts, and within minutes you have a site that looks professional, loads quickly, and contains dozens of pages.

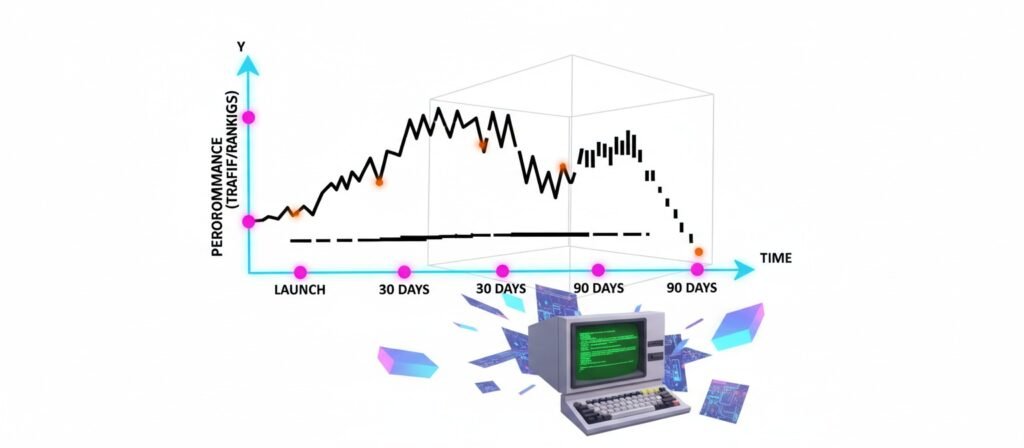

At first glance, nothing appears broken. Many of these sites are indexed. Some receive early impressions. A few even rank briefly for low-competition terms. Then progress stops. Traffic stays flat. Engagement remains weak. Sales or leads never materialize.

This failure is often blamed on vague explanations like “AI content is generic” or “AI lacks a human touch.” While those ideas sound reasonable, they do not explain what actually goes wrong in real systems.

Most AI websites fail for a simpler reason.

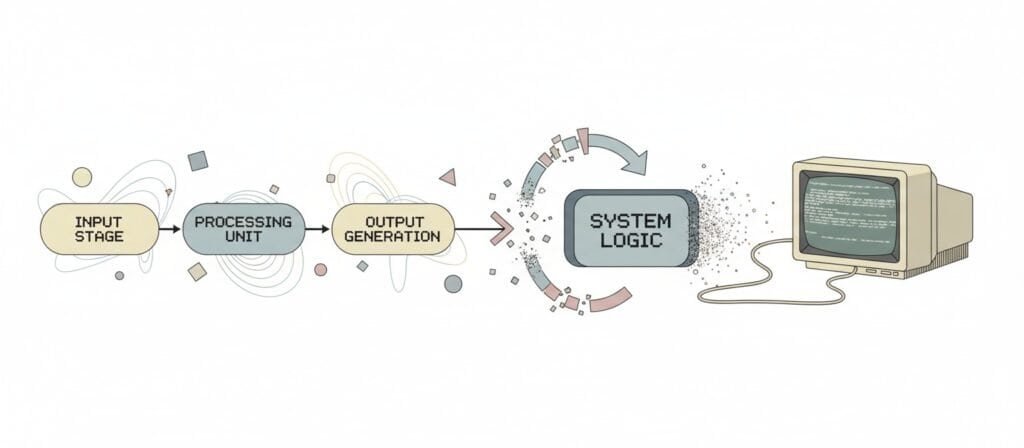

They are launched without a system that can observe performance, learn from it, and adjust decisions over time.

For a deeper breakdown of signal stagnation, see Why AI Blogs Get Stuck at Zero Impressions.

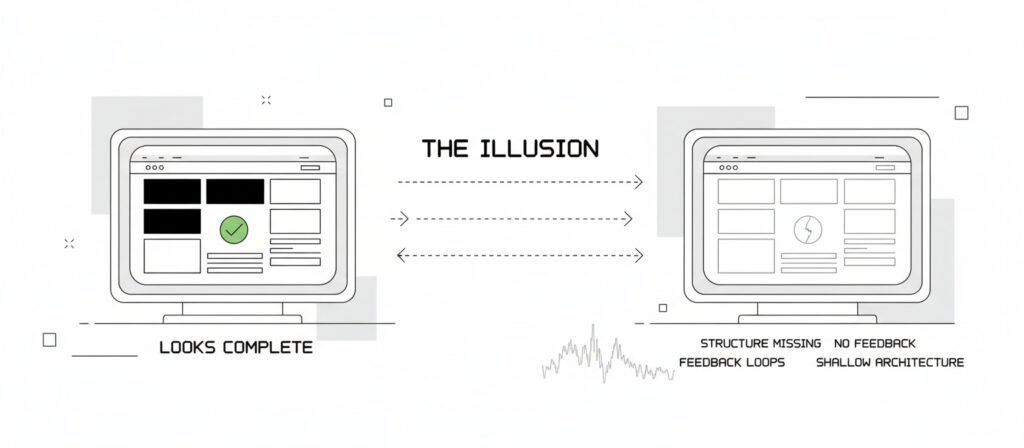

The false expectation that kills most AI websites

Most AI websites are built around a silent assumption:

If a site looks complete and content is published, traffic and results will follow.

That assumption comes from an older version of the web, where publishing alone could create visibility. That model no longer exists. Search engines do not reward presence. They reward signals.

Those signals are created through prioritization, reinforcement, feedback, and correction over time. AI builders accelerate creation, but they do not automatically create those mechanisms.

As a result, many AI websites launch “finished” but remain functionally inert.

Why content that looks fine still doesn’t perform

When an AI website fails, confusion usually follows. The content reads well. The grammar is clean. The pages are structured logically.

From a human readability perspective, nothing seems wrong. From a performance perspective, everything is.

Readable content is not the same as relevant content.

Relevance depends on how precisely a page matches user intent, how clearly it signals topical focus, and how well it differentiates itself from competing pages.

AI-generated content often averages across patterns. It produces text that sounds acceptable to many audiences but feels deeply relevant to none.

In practice, this shows up as:

- Pages that rank briefly and then disappear

- Impressions without clicks

- Rankings for loosely related queries

- Multiple pages competing with each other for the same intent

Search engines test the content, detect weak engagement and internal overlap, and then quietly deprioritize it.

This mismatch between readability and visibility is explored further in How AI Content Automation Actually Works.
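Two of the symptoms listed above, impressions without clicks and several pages competing for the same intent, can be checked mechanically. The following is a minimal sketch, assuming you can export one row per page and query from a search performance report. The field names, the helper functions, the thresholds, and the sample rows are all hypothetical, and treating a shared query as a proxy for shared intent is a simplification.

```python
from collections import defaultdict

# Hypothetical export: one row per (page, query) pair.
rows = [
    {"page": "/ai-site-traffic", "query": "ai website no traffic", "impressions": 120, "clicks": 2},
    {"page": "/why-ai-sites-fail", "query": "ai website no traffic", "impressions": 95, "clicks": 0},
    {"page": "/ai-builder-review", "query": "best ai site builder", "impressions": 300, "clicks": 0},
]


def find_overlaps(rows, min_impressions=50):
    """Return queries for which more than one page gets meaningful impressions."""
    pages_by_query = defaultdict(set)
    for row in rows:
        if row["impressions"] >= min_impressions:
            pages_by_query[row["query"]].add(row["page"])
    # Keep only queries served by two or more pages.
    return {q: sorted(pages) for q, pages in pages_by_query.items() if len(pages) > 1}


def impressions_without_clicks(rows, min_impressions=100):
    """Return pages that are shown often but never clicked."""
    return sorted(
        {r["page"] for r in rows if r["impressions"] >= min_impressions and r["clicks"] == 0}
    )


overlaps = find_overlaps(rows)
dead_pages = impressions_without_clicks(rows)
print(overlaps)
print(dead_pages)
```

The thresholds are arbitrary starting points. The value of a check like this is that it runs on every export, so overlap is caught while it is still cheap to fix.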

Automation without distribution is just publishing faster

Many AI website failures are framed as SEO problems, but SEO is rarely the first point of failure.

The earlier failure is distribution.

Publishing content does not guarantee discovery. New pages are treated as unproven and must earn reinforcement signals before their visibility expands.

Most AI systems stop at publication. They generate pages, but they do not manage:

- How content earns its first visibility signals

- How those signals are reinforced

- How weak pages are identified and corrected

Distribution is not social posting. Distribution is signal reinforcement.

Without reinforcement, content is tested briefly and then deprioritized, no matter how fast it was produced. The system creates volume without momentum.

A related structural failure occurs when indexed pages never receive testing signals. This is discussed in AI Content Sites Getting No Index After Publishing.

Why most AI websites plateau after 20 to 40 pages

A common pattern across AI-generated sites is early activity followed by a flat line. Pages index. A few impressions appear. Growth stalls. This happens because automation creates output, not prioritization.

Most AI systems expand horizontally, covering many topics shallowly instead of expanding vertically around what already shows promise. Once a site reaches a certain size, additional pages dilute topical focus rather than strengthen it.

Without a mechanism to decide what deserves expansion, what should be merged, and what should stop publishing, the site accumulates low-impact pages. More content does not always create more authority. Often, it creates noise.

This pattern aligns with the broader automation decay behavior examined in Why AI Blogs Get Stuck at Zero Impressions.
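The missing mechanism described above, deciding what to expand, what to merge, and what to stop, can be as simple as an explicit rule applied to every page. This is an illustrative sketch only: the `PageStats` fields, the `triage` function, and the threshold values are assumptions made for the example, not a recommended standard.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PageStats:
    url: str
    impressions: int
    clicks: int
    # URL of another page targeting the same intent, if one was found (hypothetical field).
    overlaps_with: Optional[str] = None


def triage(page, min_impressions=100, min_ctr=0.01):
    """Classify a page as 'merge', 'wait', 'expand', or 'stop'."""
    if page.overlaps_with is not None:
        return "merge"   # competing pages dilute focus, so consolidate first
    if page.impressions < min_impressions:
        return "wait"    # not enough data to judge yet
    ctr = page.clicks / page.impressions
    return "expand" if ctr >= min_ctr else "stop"


pages = [
    PageStats("/a", impressions=500, clicks=20),
    PageStats("/b", impressions=400, clicks=1),
    PageStats("/c", impressions=30, clicks=0),
    PageStats("/d", impressions=200, clicks=3, overlaps_with="/a"),
]
decisions = {p.url: triage(p) for p in pages}
print(decisions)
```

The exact thresholds matter less than having a rule at all. Once each page carries an explicit decision, new publishing can be directed toward the "expand" pages instead of spreading horizontally.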

The black-box problem when failure can’t be diagnosed

Another overlooked issue with AI-built websites is loss of visibility into cause and effect.

When a manually built site underperforms, problems can usually be isolated. Intent mismatch, internal structure, or technical signals can be examined and corrected.

With heavily automated systems, decisions are often opaque. Content choices are made by the system, not the operator.

When performance drops, it becomes unclear whether the issue is:

- Intent mismatch

- Content overlap

- Structural signaling

- Bias in automated generation

Problems are noticed late. Corrections are slow or impossible. Publishing continues in the hope that volume will compensate. Instead, the system compounds its own blind spots.
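One way to reduce this opacity is to record every content decision at the moment it is made, so that a later drop can be traced back to a cause. The sketch below assumes a simple in-memory log. The `ContentDecision` fields, the helper names, and the sample entries are hypothetical, and a real system would persist the log rather than hold it in a list.

```python
import json
from dataclasses import dataclass, asdict


@dataclass
class ContentDecision:
    url: str
    decided_on: str      # ISO date of the change
    target_intent: str   # the intent the page is meant to serve
    reason: str          # why the page was created or changed
    source: str          # "automated" or "operator"


log = []


def record(decision):
    """Append a decision to the log as a plain dict."""
    log.append(asdict(decision))


def history_for(url):
    """Return every recorded decision for a URL, in the order it was made."""
    return [entry for entry in log if entry["url"] == url]


record(ContentDecision("/why-ai-sites-fail", "2025-01-10",
                       "diagnose ai site with no traffic",
                       "keyword gap in cluster", "automated"))
record(ContentDecision("/why-ai-sites-fail", "2025-03-02",
                       "diagnose ai site with no traffic",
                       "merged overlapping page", "operator"))

print(json.dumps(history_for("/why-ai-sites-fail"), indent=2))
```

With a log like this, a performance change on a page can be compared against the dates and reasons of the decisions that touched it, which turns "something went wrong" into a short list of candidates.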

Why this gets worse over time, not better

A common misconception is that AI websites will eventually “learn” their way into success.

In practice, most failures worsen over time. As automated content accumulates without correction loops, weak signals multiply. The site becomes harder to reposition, not easier.

This is why many AI websites look promising in the first month and nearly invisible by the sixth.

The real problem is not AI; it’s missing system design

AI itself is not the problem.

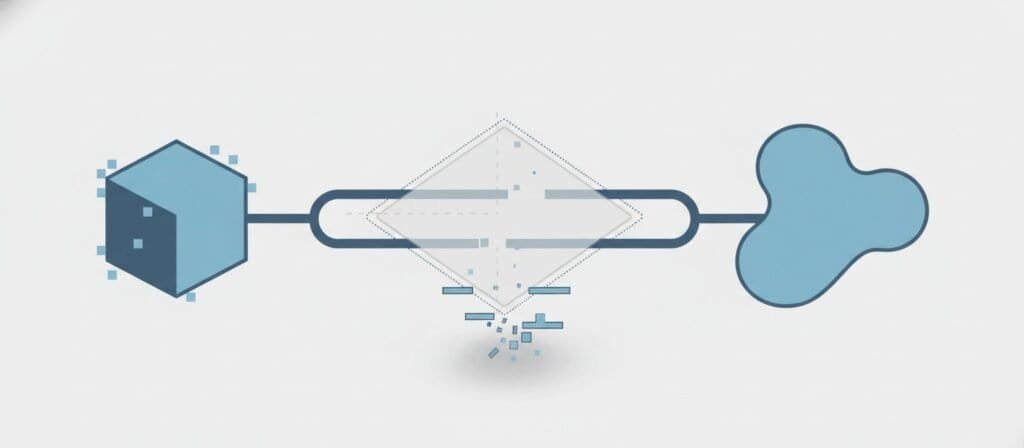

Automation becomes powerful only when it operates inside a system that includes:

- Clear intent targeting

- Performance feedback

- Distribution logic

- Human oversight and correction

Without these components, automation accelerates output but not outcomes.

The difference between AI websites that fail and those that compound is not the tool used. It is whether automation is embedded inside a system that can observe, adapt, and correct itself.

Without that system, speed becomes a liability instead of an advantage.

What this page does not cover

To avoid confusion, this page does not cover:

- specific AI website builders or tools

- platform recommendations or comparisons

- how to rebuild your site step by step

- SEO tactics or growth hacks

Those topics are handled separately, where they belong.

For a deeper look at what happens after initial rankings disappear, when sites that once ranked quietly lose visibility, see our analysis of why AI websites stop ranking.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
