Quick answer

AI content sites often stay unindexed not because Google cannot find them, but because Google does not accept them yet. The pages exist and may even be discovered by crawlers. However, Google's systems do not see enough structure, intent, or trust to make them eligible for search results. This is a waiting state, not a technical error.

What People Expect vs. What Really Happens

Most site owners expect a simple chain of events. They publish content, Google finds it, and then the pages appear in search results. This feels logical because publishing looks like the final step.

In practice, something else happens. The content gets published and Google may discover the URL, but the page does not enter the index right away. Instead, the system waits and evaluates the site as a whole.

So when pages stay unindexed, the site is usually not broken. It is paused in a waiting state where Google is still deciding whether the site is worth adding to the index.

The Indexing State Model

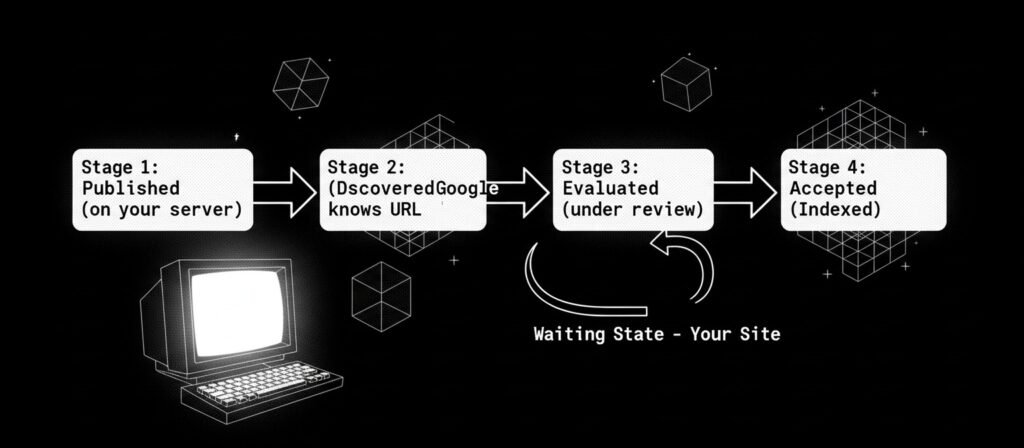

Indexing works more like a process than a switch. A page moves through stages before it becomes eligible to rank.

| Stage | What it means | What the site owner usually thinks |
|---|---|---|
| Published | The page exists on the site | “My content is live” |
| Discovered | Google knows the URL exists | “Google saw my page” |
| Evaluated | Google checks patterns and signals | “Something is wrong” |
| Accepted (Indexed) | The page becomes eligible to rank | “Now I can get traffic” |

Most AI content sites stall in the Discovered and Evaluated stages and never reach Accepted. Google can see the pages, but it does not yet trust the system that produced them. As a result, the pages remain outside the index.

Why AI Sites Enter This State More Often

AI tools remove friction from publishing. Because content becomes easy to produce, many sites grow fast before they show a clear structure or purpose. From a search engine's point of view, this looks risky.

Several patterns appear again and again:

- Everything looks equal: when hundreds of pages appear at once, no page stands out as important.

- Intent is unclear: many pages cover similar topics using similar language, so their roles overlap.

- Volume comes before identity: the site grows before it proves what it is about.

- Discovery happens without endorsement: Google sees the URLs but does not approve them yet.

Automation increases output speed. However, it does not increase credibility at the same pace.

How This Feels To The Site Owner

From the site owner's side, this state feels confusing and unfair. The site looks finished and professional, but nothing shows up in search results.

People usually notice signs like:

- Pages are live but invisible in search

- Search Console reports statuses such as “Discovered – currently not indexed” or “Crawled – currently not indexed”

- No clear technical errors appear

- Time passes with no change

Because there is no clear failure message, the situation feels silent. It appears broken even when the system is only undecided.

What This State Is NOT

This waiting state is usually not caused by:

- bad tools

- blocked crawling

- manual penalties

- broken code

Instead, it usually means one thing: Google has not yet confirmed that the site deserves a stable place in the index. The question is not whether the page exists. The question is whether the site looks intentional and trustworthy as a whole.

So the issue is about eligibility, not existence.

What this page does NOT cover

This page does not explain:

- how to force indexing

- which tools to use

- technical SEO steps

- content optimization methods

Those topics belong on separate, more detailed explanation and decision pages. This page only describes the condition and why it appears.

Key mental shift

A common belief is that publishing automatically leads to indexing. This belief comes from older web habits, where fewer pages competed for attention.

A more accurate belief is this:

Publishing = request

Indexing = acceptance

AI tools help you publish faster. They do not make Google trust the system that produced the content. When an AI content site stays unindexed, the problem is rarely the text alone. It is the way the whole site looks and behaves as a system.

Sites that remain unindexed never reach the testing phase at all, because their pages are never shown in search. Sites that do get indexed and then lose visibility follow a different failure mode entirely: the ranking withdrawal pattern, which we have analyzed separately.

Related failure patterns

This indexing state often appears together with other failures, such as:

- Sites that decay after launch

- Blogs that get zero impressions

- Pages that look complete but produce no results

All of these point to the same sequence: output happened before trust formed.

Next logical step

If you want to understand what Google evaluates during this waiting state, read:

Why AI websites fail after launch

and

Why AI blogs get stuck at zero impressions

These explain what happens after discovery, when automation runs without feedback or correction.

Frequently Asked Questions

Why does AI-generated content not get indexed by Google?

AI-generated content may stay unindexed because Google does not see enough structure or intent to accept it into the index. The issue is usually about trust and eligibility, not about the text itself.

How do I get AI-generated content indexed?

Indexing is not controlled by AI tools. It depends on how search engines evaluate the site as a system. Publishing content alone does not guarantee acceptance into the index.

Why are AI websites not working?

Many AI websites publish large amounts of content before showing a clear purpose or structure. As a result, they remain in a waiting state where search engines delay trust.

Why is my website not getting indexed?

A website may not get indexed when search engines cannot confirm that its pages deserve a stable place in results. This often happens when pages exist but their role is unclear.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

E-E-A-T Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
