AI Content Feedback Loop In SEO

Quick Answer

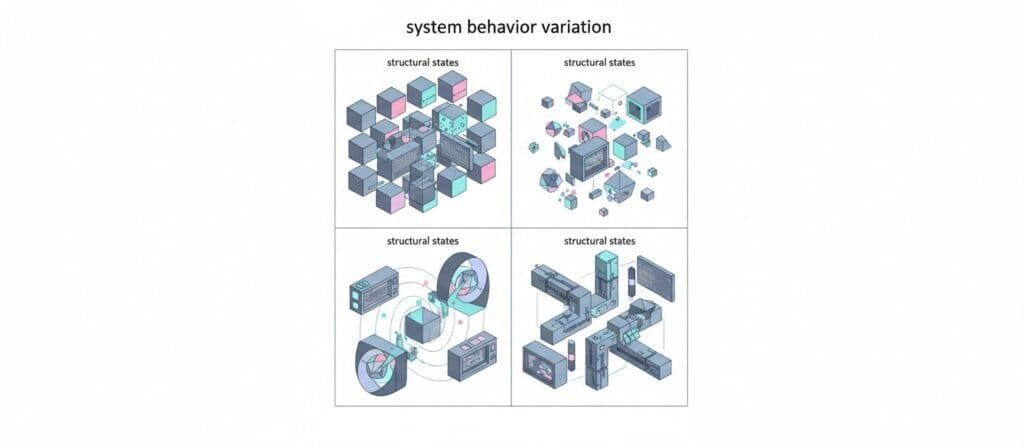

An AI Content Feedback Loop (SEO) describes how content changes over time based on signals coming from user interaction, search visibility behavior, and system interpretation of relevance. In practice, the loop is rarely a clean cycle. Signals are partial, delayed, and sometimes misleading. This means iteration alone does not guarantee improvement. Effective loops depend on signal clarity, structural alignment between pages, and interpretation discipline rather than speed or publishing volume. Understanding how these loops behave helps explain why automated content systems often plateau or fail to gain visibility.

Introduction

Most discussions about search performance treat iteration as progress. Publish content, observe metrics, adjust, repeat. The logic sounds reasonable. But real content ecosystems behave differently.

Search systems do not respond to intent in a linear way. Signals arrive with delay. Interpretation layers add uncertainty. Competing content shifts relevance boundaries. As a result, the AI Content Feedback Loop (SEO) is less predictable than commonly described.

You can see this tension in practical outcomes. Pages appear polished yet receive no exposure. Automation scales output yet visibility stalls. These patterns are explored in related research on Why AI Websites Fail After Launch, which examines system behavior after deployment rather than during production.

This article examines how feedback loops actually function inside content systems. The focus is on mechanisms, constraints, and structural interactions rather than tactical recommendations.

What Constitutes an AI Content Feedback Loop (SEO)

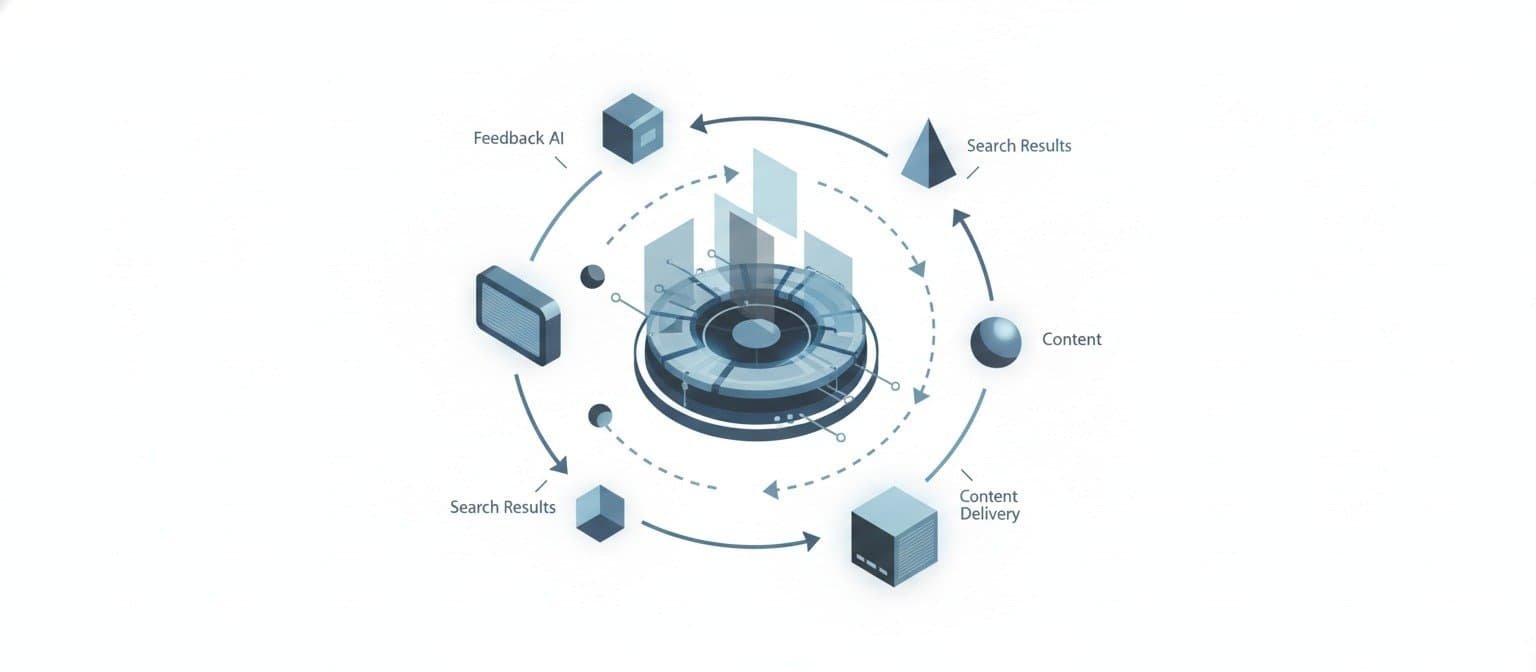

At a structural level, a feedback loop involves four stages:

- Content action occurs

- Signals emerge from user and system interaction

- Signals are interpreted

- Future content changes based on interpretation

Core signal categories

- Behavioral signals
  - engagement patterns
  - navigation flow
  - interaction depth
- Structural signals
  - internal linking relationships
  - topical clustering
  - hierarchy alignment
- System-inferred signals
  - relevance estimation
  - semantic association
  - context matching

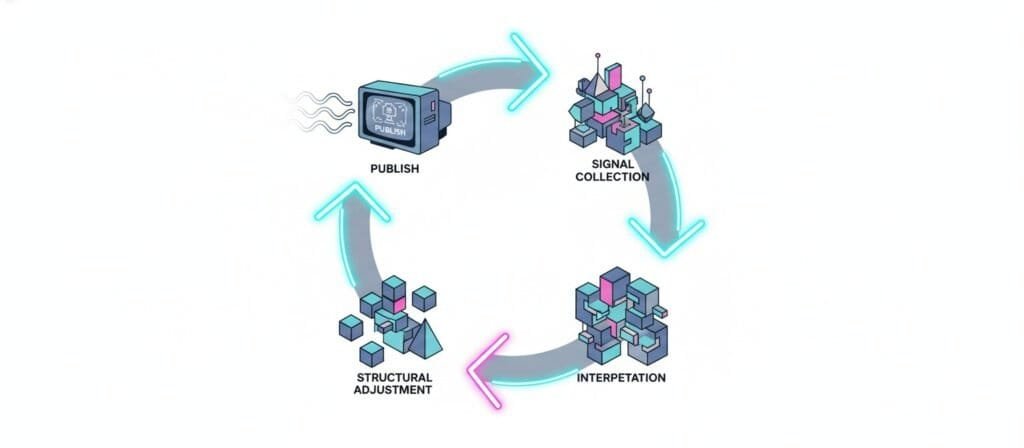

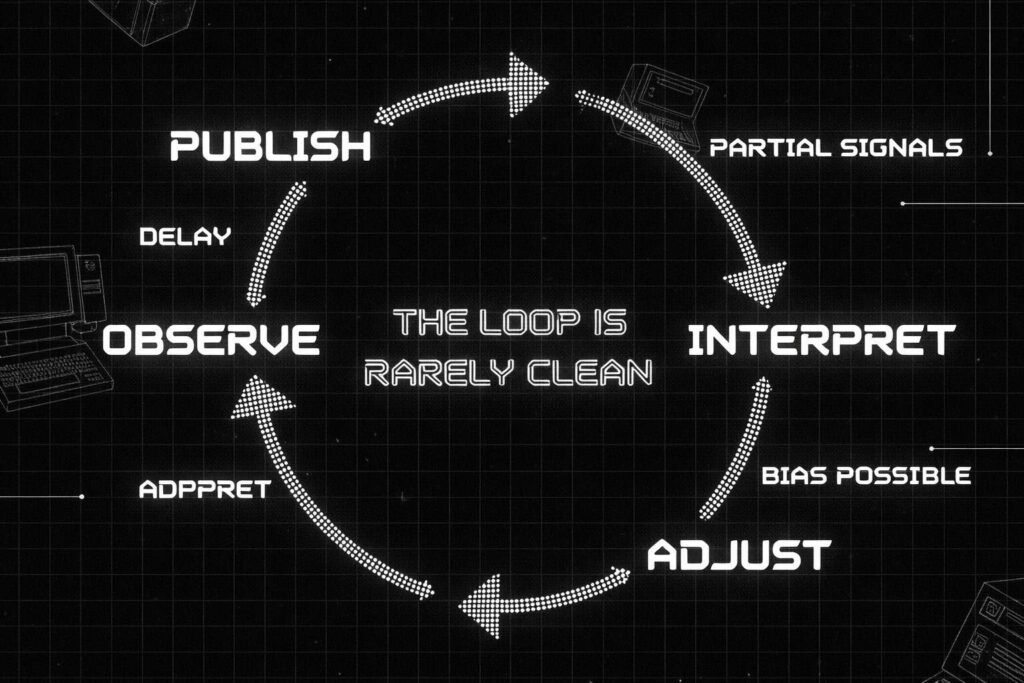

Basic loop workflow

| Stage | System Activity | Typical Constraint |
| --- | --- | --- |
| Publish | Content enters indexable space | Discoverability uncertainty |
| Observe | Interaction signals accumulate | Data incompleteness |
| Interpret | Meaning assigned to signals | Bias or misreading |
| Adjust | Structural or topical change | Delayed validation |

Iteration alone does not confirm loop presence. Publishing faster does not create meaningful signal flow. Many content pipelines appear cyclical but operate without measurable feedback.
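The distinction between a pipeline that merely cycles and one that actually closes a loop can be sketched in code. The model below is a minimal illustration, not a measurement method: `Stage`, `LoopCycle`, and the `impressions` key are hypothetical names chosen for this example, and "any non-zero signal" is an assumed stand-in for whatever evidence a real system would require.

```python
from dataclasses import dataclass, field
from enum import Enum


class Stage(Enum):
    PUBLISH = "publish"
    OBSERVE = "observe"
    INTERPRET = "interpret"
    ADJUST = "adjust"


@dataclass
class LoopCycle:
    """One pass through the four stages for a single page (hypothetical model)."""
    page: str
    observed_signals: dict = field(default_factory=dict)  # e.g. {"impressions": 140}
    interpretation: str = ""
    adjustment: str = ""

    def completed_stages(self) -> list:
        # A later stage only counts if every earlier stage produced something.
        stages = [Stage.PUBLISH]
        if any(value > 0 for value in self.observed_signals.values()):
            stages.append(Stage.OBSERVE)
            if self.interpretation:
                stages.append(Stage.INTERPRET)
                if self.adjustment:
                    stages.append(Stage.ADJUST)
        return stages

    def is_closed(self) -> bool:
        return self.completed_stages()[-1] is Stage.ADJUST


# An edit made with no observed signal is iteration, not feedback.
blind = LoopCycle("/post-a", {"impressions": 0}, "", "rewrote intro")
informed = LoopCycle("/post-b", {"impressions": 140}, "query mismatch", "retitled")
print(blind.is_closed())     # False
print(informed.is_closed())  # True
```

The first page was adjusted, but because nothing was observed or interpreted, the adjustment does not count as a closed loop in this model.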

For example, visibility absence discussed in Why AI Blogs Get Stuck at Zero Impressions illustrates situations where iteration occurs without system testing.

Why Feedback Loops Are Framed as Performance Drivers

Industry narratives often associate loops with improvement because several patterns are frequently observed:

- Shorter response time between action and observation

- Faster detection of mismatched intent

- Progressive refinement of topical coverage

These associations occur under certain conditions. They are not universal outcomes.

The reasoning tends to rely on analogies drawn from:

- skill learning cycles

- interface rendering feedback

- model response tuning

These comparisons highlight iteration density but usually omit deeper structural dependencies.

Important distinction

- Faster loops increase adaptation opportunity

- They do not ensure adaptation accuracy

Signal quality, interpretation accuracy, and ecosystem constraints play equally important roles.

Structural Constraints That Shape Loop Behavior

Feedback loops inside content ecosystems do not operate in isolation. They exist within layered systems where data collection, interpretation, and reinforcement are influenced by limitations that are not always visible to the publisher. Understanding these constraints helps explain why iteration sometimes produces little or inconsistent change.

Signal ambiguity

Interaction data rarely carries a single meaning. A long page visit might indicate deep engagement, confusion, comparison behavior, or simply passive reading without intent alignment. Scroll depth and dwell time can reflect attention, but they can also reflect hesitation or information mismatch.

Search systems interpret patterns probabilistically, not definitively. When ambiguous signals accumulate, optimization decisions based on surface interpretation can lead content away from relevance rather than toward it. This ambiguity is particularly noticeable in AI-generated environments, where fluent language can mask topical mismatch.

Noise amplification

Content ecosystems generate large volumes of weak signals. Minor engagement spikes, partial keyword alignment, or incidental traffic can appear meaningful when observed in isolation. Repeated adjustment based on these fragments reinforces patterns that may not represent stable user interest.

Over time, small distortions compound. Instead of refining topical clarity, the system begins adapting around noise. This often manifests as widening content scope, diluted subject positioning, or competing page signals that reduce overall authority coherence.
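One way to avoid adapting around noise is to compare a recent change against the page's own historical variation before acting on it. The sketch below is a crude heuristic under stated assumptions: the function name, the `z_threshold` of 2.0, and the `min_points` of 7 are illustrative choices for this example, not values taken from any search platform.

```python
from statistics import mean, stdev


def is_meaningful_change(baseline, recent, z_threshold=2.0, min_points=7):
    """Return True only if the recent average sits well outside baseline variation.

    baseline: earlier daily values (for example, clicks per day)
    recent:   the latest daily values being evaluated
    """
    if len(baseline) < min_points or not recent:
        return False  # too little history to separate signal from noise
    spread = stdev(baseline)
    if spread == 0:
        return mean(recent) != mean(baseline)
    z = (mean(recent) - mean(baseline)) / spread
    return abs(z) >= z_threshold


baseline = [10, 12, 9, 11, 10, 13, 11]
print(is_meaningful_change(baseline, [12, 11]))  # False: within normal variation
print(is_meaningful_change(baseline, [25, 27]))  # True: far outside the baseline
```

A check like this does not say what a change means. It only filters out fluctuations that are too small to justify an adjustment.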

Partial loop closure

A fully closed loop would provide clear visibility into how content influences ranking evaluation and user satisfaction. In practice, many parts of the system remain opaque. Search engines expose aggregated metrics rather than full evaluation signals, and platform-level interpretation layers remain hidden.

Because of this, publishers observe outcomes without seeing intermediate reasoning. Adjustments are made using incomplete context, which limits the precision of iteration. This partial visibility contributes to cycles where changes produce unclear or delayed feedback.

Temporal lag

Search ecosystems operate on observation windows rather than immediate response. Crawling frequency, indexing validation, ranking experimentation, and behavioral aggregation unfold over time.

This delay creates a disconnect between action and measurable response. Content may appear unchanged in performance not because adaptation failed, but because evaluation has not completed. Conversely, perceived improvements may result from earlier structural changes rather than recent edits.

Temporal lag introduces interpretation risk. When iteration speed exceeds system evaluation pace, causality becomes difficult to attribute accurately.
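The attribution problem can be made concrete with a small sketch. It assumes a fixed evaluation window of 28 days, which is a placeholder chosen for illustration since real evaluation periods are not published and vary by site. The function name `attributable_edit` is also hypothetical.

```python
from datetime import date, timedelta


def attributable_edit(edit_dates, observed_on, evaluation_window_days=28):
    """Return the latest edit whose evaluation window has fully elapsed, or None.

    Edits newer than the window are treated as not yet evaluated, so a change
    in performance seen on `observed_on` should not be credited to them.
    """
    window = timedelta(days=evaluation_window_days)
    matured = [edit for edit in edit_dates if edit + window <= observed_on]
    return max(matured) if matured else None


edits = [date(2024, 1, 1), date(2024, 2, 20)]
print(attributable_edit(edits, observed_on=date(2024, 3, 1)))  # 2024-01-01
```

Here the February edit is too recent to explain a change observed on March 1, so the earlier structural change is the more plausible candidate. Even then, this is a simplification: several matured edits may share responsibility.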

Interpretation bottlenecks

Even when signals are available, understanding them depends on human reasoning or model-driven summaries. Cognitive bias, expectation anchoring, and tool framing all influence conclusions. AI analysis tools also inherit training assumptions that shape their interpretations.

These layers filter raw signals into narratives. Decisions based on filtered narratives can diverge from underlying system behavior. This bottleneck explains why similar data sets often lead different teams to contradictory strategic adjustments.

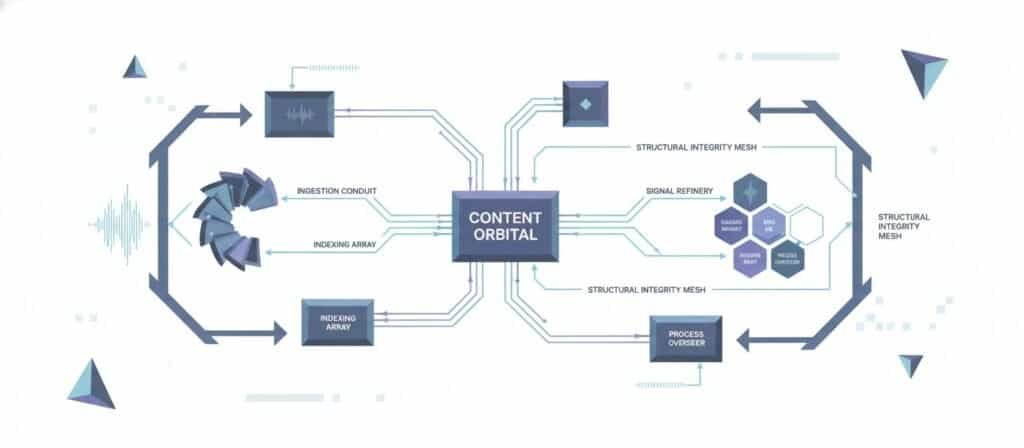

Fragmentation in Real-World Loop Construction

Observed practitioner workflows often concentrate automation upstream.

Common integration points:

- research acceleration

- topic clustering

- draft generation

Less integration appears in:

- signal modeling

- loop validation

- structural adaptation

This produces fragmented loop architecture where feedback is collected but not fully processed.

Typical pipeline structure

- Idea sourcing

- Content production

- Publication

- Surface metrics review

Missing layers frequently include:

- semantic signal mapping

- cross-page reinforcement modeling

- iteration governance

Understanding automation mechanics, explored in Mechanism of AI Automation, helps contextualize why fragmentation occurs.

Failure Modes in Content Feedback Systems

Feedback loops are often presented as self-correcting mechanisms. In reality, they can degrade, stall, or redirect system behavior under specific conditions. These failure modes emerge from structural interactions rather than isolated mistakes.

Loop absence

In some environments, iteration occurs without generating meaningful response signals. This happens when content fails to reach testing thresholds, when indexing does not occur, or when topical alignment does not intersect active search demand.

The system continues publishing and adjusting, yet no behavioral reinforcement emerges. Without response signals, adaptation becomes speculative. This pattern is frequently observed in automated publishing pipelines that prioritize output volume over structural integration.
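The conditions for loop absence described above can be expressed as a simple classification. This is a sketch under assumptions: `PageStatus`, `loop_state`, and the `min_impressions` threshold of 50 are hypothetical, and the input values would need to come from whatever reporting tool a site already uses.

```python
from dataclasses import dataclass


@dataclass
class PageStatus:
    url: str
    indexed: bool
    impressions: int  # impressions over the chosen reporting period


def loop_state(page, min_impressions=50):
    """Label whether a page is producing enough response to adapt against."""
    if not page.indexed:
        return "absent: not indexed"
    if page.impressions == 0:
        return "absent: no exposure"
    if page.impressions < min_impressions:
        return "weak: below testing threshold"
    return "active"


pages = [
    PageStatus("/guide", indexed=True, impressions=420),
    PageStatus("/draft-17", indexed=False, impressions=0),
    PageStatus("/listicle", indexed=True, impressions=0),
]
for page in pages:
    print(page.url, "->", loop_state(page))
# /guide -> active
# /draft-17 -> absent: not indexed
# /listicle -> absent: no exposure
```

Pages labelled absent have no response signal yet, so further edits to them are speculative rather than feedback-driven.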

Loop distortion

Signals may exist but misrepresent underlying user intent. Metrics influenced by accidental traffic, ambiguous queries, or unrelated navigation behavior can produce misleading optimization direction.

When adaptation responds to distorted signals, content drifts away from topical coherence. Instead of strengthening authority alignment, iteration increases fragmentation. Distortion tends to develop gradually and is often difficult to detect without long-horizon observation.

Loop stagnation

A loop may operate continuously yet produce minimal system state change. Signals reinforce existing patterns without introducing variation.

This occurs when content structures repeat familiar templates, when topical expansion remains confined to narrow semantic ranges, or when user behavior stabilizes around limited engagement types. Stagnation does not present as failure but as a plateau, where activity persists without meaningful evolution.

Loop acceleration imbalance

Rapid iteration can exceed the ecosystem’s capacity to validate outcomes. Frequent structural edits, continuous publishing, or aggressive optimization cycles generate overlapping evaluation windows.

Because responses have not fully materialized, subsequent adjustments build on incomplete understanding. The loop becomes unstable, reacting faster than signals can mature. This imbalance creates oscillating performance patterns rather than progressive refinement.
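Overlapping evaluation windows can be detected from an edit log alone. The sketch below flags consecutive edits made before the earlier edit's window had closed. The 28-day window and the function name `overlapping_edits` are assumptions made for illustration.

```python
from datetime import date, timedelta


def overlapping_edits(edit_dates, evaluation_window_days=28):
    """Return consecutive edit pairs where the second landed inside the first's window."""
    window = timedelta(days=evaluation_window_days)
    ordered = sorted(edit_dates)
    return [(a, b) for a, b in zip(ordered, ordered[1:]) if b - a < window]


log = [date(2024, 1, 1), date(2024, 1, 10), date(2024, 3, 1)]
for first, second in overlapping_edits(log):
    print(f"{second} was made before {first} could be evaluated")
# 2024-01-10 was made before 2024-01-01 could be evaluated
```

A long list of flagged pairs suggests the loop is reacting faster than signals can mature, which is the condition that tends to produce oscillation.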

Dependency collapse

Feedback loops often rely on external signal sources such as search exposure, referral traffic, or ecosystem trends. When these sources shift or decline, reinforcement patterns weaken or disappear.

Content systems built on narrow dependency channels can experience abrupt behavioral change. Without diversified signal input, adaptation mechanisms lose orientation. Collapse here does not indicate internal malfunction but environmental transition.

Implications for Visibility Understanding

Search exposure emerges from interaction between:

- content structure

- topical reinforcement

- system evaluation

- competing ecosystem signals

Feedback loops influence visibility but do not control it directly.

This perspective shifts emphasis from optimization narratives toward systemic interaction awareness. Visibility becomes an emergent property rather than a deterministic outcome.

If your site is publishing consistently but authority isn't compounding, the full analysis in Why Autoblogging Destroys Topical Authority Over Time explains why.

Frequently Asked Questions

What is the 30% rule in AI?

The “30% rule” in AI is not an established technical principle. It is an informal idea suggesting AI contributes part of the output while human judgment completes and validates it. Because workflows differ widely, no fixed percentage guideline is recognized. It should be viewed as conversational shorthand rather than a defined methodology.

Is AI content ok for SEO?

AI-generated content can be acceptable for search visibility when it is relevant, useful, and structurally aligned with user intent. Performance depends on integration within a coherent content system rather than the use of AI alone. Issues typically arise from duplication, shallow topical coverage, or weak structural signals.

What is the 10 20 70 rule for AI?

The “10 20 70 rule” is an informal workflow concept, not a standardized model. It usually describes effort distribution across planning, configuration, and refinement, emphasizing that most progress occurs during iteration and adjustment. The ratio varies by context and should be treated as a conceptual reminder rather than a fixed rule.

What is a feedback loop in AI?

A feedback loop in AI is a process where outputs generate signals that influence future behavior. This involves producing results, observing responses, interpreting signals, and adjusting actions accordingly. In content systems, these loops shape adaptation based on interaction and system evaluation patterns.

References and Further Reading

- Google Search Central—Creating helpful content: https://developers.google.com/search/docs/fundamentals/creating-helpful-content

- Google Search Central—How Search Works: https://developers.google.com/search/docs/fundamentals/how-search-works

- Google Search—How ranking results are determined: https://www.google.com/search/howsearchworks/how-search-works/ranking-results/

- Stanford Information Retrieval Book—Web crawling and indexing: https://nlp.stanford.edu/IR-book/html/htmledition/web-crawling-and-indexing-1.html

- Language Models are Few-Shot Learners (GPT-3 paper, arXiv): https://arxiv.org/abs/2005.14165

Conclusion

The AI Content Feedback Loop (SEO) is commonly described as a cycle of creation, measurement, and refinement. A closer examination reveals a more complex structure shaped by signal ambiguity, interpretation limits, and ecosystem dynamics.

Iteration contributes to adaptation only when signals are interpretable and structural alignment supports reinforcement. Fragmented loops, distorted feedback, or delayed validation often explain why automated content ecosystems behave unpredictably.

Understanding these interactions provides a grounded lens for evaluating content performance and long-term visibility behavior within the AI Content Feedback Loop (SEO).

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
