AI Writing vs Content Systems

Quick Answer

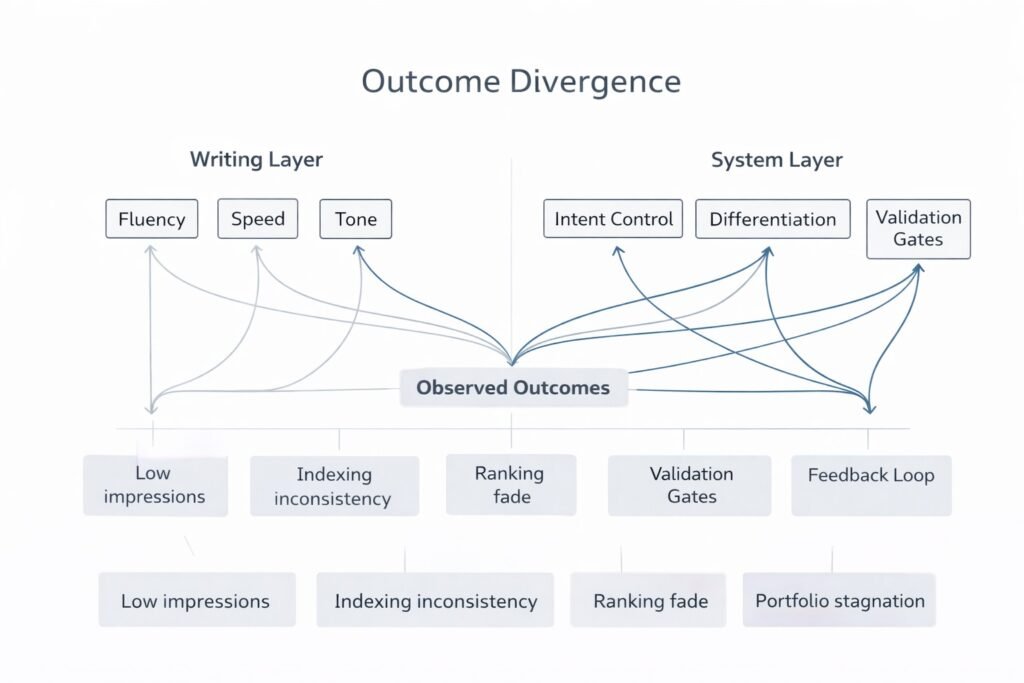

The AI writing vs content systems comparison describes two different layers of digital publishing. AI writing produces text from prompts, while content systems coordinate intent, structure, validation, and feedback across many pages. Outcomes often diverge because writing generates artifacts, whereas systems shape how those artifacts interact with search signals, indexing behavior, and authority accumulation.

Why This Comparison Matters

Many builders assume that producing readable text leads directly to visibility or growth. That assumption usually forms when AI tools generate clean paragraphs quickly and consistently.

In practice, confusion appears later:

- Pages exist but gain no impressions

- Articles remain indexed yet ignored

- Rankings appear briefly and fade

- Content portfolios feel active but stagnant

These outcomes are commonly associated with structural conditions rather than writing quality alone.

If you have seen similar patterns, you may recognize them from analyses discussing:

- how AI websites fail after launch

- why AI blogs get stuck at zero impressions

- AI content sites getting no index after publishing

- Why do AI websites stop ranking

- how AI content automation works in practice

- AI Content Feedback Loop (SEO)

Each of those reflects system behavior, not writing failure.

AI Writing vs Content Systems Explained at a Structural Level

AI Writing Operates as a Function

AI writing performs a focused task:

- transforms prompts into language

- predicts patterns from training data

- produces coherent text sequences

- optimizes for readability and tone

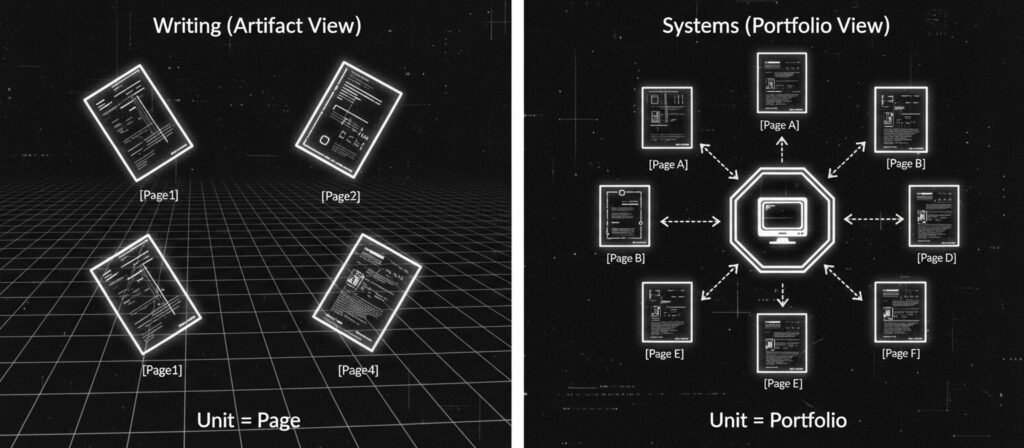

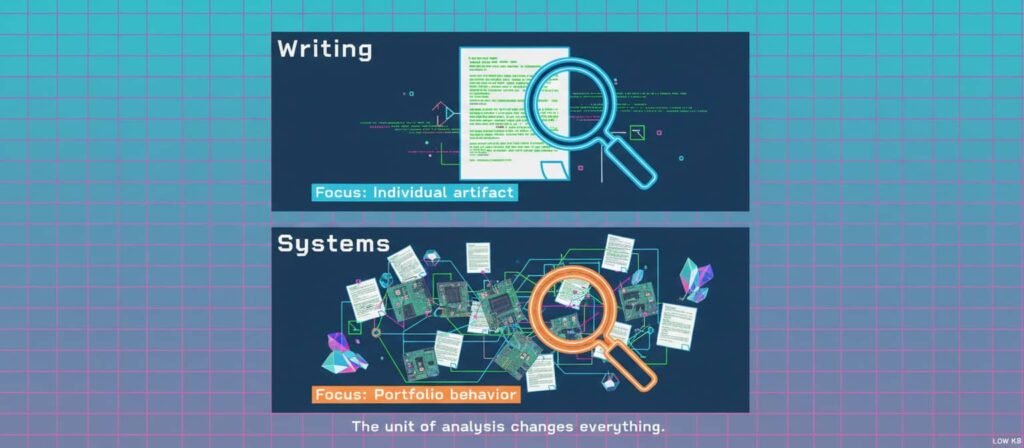

Its unit of work is a single artifact, such as:

- an article

- a paragraph

- a caption

- a description

Success tends to be judged by:

- fluency

- clarity

- speed

- grammatical accuracy

This layer does not inherently manage:

- topical positioning

- portfolio relationships

- signal reinforcement

- publication timing

Those sit outside its operational scope.

Content Systems Operate as Environments

Content systems coordinate multiple stages:

- intent assignment

- structural placement

- validation gates

- distribution decisions

- performance feedback

The unit of work becomes a portfolio rather than a page.

Evaluation tends to consider:

- differentiation between pages

- interaction across topics

- reinforcement signals

- long-term consistency

This coordination role influences how content interacts with:

- search indexing pipelines

- ranking experimentation

- relevance interpretation

Structural Contrast Table

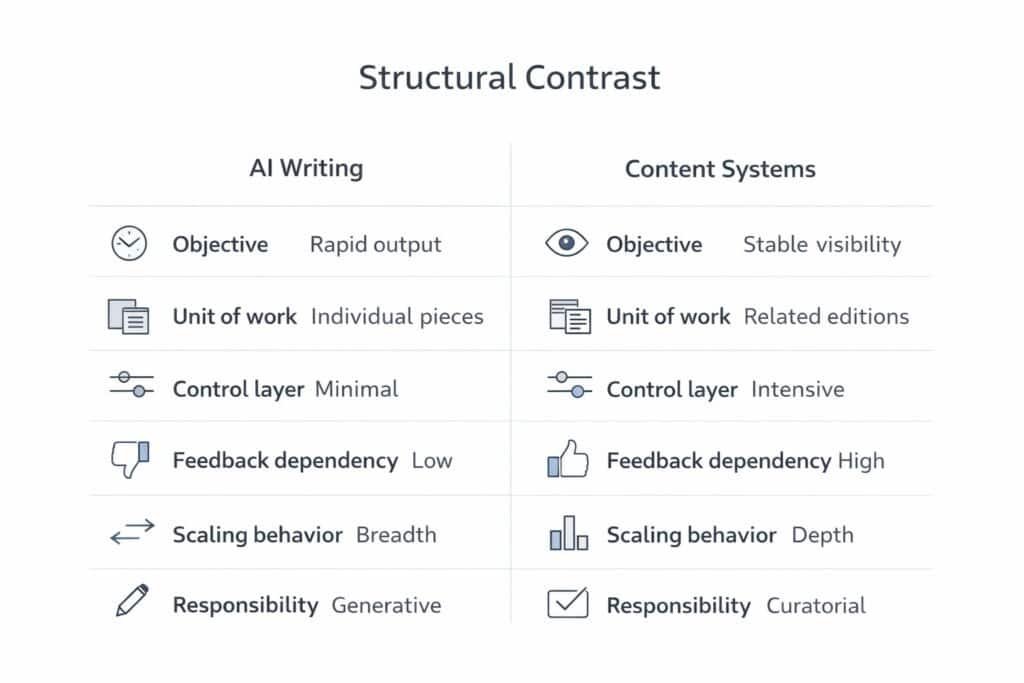

| Dimension | AI Writing | Content Systems |
| --- | --- | --- |
| Primary objective | Generate text output | Coordinate publishing environment |
| Unit of work | Individual artifact | Portfolio behavior |
| Control layer | Prompt inputs | Structural orchestration |
| Constraint model | Language probability | Intent and role boundaries |
| Feedback dependency | Minimal | Continuous |
| Typical failure mode | Generic tone | Signal confusion |
| Scaling behavior | Volume expansion | Relationship management |
| Search visibility impact | Indirect | Structural |
| Responsibility assignment | Local output quality | Lifecycle outcomes |

Mechanisms That Separate Outcomes

Control Layer Differences

Writing tools influence language tokens.

Systems influence placement, timing, and role assignment.

When orchestration is absent:

- output placement becomes random

- overlap increases

- signals weaken

Containment and Drift

Pattern generation often averages common structures.

Without boundaries:

- tone homogenizes

- topical edges blur

- differentiation weakens

Containment mechanisms in systems attempt to preserve clarity across pages.
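One way to picture a containment check is as a small drift report run on each draft before it joins the portfolio. The sketch below is illustrative only: the `drift_report` helper, the term sets, and the sample sentence are assumptions made for this example, not a method documented in this analysis.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and split it into a set of simple word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def drift_report(draft: str, own_terms: set[str], sibling_terms: set[str]) -> dict:
    """Compare a draft against its own boundary and its siblings' boundaries.

    own_terms:     terms this page is expected to cover (assumed input)
    sibling_terms: terms reserved for neighbouring pages (assumed input)
    """
    words = tokens(draft)
    return {
        "missing_own": sorted(own_terms - words),
        "borrowed_from_siblings": sorted(sibling_terms & words),
    }


report = drift_report(
    "Indexing delays often follow thin internal linking, and rankings fade later.",
    own_terms={"indexing", "linking"},
    sibling_terms={"rankings", "automation"},
)
print(report)
# {'missing_own': [], 'borrowed_from_siblings': ['rankings']}
```

A borrowed term is not automatically a problem. The point of the sketch is that a boundary has to be written down somewhere before drift can be noticed at all.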

Feedback Integration

Search interaction signals arrive later:

- impressions

- clicks

- dwell patterns

Writing does not process these signals.

Systems often incorporate them into iteration.

This distinction tends to shape long-term behavior.
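As a rough illustration, a system might map late-arriving signals to an iteration step along these lines. The `PageSignals` fields, the thresholds, and the action labels are all assumptions chosen for the sketch; they are not values taken from this analysis or from any search platform.

```python
from dataclasses import dataclass


@dataclass
class PageSignals:
    """Hypothetical per-page metrics gathered some time after publication."""
    url: str
    impressions: int
    clicks: int


def next_action(page: PageSignals, min_impressions: int = 100, min_ctr: float = 0.01) -> str:
    """Translate observed signals into a next step (thresholds are assumed)."""
    if page.impressions == 0:
        return "check indexing and placement"
    if page.impressions < min_impressions:
        return "review intent and internal links"
    ctr = page.clicks / page.impressions
    if ctr < min_ctr:
        return "revise title and summary"
    return "keep and monitor"


pages = [
    PageSignals("/a", impressions=0, clicks=0),
    PageSignals("/b", impressions=40, clicks=2),
    PageSignals("/c", impressions=900, clicks=3),
    PageSignals("/d", impressions=900, clicks=45),
]
for p in pages:
    print(p.url, "->", next_action(p))
```

A writing tool has no equivalent of `next_action`: it never sees the numbers, so it cannot respond to them.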

Differentiation Pressure

Portfolio similarity frequently correlates with:

- intent overlap

- keyword competition

- authority fragmentation

Writing volume may unintentionally amplify similarity.

Systems attempt to moderate distribution.
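Portfolio similarity can be approximated in many ways. The sketch below uses Jaccard overlap between each page's target terms as one simple option; the URLs, term sets, and the 0.5 threshold are hypothetical and serve only to show the shape of the check.

```python
from itertools import combinations


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of terms two pages have in common (0.0 disjoint, 1.0 identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlapping_pairs(portfolio: dict[str, set[str]], threshold: float = 0.5) -> list[tuple]:
    """Return (page, page, score) for every pair at or above the threshold."""
    flagged = []
    for u1, u2 in combinations(sorted(portfolio), 2):
        score = jaccard(portfolio[u1], portfolio[u2])
        if score >= threshold:
            flagged.append((u1, u2, round(score, 2)))
    return flagged


# Hypothetical portfolio: URL -> target terms assigned to that page
portfolio = {
    "/ai-blog-zero-impressions": {"ai", "blog", "impressions", "indexing"},
    "/ai-site-not-indexed": {"ai", "site", "indexing", "impressions"},
    "/content-feedback-loop": {"feedback", "loop", "signals"},
}
print(overlapping_pairs(portfolio))
# [('/ai-blog-zero-impressions', '/ai-site-not-indexed', 0.6)]
```

Each added page increases the number of pairs to compare, which is one reason volume alone tends to raise overlap unless something moderates it.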

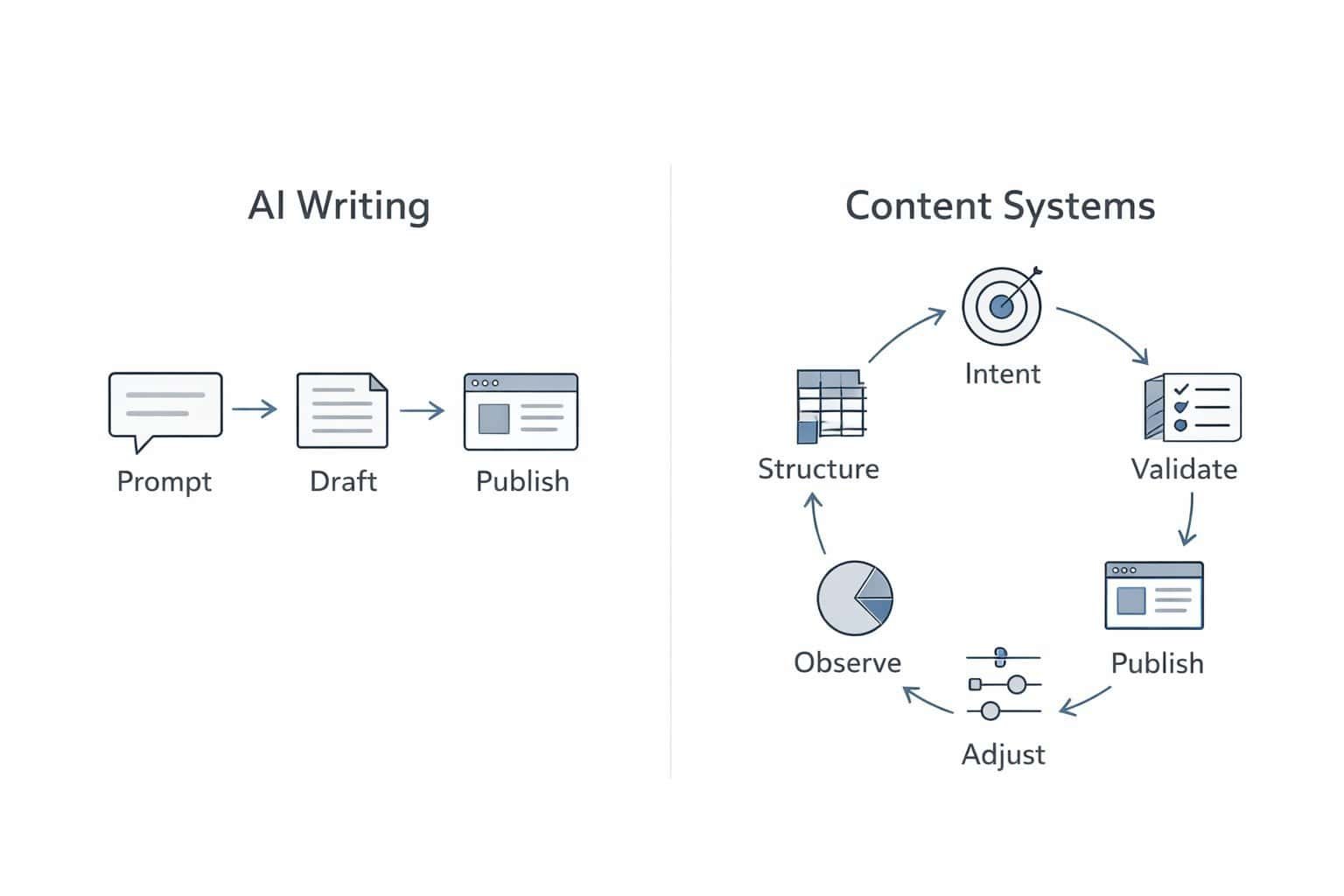

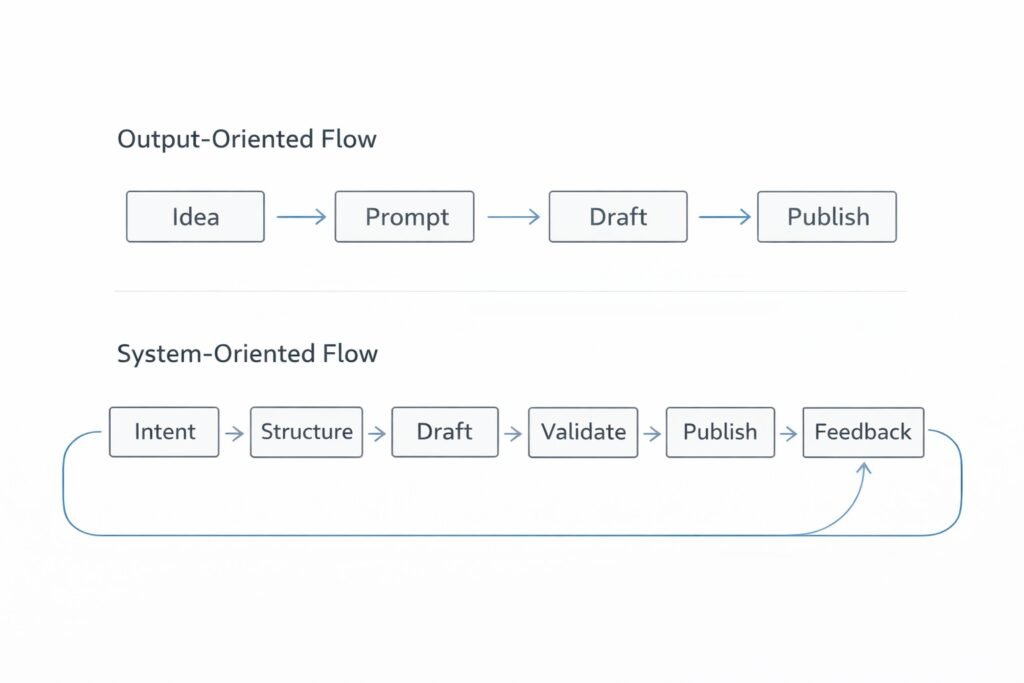

Workflow Contrast

Output-Oriented Flow

Idea

→ Prompt

→ Draft

→ Publish

System-Oriented Flow

Intent Assignment

→ Structural Mapping

→ Draft Generation

→ Validation Gate

→ Publication

→ Feedback Observation

→ Iterative Adjustment
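The system-oriented flow can be sketched as a small pipeline in which publication depends on a gate rather than on the draft simply existing. Everything here is an assumption made for illustration: the `Page` fields, the stage functions, and the two gate rules. Feedback observation and iterative adjustment happen after publication and are left out of the sketch.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    intent: str                      # one assigned intent per page
    cluster: str = ""                # structural placement in the portfolio
    draft: str = ""
    published: bool = False
    notes: list[str] = field(default_factory=list)


def map_structure(page: Page, clusters: dict[str, str]) -> Page:
    """Structural mapping: place the page in a cluster, if one is defined."""
    page.cluster = clusters.get(page.intent, "unassigned")
    return page


def generate_draft(page: Page) -> Page:
    """Draft generation: stand-in for whatever writing tool is used."""
    page.draft = f"Draft covering: {page.intent}"
    return page


def validation_gate(page: Page, taken_intents: set[str]) -> bool:
    """Validation gate: pass only pages with a placement and a unique intent."""
    if page.cluster == "unassigned":
        page.notes.append("no structural placement")
    if page.intent in taken_intents:
        page.notes.append("intent already covered")
    return not page.notes


def run(intent: str, clusters: dict[str, str], taken_intents: set[str]) -> Page:
    page = generate_draft(map_structure(Page(intent=intent), clusters))
    if validation_gate(page, taken_intents):
        page.published = True
        taken_intents.add(intent)
    return page


clusters = {"why ai blogs get no impressions": "diagnostics"}
taken: set[str] = set()
first = run("why ai blogs get no impressions", clusters, taken)
second = run("why ai blogs get no impressions", clusters, taken)
print(first.published, second.published, second.notes)
# True False ['intent already covered']
```

In the output-oriented flow the second draft would have been published as well, because nothing in that flow knows the first page exists.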

What This Page Does Not Cover

To preserve role clarity, this page does not discuss:

- tool comparisons

- workflow setup guides

- SEO tactics or keyword lists

- platform recommendations

Those topics belong in evaluation or system implementation layers.

Implication Shift

The AI writing vs content systems distinction points to a useful mental shift.

Text generation alone rarely determines digital performance.

Stagnation tends to emerge where:

- orchestration is absent

- feedback loops are missing

- structural intent is unclear

Writing produces pages.

Systems shape interactions between pages.

This distinction often reframes how builders interpret stagnation.

Next Step

If you want to understand the mechanics behind automated publishing environments, continue with the analysis explaining how content automation operates at the system level.

Frequently Asked Questions

What is the difference between AI and a content writer?

AI generates language patterns from data inputs, while human writers contribute contextual judgment, interpretation, and experiential framing. In many environments both operate together, with AI assisting production and humans shaping direction.

Can AI replace content writing?

Replacement outcomes vary across contexts. AI often assists drafting or ideation, yet editorial oversight, narrative direction, and contextual sensitivity remain associated with human involvement in many workflows.

What is the 30% rule in AI?

Interpretations differ. The phrase is sometimes used informally to describe maintaining a share of human contribution within AI-assisted outputs. It is not a standardized industry rule and lacks a universal definition.

Is content writing still in demand after AI?

Demand patterns shift rather than disappear. Environments that value strategy, differentiation, and interpretation often still require human contribution, especially where coordination across portfolios matters.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
