Quick Answer

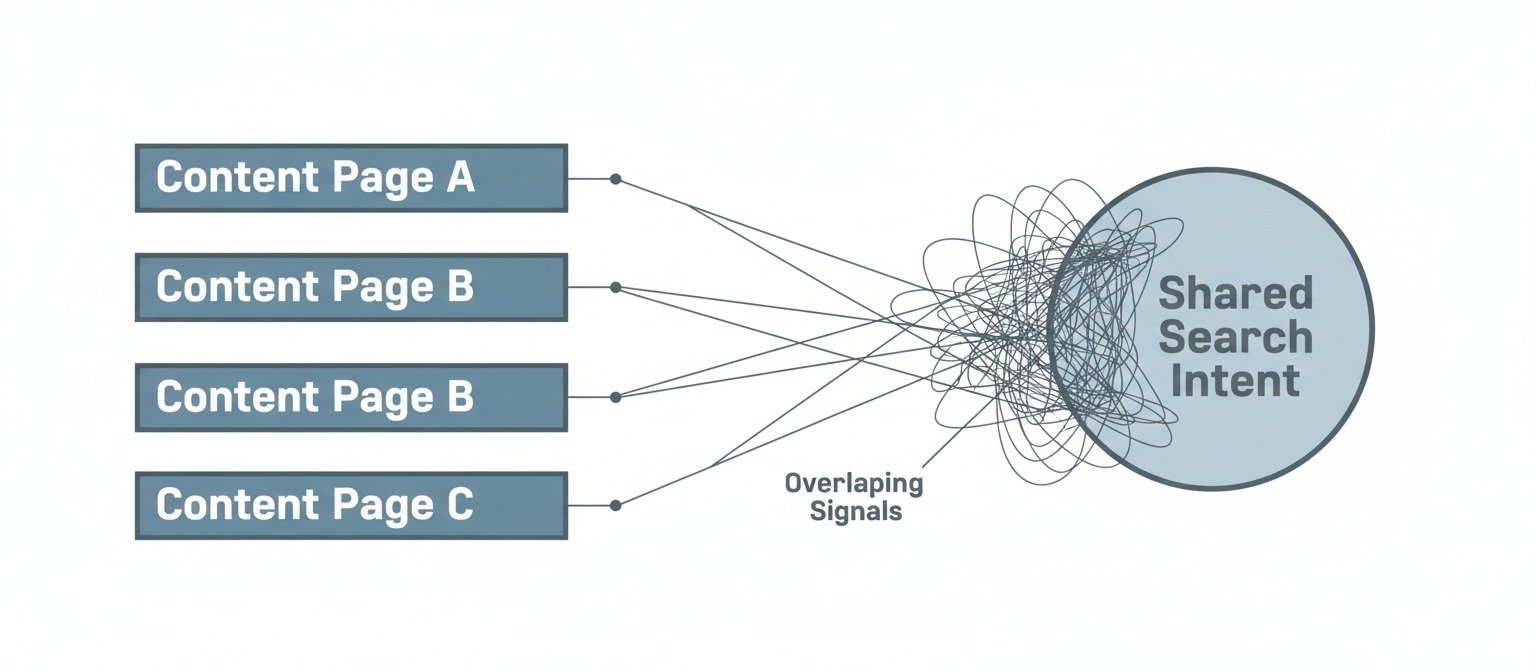

AI content cannibalization issues occur when multiple AI-generated pages unintentionally target the same search intent, causing authority to split instead of consolidate. This problem usually starts at the system and structure level, not at the keyword level. As content scales, overlap grows quietly and visibility becomes unstable.

AI Content Cannibalization Issues

AI content cannibalization issues often appear when a website keeps publishing, but visibility and authority never settle in one place. Pages get indexed. Articles go live. Traffic graphs stay flat or become unstable over time.

Most people view this as a keyword problem. Field experience suggests something else. AI content cannibalization issues usually start before keywords become a problem. They originate inside the publishing system itself, not only inside search results.

This article explains AI content cannibalization issues as a mechanism-level system behavior, not as a simple SEO mistake.

What Content Cannibalization Is Commonly Assumed to Be

Most explanations describe content cannibalization in familiar terms:

- Multiple pages target the same keyword

- Rankings fluctuate

- Search engines cannot choose a clear winner

- Authority gets divided across URLs

This description is not wrong. It is incomplete.

It describes what happens, not why it happens.

That is why many AI-driven websites continue publishing after launch but never reach stable growth. This pattern appears even on technically sound sites. We observed similar behavior while analyzing why AI websites fail after launch despite consistent publishing.

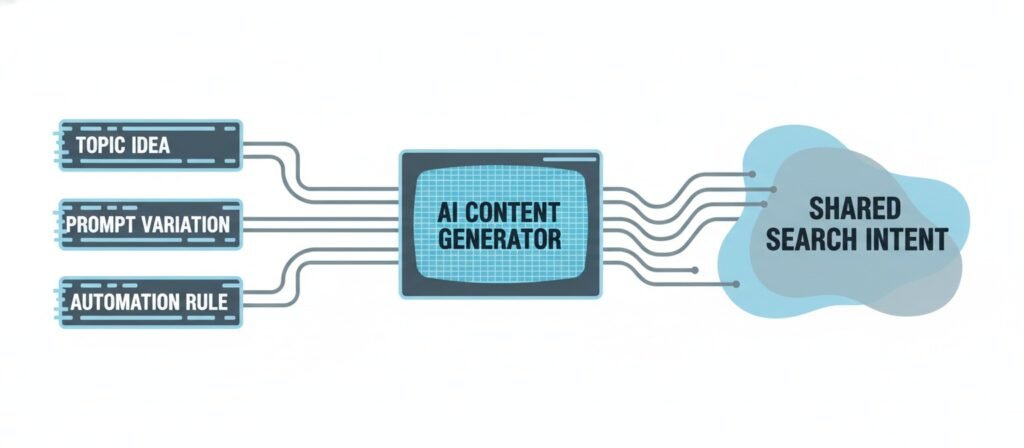

Why AI-Generated Content Is More Prone to Cannibalization

AI content cannibalization issues are more common in systems with heavy automation. AI tools treat each prompt as a new opportunity. The system usually has no awareness of:

- Which intent is already covered

- Which page owns which role

- Where internal overlap already exists

AI writes. The system does not decide.

This difference becomes clearer when separating AI writing vs. content systems. Writing produces text. Systems allocate intent and authority.

When intent allocation is missing, cannibalization becomes likely.

The Intent Replication Mechanism

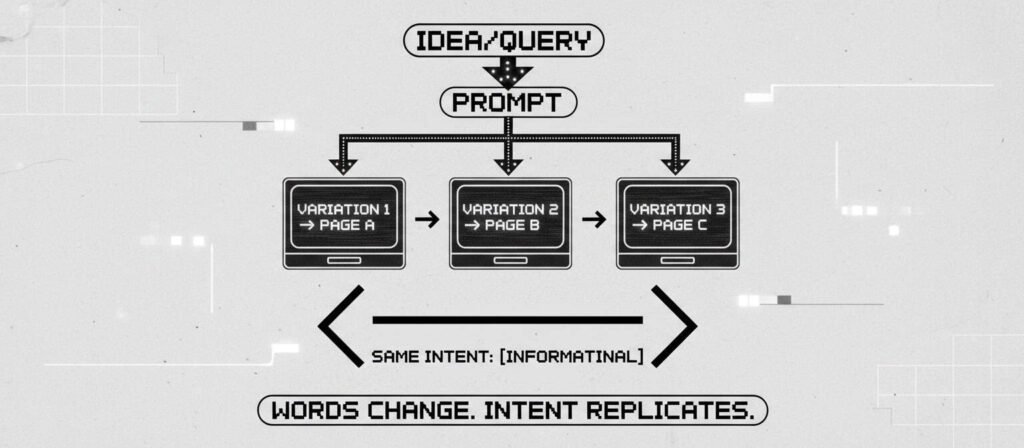

The core driver behind AI content cannibalization issues is intent replication.

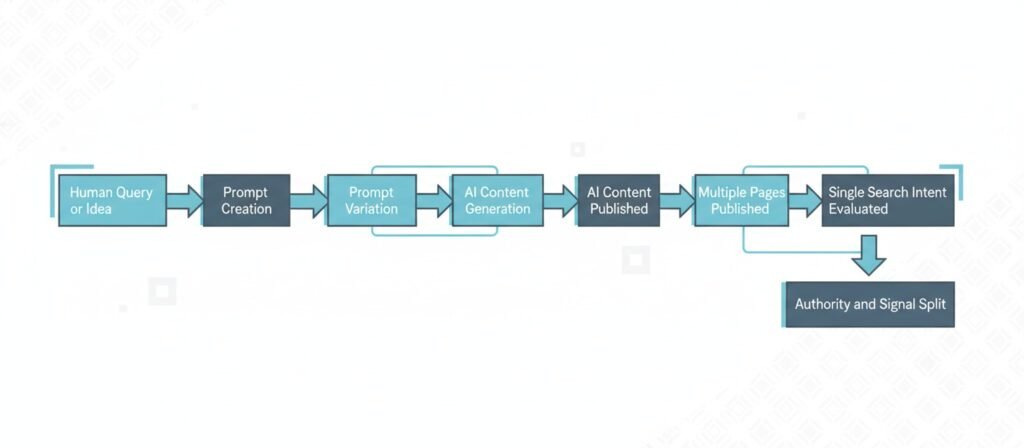

This workflow appears repeatedly across AI publishing systems:

- A human idea or query is identified

- The idea is converted into a prompt

- Prompt variations are created

- AI generates a new page for each variation

The wording changes.

The intent usually does not.

What actually replicates

| Layer | Changes | Stays the same |
|---|---|---|
| Words | Yes | No |
| Structure | Sometimes | Often |
| Search intent | No | Always |
| User expectation | No | Always |

Cannibalization starts here. Before ranking. Before indexing.
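The replication step can be sketched in a few lines of Python. This is a minimal illustration, not a real publishing pipeline: the `Draft` type, the `TEMPLATES` list, and the intent label `define-cannibalization` are all hypothetical names invented for the example. It assumes each prompt variation only rephrases the idea and passes the intent through untouched.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Draft:
    title: str
    intent: str  # the search intent the page would serve (hypothetical label)


# Hypothetical phrasing templates standing in for "prompt variations".
TEMPLATES = ["What is {idea}?", "{idea} explained", "A guide to {idea}"]


def generate_drafts(idea: str, intent: str) -> list[Draft]:
    """Create one draft per prompt variation; wording differs, intent is inherited."""
    return [Draft(title=t.format(idea=idea), intent=intent) for t in TEMPLATES]


drafts = generate_drafts("AI content cannibalization", "define-cannibalization")
distinct_titles = {d.title for d in drafts}
distinct_intents = {d.intent for d in drafts}

# Three different titles, but only one intent behind them.
print(len(distinct_titles), "titles,", len(distinct_intents), "intent")
```

Nothing in `generate_drafts` ever asks whether the intent is already served. That missing question is the mechanism described above.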

This is one reason many AI blogs remain stuck at zero impressions even when the content looks fine.

Intent vs Page Role Collision

Multiple pages appear distinct at the topic level but collapse into the same search intent at evaluation time.

| Page URL | Primary Topic | Intended Role | Actual Search Intent | Collision Type |
|---|---|---|---|---|
| Page A | AI content scaling | Explainer | Informational | Direct overlap |
| Page B | AI content issues | Analysis | Informational | Role duplication |
| Page C | AI SEO problems | Overview | Informational | Intent convergence |
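The table can also be read as data. The sketch below reuses its three rows and flags every pair of pages that resolves to the same search intent. The `Page` type and the `intent_collisions` function are hypothetical names. The equality check on the intent label is an assumption too: a real system would likely need finer-grained intent labels than "Informational".

```python
from dataclasses import dataclass
from itertools import combinations


@dataclass(frozen=True)
class Page:
    url: str
    topic: str
    role: str    # the role the page was intended to play
    intent: str  # the search intent it actually resolves to


# Rows taken from the table above.
PAGES = [
    Page("Page A", "AI content scaling", "Explainer", "Informational"),
    Page("Page B", "AI content issues", "Analysis", "Informational"),
    Page("Page C", "AI SEO problems", "Overview", "Informational"),
]


def intent_collisions(pages):
    """Return every pair of pages whose actual search intent is identical."""
    return [(a.url, b.url) for a, b in combinations(pages, 2) if a.intent == b.intent]


for first, second in intent_collisions(PAGES):
    print(first, "collides with", second)
```

The topics and intended roles differ on every row, yet all three pairs collide, because the comparison happens at the intent level.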

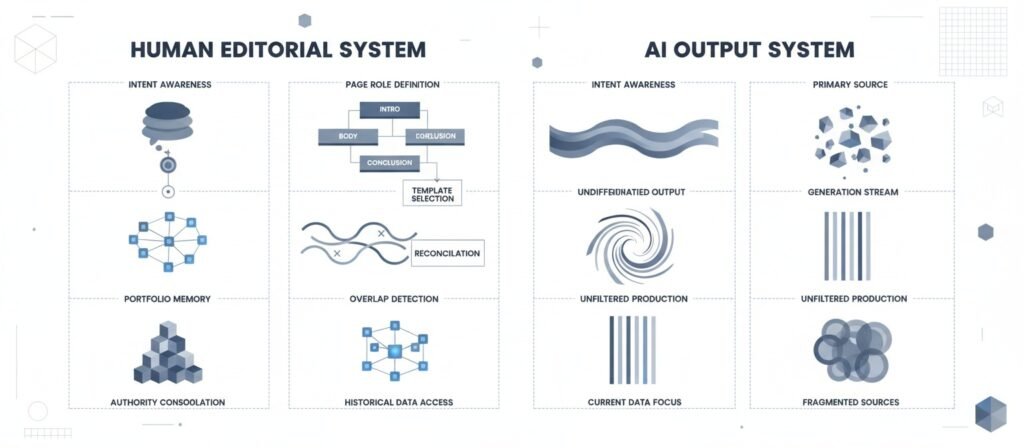

Structural Blindness in Automated Content Systems

Most AI publishing pipelines miss one critical layer.

Structural awareness.

These systems often lack:

- A global intent map

- Defined page roles

- Exclusion rules

- Conflict detection

Human editors sense overlap naturally. Automated systems require explicit structure.

When this layer is missing, the system cannot evaluate its own output. The content portfolio slowly fills with overlapping pages.
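To make the missing layer concrete, here is a minimal sketch of what explicit structure could look like. It is an illustration under stated assumptions, not a description of any existing tool: `IntentMap`, `IntentConflict`, the URLs, and the intent and role labels are all hypothetical. It assumes exactly one owning URL per intent and role pair.

```python
class IntentConflict(Exception):
    """Raised when a new page would claim an intent that already has an owner."""


class IntentMap:
    """Hypothetical portfolio-level registry: one owning URL per (intent, role)."""

    def __init__(self):
        self._owners = {}  # maps (intent, role) -> owning URL

    def claim(self, url, intent, role):
        """Register a page, or raise if another URL already owns this slot."""
        key = (intent, role)
        owner = self._owners.get(key)
        if owner is not None and owner != url:
            raise IntentConflict(f"{key} already owned by {owner}")
        self._owners[key] = url

    def owner(self, intent, role):
        """Return the owning URL for a slot, or None if it is unclaimed."""
        return self._owners.get((intent, role))


registry = IntentMap()
registry.claim("/what-is-cannibalization", "define-cannibalization", "explainer")

try:
    # A reworded page aimed at the same intent and role is detected, not published.
    registry.claim("/cannibalization-explained", "define-cannibalization", "explainer")
except IntentConflict as err:
    print("blocked:", err)
```

The dictionary plays the part of the global intent map, the key encodes the page role, and the raised exception stands in for conflict detection. A pipeline without any equivalent of `claim` has no point at which overlap can be noticed.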

This same blindness appears in cases where AI content sites fail to index after publishing.

Authority Dilution as a System Outcome

A visible outcome of AI content cannibalization issues is authority dilution.

Search engines evaluate relevance comparatively, not in isolation.

When multiple pages send the same intent signal:

- Authority spreads across the competing pages instead of concentrating on one

- No single page becomes dominant

- Signals fail to consolidate

Common outcomes include:

- Rotating rankings

- Multiple URLs swapping positions

- Lack of long-term stability

This pattern appears frequently in autoblogging environments, which is why we previously observed how autoblogging destroys topical authority over time.
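Dilution can be illustrated with a toy calculation. The sketch below uses a Herfindahl-style concentration index (the sum of squared shares), a measure borrowed from market-concentration analysis. It is not something search engines are known to compute, and the signal counts and URLs are invented for the example.

```python
def signal_shares(signals):
    """Normalize raw per-page signal counts into shares that sum to 1.

    Assumes a non-empty mapping with a positive total.
    """
    total = sum(signals.values())
    return {url: value / total for url, value in signals.items()}


def concentration(signals):
    """Sum of squared shares: 1.0 if one page holds everything, 1/n for an even split."""
    return sum(share ** 2 for share in signal_shares(signals).values())


# Hypothetical signal counts (for example links or clicks) for pages on one intent.
consolidated = {"/guide": 90, "/old-post": 10}
diluted = {"/a": 34, "/b": 33, "/c": 33}

print(round(concentration(consolidated), 2))  # one dominant page
print(round(concentration(diluted), 2))       # close to an even three-way split
```

Both portfolios hold the same total signal. Only its distribution differs, which is the sense in which dilution is a system outcome rather than a content-quality problem.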

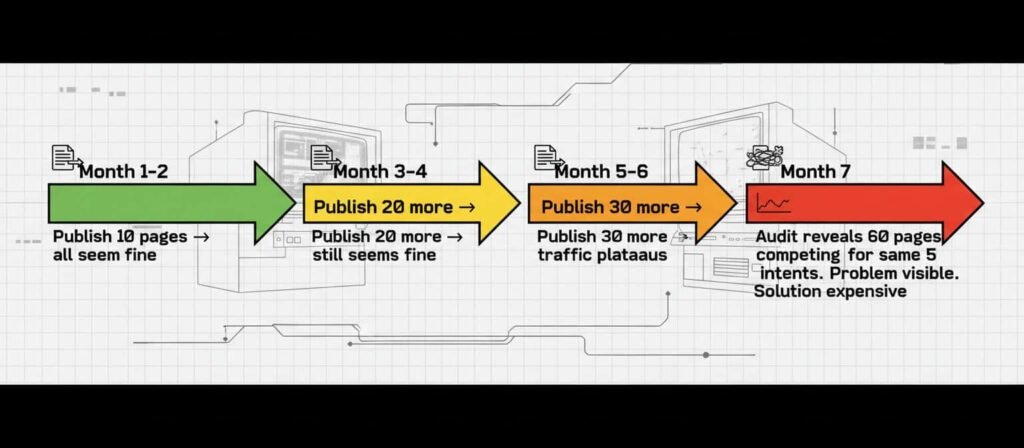

Why Cannibalization Often Appears Late

AI content cannibalization issues are rarely detected early.

In the initial phase:

- Content volume is low

- Competition is limited

- Signals are weak

Everything appears fine.

As content scales:

- Internal competition increases

- Search signals become ambiguous

- Priority resolution fails

Monitoring tools usually flag the issue after the damage has already accumulated. This creates the illusion of a sudden problem.
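Simple arithmetic helps explain the late appearance. If any two pages could in principle compete, the number of page pairs grows much faster than the number of pages. The sketch below computes that upper bound. The function name is hypothetical, and real overlap will only ever be a subset of these pairs.

```python
from math import comb


def potential_conflict_pairs(page_count: int) -> int:
    """Upper bound on page pairs that could compete: n choose 2."""
    return comb(page_count, 2)


for n in (10, 100, 1000):
    print(n, "pages ->", potential_conflict_pairs(n), "possible pairs")
```

A tenfold increase in pages produces roughly a hundredfold increase in possible pairs. Overlap that was negligible at low volume can therefore build up long before any monitoring threshold is crossed.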

Model Self-Cannibalization vs Content Cannibalization

A clear boundary matters here.

Model self-cannibalization and AI content cannibalization issues are related concepts, but they operate at different layers.

| Aspect | Model collapse | Content cannibalization |
|---|---|---|
| Layer | Training data | Publishing system |
| Actor | AI model | Website |
| Cause | Recursive training | Intent overlap |
| Outcome | Meaning loss | Authority split |

- A collapsing model does not mean your site is cannibalizing content.

- A cannibalizing site does not mean the AI model is failing.

Confusing these layers leads to incorrect diagnosis.

What This Article Does Not Cover

To maintain clarity, this article intentionally does not cover:

- Step-by-step fixes or optimization tactics

- Keyword mapping tutorials

- Tool-based audits or dashboards

- Redirect strategies or consolidation workflows

- AI model training or dataset contamination

Those topics address execution.

This article focuses only on system behavior and structural causes.

AI Content Cannibalization Issues as a Design Artifact

AI content cannibalization issues are rarely accidental. They are a predictable outcome of system design.

When a publishing system lacks:

- Intent governance

- Clear page roles

- Portfolio-level awareness

Overlap becomes inevitable.

AI accelerates the process.

The root problem exists before automation.

This broader behavior connects directly with how AI content automation actually works and how feedback signals distort over time, as shown in the AI content feedback loop in SEO.

Final observation:

Where intent is unmanaged, AI content cannibalization issues naturally emerge.

People Also Ask:

What is the problem with AI-generated content?

The most common problems show up when AI output is published at scale without strong editorial structure. You often see overlap between pages, weak differentiation, shallow sourcing, and inconsistent factual reliability. Over time, this can reduce trust signals and make it harder for search systems to treat any one page as the clear best answer.

What is content cannibalization?

Content cannibalization happens when multiple pages on the same site compete for the same search intent. Instead of one strong page accumulating signals, several similar pages split relevance, links, and engagement. The result is often unstable rankings, rotating URLs, or weaker overall visibility.

What is the 30% rule in AI?

There is no single, universal “30% rule in AI” that is broadly accepted across the industry. People use “30%” in different contexts, such as internal editorial heuristics, compliance thresholds, or content change guidelines. If you saw it in a specific tool, policy, or course, the meaning depends on that source’s definition.

What are the problems with AI content moderation?

AI moderation can struggle with context, sarcasm, regional language, and fast-changing cultural references. It may also produce false positives, false negatives, and inconsistent enforcement across similar cases. These issues become more visible when moderation decisions are automated at high volume without clear escalation and review paths.

References

- Google Search Central, "Creating helpful, reliable, people-first content"

- Google Search Central, "Duplicate content guidance"

- Moz, "Keyword Cannibalization: What It Is and How to Fix It"

- Yoast, "Keyword and content cannibalization: how to identify and fix it"

- Nightwatch, "What Is Content Cannibalization and How to Fix It"

- Growth Memo – Kevin Indig, "Solving Keyword Cannibalization with AI"

- Google Search Central Blog, "Understanding page purpose and search intent"

- TechTarget, "AI cannibalism explained: a model failure"

- The Week, "AI is cannibalizing itself. And creating more AI."

These sources are provided to validate observed patterns around content overlap, intent ambiguity, authority dilution, and recursive system behavior.

They support the analysis without defining or constraining the system-level interpretation presented in this article.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
