Signs an AI Platform Is Overselling

Quick Answer

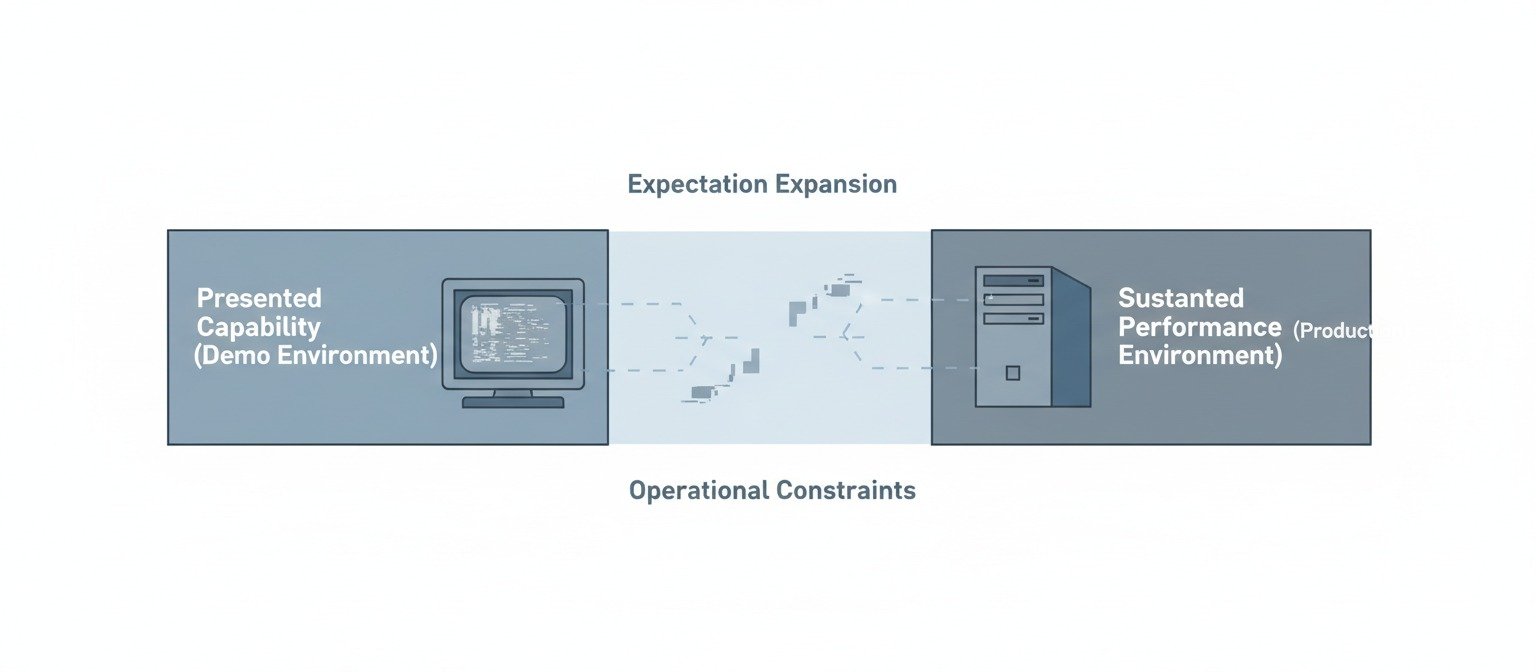

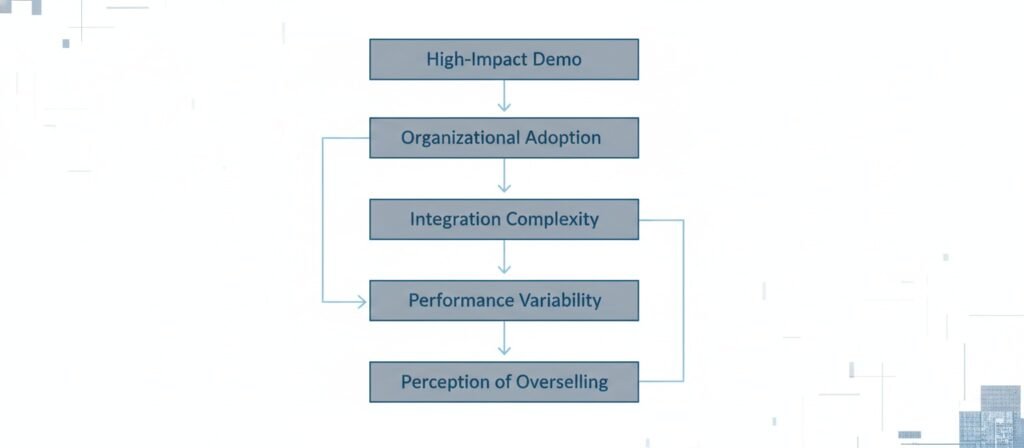

An AI platform is often perceived as overselling when there is a widening gap between its presented capabilities and its sustained real-world performance. This gap rarely emerges from a single misleading claim. Instead, it tends to arise from structural pressures within AI markets: rapid innovation cycles, venture-backed growth expectations, curated demonstration environments, category ambiguity, and incomplete feedback loops.

Incentives, Constraints, and Expectation Drift

In many cases, what appears as exaggeration is a predictable byproduct of incentive alignment. Marketing emphasizes peak performance under controlled conditions, while production environments introduce data variability, integration friction, and organizational constraints.

As expectations compound faster than operational stability, perception diverges from capability. The “signs” of overselling are therefore not merely red flags in messaging—they are observable symptoms of systemic tension between narrative acceleration and technical maturation.

This systemic tension explains why so many sites experience the post-launch decline documented in our analysis of why AI websites fail after launch: the narrative promised durability, but technical maturation was not yet complete.

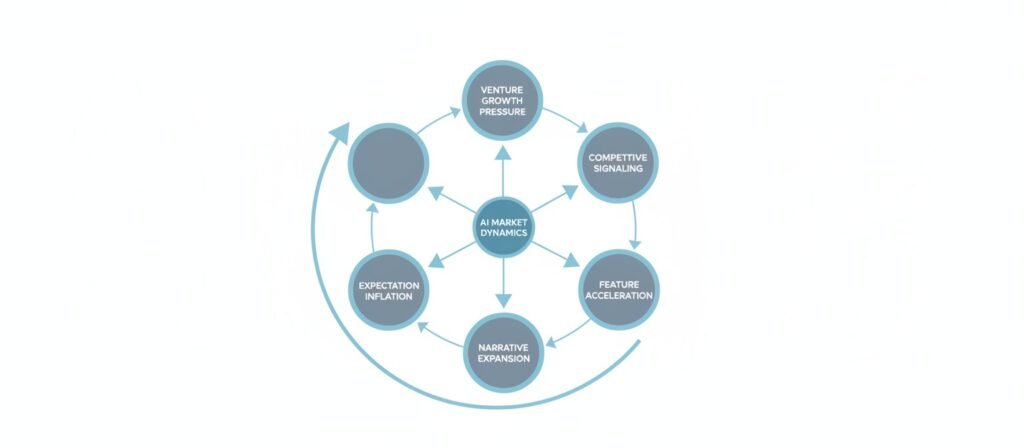

Incentive Structures in AI Markets

Venture Pressure and Growth Narratives

AI markets operate under unusually high expectations. Investment cycles often reward category leadership, speed of adoption, and perceived technological edge. In such environments, platforms are incentivized to frame their capabilities in forward-looking terms.

When funding, valuation, and competitive positioning depend on projected impact rather than mature infrastructure, messaging can lean toward potential rather than operational reliability.

This forward-leaning messaging directly affects whether you should trust AI website builders—trust requires stability, not just confidence.

This does not necessarily imply deliberate misrepresentation. It reflects a system in which narrative momentum often precedes technical consolidation.

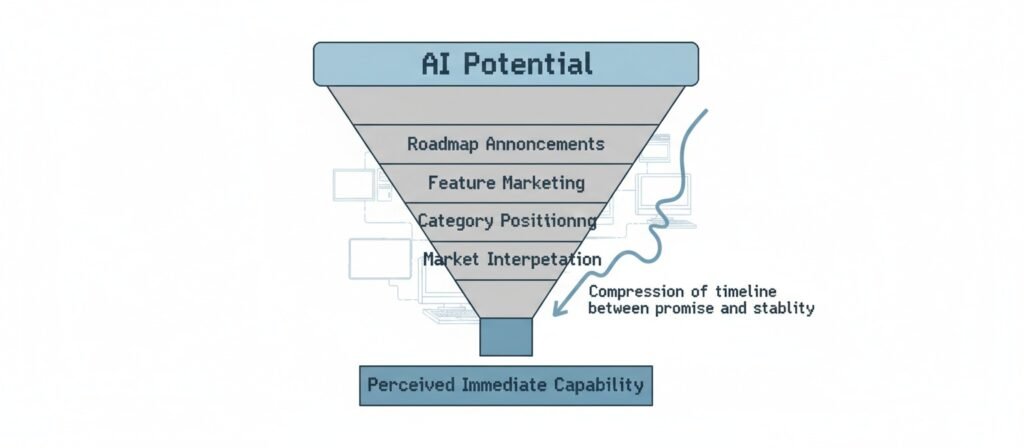

Category Creation and Label Elasticity

The term “AI platform” has become elastic. It can describe a foundational model provider, a workflow automation layer, a domain-specific interface, or a repackaged API with added controls. The boundaries are fluid.

In fluid categories, differentiation frequently occurs through language rather than architecture. Claims expand to occupy emerging market space. As labeling becomes broader, so does the surface area for perceived overselling. The more ambiguous the category, the easier it becomes for expectations to outpace constraints.

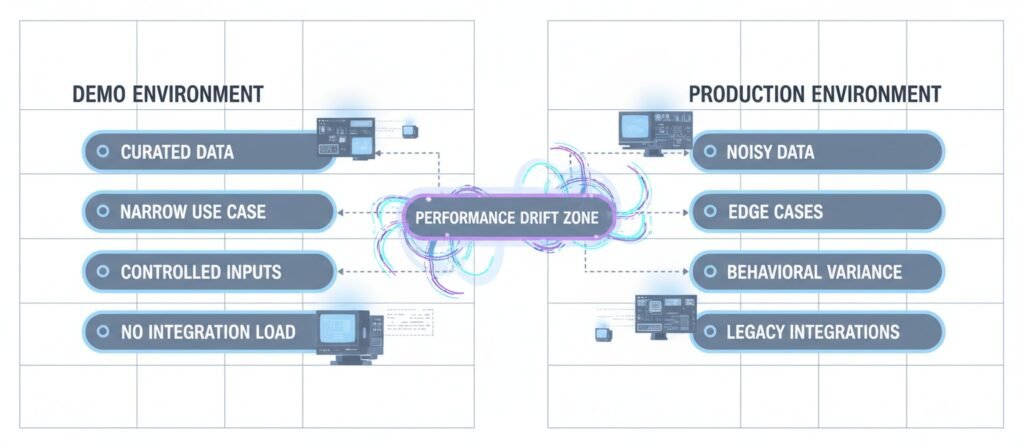

The Demo–Production Divergence

One of the most consistent signs of overselling appears in the transition from demonstration to deployment.

Controlled Inputs vs. Real-World Variability

Demonstrations typically rely on structured inputs, curated datasets, and constrained scenarios. These environments highlight best-case behavior. Real-world environments, by contrast, introduce noisy data, edge cases, inconsistent formatting, and legacy integrations.

AI systems, especially those dependent on probabilistic modeling, can degrade when exposed to input distributions that differ from their demonstration framing. What appears seamless in a controlled setting may require ongoing adjustment in production.

This degradation explains why AI content sites that get no-indexed after publishing often worked perfectly in demo environments: the production web introduced variability the demo never accounted for.
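The gap between curated and real-world inputs can be illustrated with a deliberately simple sketch. Everything here is hypothetical: `parse_order_date` stands in for any processing step tuned on clean demo data, and both input lists are invented for illustration. It is not a model of any particular platform, only a way to see how a success rate measured on curated inputs can overstate what production will deliver.

```python
from datetime import datetime


def parse_order_date(raw):
    """Hypothetical step tuned on demo data: it only understands ISO dates."""
    try:
        return datetime.strptime(raw, "%Y-%m-%d").date()
    except ValueError:
        # Anything outside the demo format is treated as a failure.
        return None


def success_rate(inputs):
    """Fraction of inputs the step handled successfully."""
    parsed = [parse_order_date(value) for value in inputs]
    return sum(p is not None for p in parsed) / len(inputs)


# Curated demo inputs: every value follows the expected format.
demo_inputs = ["2024-01-15", "2024-02-01", "2024-03-30", "2024-04-12"]

# Invented production inputs: mixed formats, a blank field, legacy exports.
production_inputs = [
    "2024-01-15",
    "15/01/2024",
    "Jan 15, 2024",
    "2024/02/01",
    "2024-03-30",
    "",
]

demo_rate = success_rate(demo_inputs)
production_rate = success_rate(production_inputs)

print(f"demo success rate:       {demo_rate:.0%}")
print(f"production success rate: {production_rate:.0%}")
```

The step itself never changes between the two runs. Only the input distribution does, which is why a demo can be technically accurate and still a poor predictor of production behavior.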

Narrow Use Case Framing

Demos often illustrate a narrow slice of functionality. They focus on specific, optimized workflows. Once deployed, users frequently attempt broader or adjacent use cases.

Performance may remain stable within the original frame but weaken outside it. The resulting gap can feel like an overstatement, even when the original demonstration was technically accurate within its constraints.

Integration and Workflow Friction

Enterprise environments introduce integration complexity: data pipelines, compliance requirements, internal approval processes, and cross-system dependencies. These layers are rarely visible in promotional material.

An AI platform may perform adequately in isolation yet encounter latency, governance, or compatibility issues once embedded into organizational systems. The perception of overselling grows when earlier narratives implicitly minimized integration cost.

These compatibility issues are precisely the structural constraints we mapped in AI Website Builder Risks—platforms control the demo, but organizations inherit the integration friction.

Narrative Inflation and Competitive Signaling

Feature Acceleration Dynamics

In competitive markets, platforms continuously add features to maintain visibility. Roadmaps become part of marketing. Announced capabilities may still be stabilizing internally while being publicly positioned as core functionality.

The cadence of feature announcements can outpace validation cycles. As a result, platforms appear more mature externally than their operational depth would suggest.

This appearance of maturity without depth is what vendors don’t tell you about AI automation: they announce what’s possible, not what’s stable.

Wrapper Ecosystems and API Arbitrage

A growing segment of AI products functions as an orchestration layer over foundational models. These “wrapper” systems may add interface improvements, domain-specific tuning, or workflow integration.

While such additions can be valuable, marketing language sometimes implies proprietary intelligence where the underlying engine is externally sourced. When users later discover model dependency or capability limits, the perceived difference between platform and foundation may shrink. This can reinforce impressions of inflation.

This perceived shrinkage is a key reason why the distinction between AI writing and content systems matters: wrappers may generate text, but the underlying system constraints remain unchanged.

Black-Box Advantage and Transparency Limits

Opacity as Competitive Positioning

Advanced AI systems often operate as black boxes. Full explainability may be technically difficult or strategically undesirable. Limited transparency can function as a competitive moat, preventing easy replication.

However, opacity also reduces the user’s ability to diagnose failure modes. When outcomes fluctuate without clear traceability, confidence can erode. What might be a predictable probabilistic limitation is instead interpreted as inconsistency.

Without transparency, site owners can’t identify whether problems stem from content, infrastructure, or platform behavior—which is why our question framework focuses on what you can actually verify.

Risk Distribution and Responsibility Ambiguity

When AI outputs influence operational decisions, questions of accountability arise. If the reasoning pathway is unclear, risk shifts to the adopting organization.

Platforms may emphasize accuracy metrics without detailing variance ranges, boundary conditions, or known instability zones. In such cases, overselling perception can emerge not from false claims, but from incomplete articulation of constraints.
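One way to see what a single accuracy figure leaves out is to break it down by input segment. The sketch below is illustrative only: the segment names and accuracy values are invented, and a real evaluation would use the buyer's own labeled samples.

```python
from statistics import mean, pstdev

# Hypothetical per-segment accuracy; the names and numbers are invented.
segment_accuracy = {
    "clean_english_text": 0.96,
    "scanned_pdfs": 0.71,
    "mixed_language_tickets": 0.80,
    "legacy_crm_exports": 0.85,
}

values = list(segment_accuracy.values())

headline = mean(values)   # the single figure a brochure might quote
spread = pstdev(values)   # how far segments sit from that figure
worst_segment = min(segment_accuracy, key=segment_accuracy.get)
worst_value = segment_accuracy[worst_segment]

print(f"headline accuracy: {headline:.2f}")
print(f"spread (std dev):  {spread:.2f}")
print(f"weakest segment:   {worst_segment} at {worst_value:.2f}")
```

A headline number is not false in this picture. It is simply silent about the weakest segment, which may be the one the adopting organization depends on most.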

ROI Deferral and Expectation Compression

Data Preparation and Training Lag

AI deployment frequently requires data alignment, fine-tuning, validation cycles, and user calibration. These processes can be slower than anticipated.

Marketing materials often emphasize end-state efficiency. The transitional phase—during which performance may be inconsistent—receives less attention. As immediate return fails to materialize, confidence can weaken.

This transitional inconsistency is structurally similar to the patterns we observed in why automated content doesn’t compound—value appears deferred because the system isn’t yet aligned with its environment.
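The effect of a ramp-up period on return can be sketched with simple arithmetic. The function and all figures below are hypothetical assumptions chosen for illustration: a one-time setup cost, a fixed monthly cost, and a saving that grows linearly until it reaches the advertised end state.

```python
def months_to_break_even(setup_cost, monthly_cost, end_state_saving,
                         ramp_months, horizon=60):
    """Return the first month in which cumulative net value is non-negative.

    Savings ramp linearly up to end_state_saving over ramp_months.
    A ramp_months of 0 means the end state is reached immediately.
    Returns None if break-even is not reached within the horizon.
    """
    cumulative = -setup_cost
    for month in range(1, horizon + 1):
        if ramp_months > 0:
            ramp = min(1.0, month / ramp_months)
        else:
            ramp = 1.0
        cumulative += end_state_saving * ramp - monthly_cost
        if cumulative >= 0:
            return month
    return None


# Invented figures: the same platform, with and without a six-month ramp.
no_ramp = months_to_break_even(9_000, 500, 3_000, ramp_months=0)
with_ramp = months_to_break_even(9_000, 500, 3_000, ramp_months=6)

print(f"break-even if end-state savings start at once: month {no_ramp}")
print(f"break-even with a six-month ramp:              month {with_ramp}")
```

Under these made-up numbers, break-even moves from month 4 to month 7. Nothing about the end-state claim changed, yet a buyer who expected the first timeline will experience the second as underdelivery.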

Workflow Disruption and Adoption Friction

AI systems rarely operate independently of human processes. Adoption involves behavioral change, retraining, and adjustment of oversight structures.

If these adaptation costs were underestimated in messaging, perceived overselling increases. The platform may function as designed, yet the organizational system surrounding it may not yet be optimized to extract value.

Metric Ambiguity

Measuring AI performance is not always straightforward. Improvements may be probabilistic, distributed, or context-dependent.

When benefits cannot be easily isolated from other operational variables, ROI may appear deferred or abstract. In high-expectation environments, delayed measurability can be interpreted as an exaggerated promise.

Overselling as a System Outcome

Information Asymmetry

AI platforms typically possess deeper technical knowledge of model behavior than buyers. This asymmetry can widen the interpretation gap between marketing language and operational constraints.

Even accurate statements can be interpreted expansively by audiences lacking full context. Over time, the cumulative effect resembles exaggeration.

Feedback Loop Lag

Rapid iteration cycles mean that products evolve continuously. Marketing narratives may reflect anticipated stability, while real-world usage reveals transitional instability.

When feedback loops are slower than narrative expansion, overselling becomes structurally likely. The system produces expectation faster than validation.

Competitive Signaling Under Uncertainty

In early-stage markets, no actor possesses complete certainty regarding long-term performance boundaries. Platforms signal confidence to remain competitive. Buyers interpret signals as assurance.

This dynamic does not require deception to generate overselling perceptions. It requires only accelerated signaling within uncertain technological terrain.

Understanding overselling as a system outcome helps clarify when AI website builders actually make sense—and when they’re being applied beyond their structural limits.

Conclusion

The signs that an AI platform is overselling often reflect deeper structural dynamics rather than isolated misstatements. Venture growth pressure, category ambiguity, curated demonstrations, opacity, and delayed ROI measurement interact to create expectation asymmetry.

In fast-moving innovation markets, narrative velocity frequently exceeds operational consolidation. The resulting perception gap, between what appears possible and what proves stable, is then interpreted as overselling.

Understanding this pattern at a system level clarifies that overselling is often an emergent property of incentive alignment, information asymmetry, and competitive acceleration. It is less a matter of individual intent and more a feature of how AI markets currently evolve.

Frequently Asked Questions

Is AI being overhyped?

AI is often described in terms of transformative potential, which can create elevated expectations. In early innovation cycles, optimism frequently outpaces institutional maturity. While many AI systems demonstrate meaningful capability, public narratives sometimes compress timelines for reliability and scalability. This does not invalidate the technology, but it can amplify the perception that current implementations should already match projected long-term performance.

What is overconfidence in AI?

Overconfidence in AI refers to placing excessive trust in system outputs without accounting for probabilistic error, boundary conditions, or contextual variability. Because AI systems can produce fluent and authoritative responses, users may infer reliability beyond validated domains. Overconfidence typically emerges when performance in controlled scenarios is generalized to broader or less predictable environments.

Why do many AI projects fail?

AI projects often encounter friction in data preparation, integration, governance, and organizational adaptation. Technical feasibility does not automatically translate into operational stability. Projects may stall when implementation complexity, stakeholder alignment, or measurement clarity was underestimated. Failure in this context usually reflects system misalignment rather than technological impossibility.

Are companies overselling AI?

Some companies may overstate readiness or minimize constraints, but in many cases perceived overselling arises from incentive structures. Competitive markets reward bold positioning and rapid feature signaling. When narrative expansion outpaces validation cycles, claims can appear inflated even if underlying technology has legitimate potential within defined limits.

What is AI’s biggest weakness?

A frequently observed limitation of AI systems is sensitivity to context outside trained or tuned domains. Performance can degrade when encountering edge cases, distribution shifts, or ambiguous inputs. Additionally, limited explainability in complex models can restrict diagnostic transparency. These constraints are not necessarily permanent, but they remain relevant in current implementations.


Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
