Quick Answer

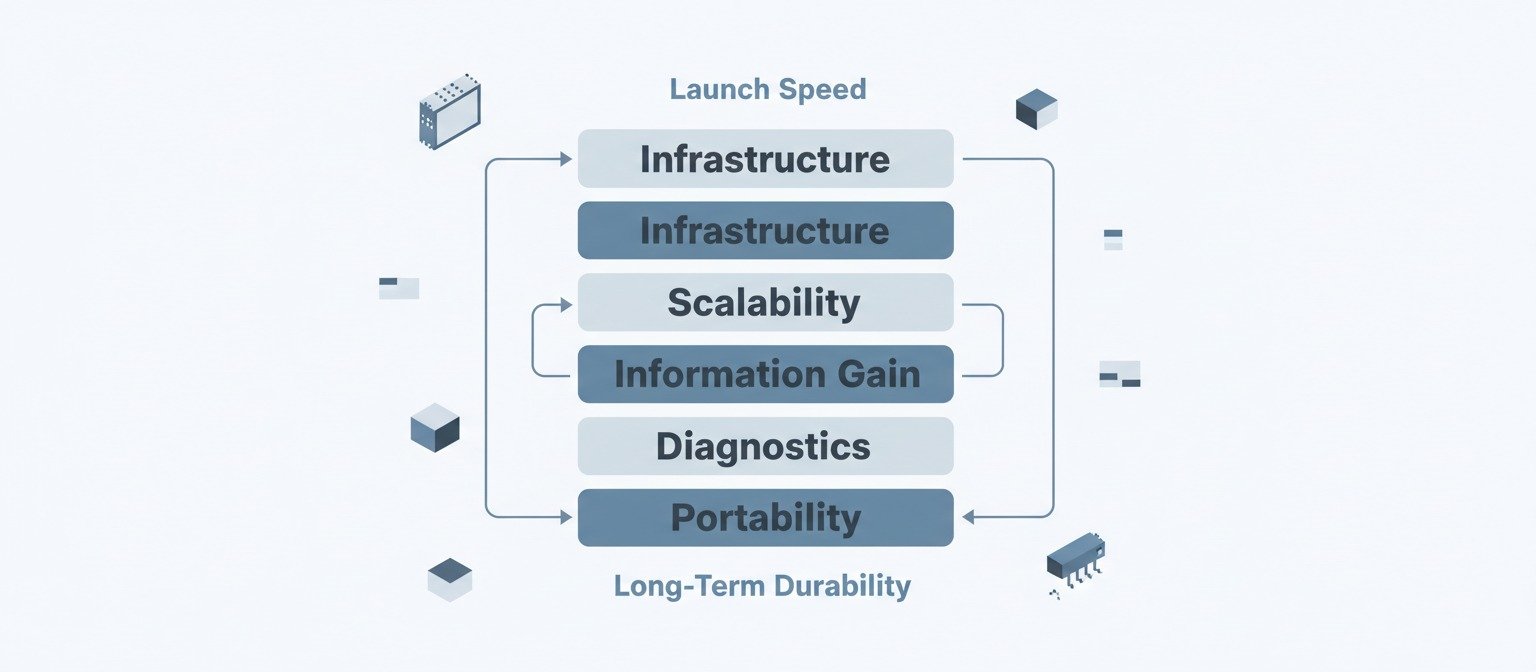

AI website builders can generate a functioning website quickly, but speed should not be the primary decision factor. Before committing, evaluate infrastructure control, scalability limits, SEO flexibility, diagnostic visibility, content ownership, and migration portability. Long-term performance typically depends more on structural adaptability than on launch velocity.

Introduction — Reframing the Speed Narrative

Most public discussions around AI website builders emphasize acceleration. Videos demonstrate websites generated in minutes. Case studies focus on reduced setup time. Promotional material frames AI as a shortcut to launch.

Speed is measurable. Structural durability is not immediately visible.

A website is not only a visual interface. It is a publishing system that interacts with search engines, browser rendering engines, hosting environments, analytics platforms, and users with varying intent. Once deployed, it enters a feedback loop of crawling, indexing, engagement measurement, and ranking volatility.

When evaluating AI website builders, the critical variable is not how quickly a homepage appears. The critical variable is what structural constraints are introduced by the platform itself.

The following sections examine that system-level dimension.

This article is part of a complete system for evaluating AI website builders. For foundational context:

- Start with whether you should trust them at all

- Understand the hidden control risks

- Learn when they actually make sense

- Discover what vendors don’t mention

- Watch for signs of overselling

Infrastructure Layer

An AI website builder is not just a design generator. It is an abstraction layer over hosting, rendering, content management, and sometimes database control.

Rendering Architecture

Rendering determines how search engines and users receive content.

Key considerations include:

- Is the site server-side rendered (SSR) or primarily client-side rendered (CSR)?

- Does meaningful content exist in the initial HTML response?

- Is heavy JavaScript hydration required to display core text?

Modern search engines can render JavaScript. However, rendering introduces latency and dependency. If content depends on script execution, indexing reliability may vary depending on implementation and crawl budget allocation.

This variability in indexing reliability is a primary factor in why AI content sites get no-index after publishing, even when the content itself is technically valid.

This does not imply CSR sites cannot rank. It suggests that rendering strategy introduces variables that should be understood before scale.
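One way to approach the second question above is to look at the raw HTML response before any script runs. The sketch below is a minimal check using only Python's standard library. It assumes you have already saved the initial response for a page as a string; the function names and the two sample pages are illustrative, not taken from any particular builder.

```python
from html.parser import HTMLParser


class InitialTextParser(HTMLParser):
    """Collects text present in the raw HTML, ignoring script and style bodies."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = data.strip()
        if text and self._skip_depth == 0:
            self.chunks.append(text)


def initial_html_text(html: str) -> str:
    """Return the text a client sees without executing any JavaScript."""
    parser = InitialTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def phrase_in_initial_html(html: str, phrase: str) -> bool:
    """Case-insensitive check that a key phrase exists before hydration."""
    return phrase.lower() in initial_html_text(html).lower()


# Hypothetical responses: one server-rendered, one that relies on a script.
ssr_page = "<html><body><h1>Pricing guide</h1><p>Plans compared.</p></body></html>"
csr_page = '<html><body><div id="root"></div><script>render("Pricing guide")</script></body></html>'

print(phrase_in_initial_html(ssr_page, "Pricing guide"))  # True
print(phrase_in_initial_html(csr_page, "Pricing guide"))  # False
```

A `False` result does not mean the page cannot be indexed. It only signals that the phrase depends on script execution, which is the variable this section describes.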

Markup Control

Generated code can range from clean semantic HTML to deeply nested proprietary structures.

Questions include:

- Can the HTML be exported in readable form?

- Are heading hierarchies editable?

- Can unnecessary wrappers be removed?

Markup control affects accessibility, performance optimization, and structured data integration.

If code cannot be meaningfully modified, optimization flexibility is constrained.
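If a builder lets you export or view the generated HTML, the heading hierarchy can be audited mechanically. The following is a rough sketch, not a full accessibility audit. The two rules it applies (exactly one `h1`, no skipped levels when descending) are common conventions assumed here for illustration.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Records heading levels (1-6) in document order."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list:
    """Return human-readable problems found in the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    collector.close()
    levels = collector.levels

    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    # Going deeper by more than one level at a time skips part of the outline.
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"h{previous} followed by h{current} skips a level")
    return issues


print(heading_issues("<h1>Guide</h1><h3>Details</h3>"))
```

If the platform reports problems like these but offers no way to edit the heading tags, that is a concrete instance of constrained optimization flexibility.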

Structured Data Access

Entity salience and contextual clarity often benefit from structured data.

Evaluate:

- Is schema automatically generated?

- Can you manually edit or extend it?

- Can multiple schema types coexist on a page?

If structured data is locked or limited, nuanced entity relationships may be harder to express.
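To make the "multiple schema types" question concrete, here is a small sketch that assembles a JSON-LD document in which an `Article` and an `FAQPage` coexist under one `@graph`. The type and property names come from the schema.org vocabulary; the URL, headline, and `build_json_ld` helper are placeholders. Whether a given builder lets you inject markup like this is exactly what you would need to verify.

```python
import json


def build_json_ld(headline: str, url: str, faqs: list) -> str:
    """Serialize an Article and an FAQPage for the same URL as one JSON-LD graph."""
    graph = [
        {
            "@type": "Article",
            "@id": f"{url}#article",
            "headline": headline,
            "url": url,
        },
        {
            "@type": "FAQPage",
            "@id": f"{url}#faq",
            "mainEntity": [
                {
                    "@type": "Question",
                    "name": question,
                    "acceptedAnswer": {"@type": "Answer", "text": answer},
                }
                for question, answer in faqs
            ],
        },
    ]
    return json.dumps({"@context": "https://schema.org", "@graph": graph}, indent=2)


# Hypothetical page data, used only to show the shape of the output.
print(
    build_json_ld(
        "Evaluating AI website builders",
        "https://example.com/ai-website-builders",
        [("Can AI website builders rank on Google?", "They can, depending on structural execution.")],
    )
)
```

The output would normally be placed inside a `<script type="application/ld+json">` element in the page head.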

Infrastructure decisions influence how precisely information is communicated to machines.

Scalability Layer

A site that works at small scale may behave differently as content volume grows.

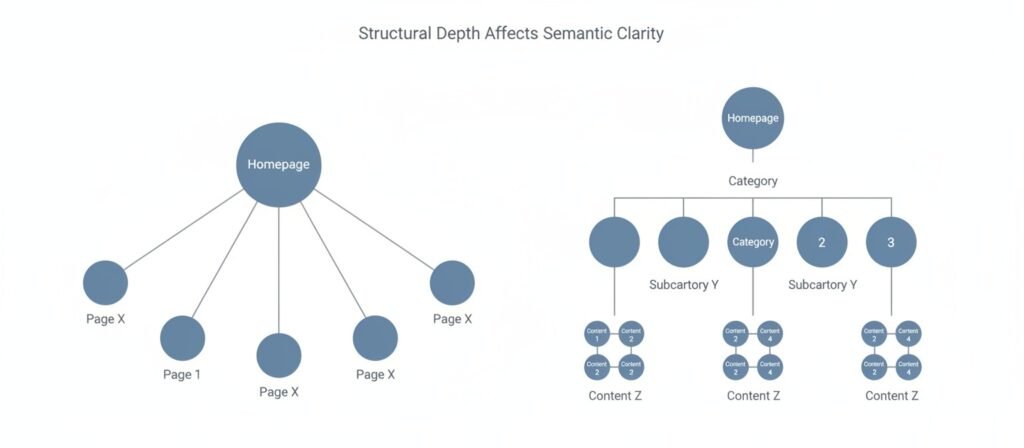

URL Architecture Flexibility

URL structure affects crawl paths, internal linking logic, and semantic hierarchy.

Questions include:

- Can you control directory depth?

- Can category structures be customized?

- Are dynamic parameters introduced automatically?

Rigid URL patterns can limit topical clustering. A flexible architecture allows for clearer information silos.
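A quick way to answer the directory depth and parameter questions is to profile a sample of the URLs a builder produces. The sketch below uses only `urllib.parse`; the `url_profile` name and the example URL are illustrative assumptions.

```python
from urllib.parse import parse_qsl, urlsplit


def url_profile(url: str) -> dict:
    """Summarize path depth and query parameter names for one URL."""
    parts = urlsplit(url)
    segments = [segment for segment in parts.path.split("/") if segment]
    params = [name for name, _ in parse_qsl(parts.query, keep_blank_values=True)]
    return {"depth": len(segments), "segments": segments, "params": params}


# Hypothetical URL of the kind a builder might generate.
print(url_profile("https://example.com/guides/seo/rendering?ref=nav&page=2"))
```

Running this across a sitemap export makes it easier to see whether depth is under your control and whether parameters appear that you never configured.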

Internal Linking Control

Internal links distribute authority and establish contextual relationships.

Consider:

- Are links automatically generated?

- Can anchor text be customized?

- Are links restricted to predefined modules?

If internal linking is template-bound or minimally editable, semantic flow may become repetitive rather than strategic.

This lack of strategic linking control directly contributes to the authority dilution patterns documented in AI content cannibalization issues, where multiple pages compete instead of consolidating signals.
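Repetitive, template-bound linking can be spotted by grouping anchor texts by link target. The sketch below is one possible approach under simple assumptions: pages are available as HTML strings, and a target that only ever receives one generic anchor text (such as "Read more") is worth a closer look. The class and function names are hypothetical.

```python
from collections import defaultdict
from html.parser import HTMLParser


class LinkCollector(HTMLParser):
    """Collects (href, anchor text) pairs from <a> elements."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._href = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._href = dict(attrs).get("href")
            self._text = []

    def handle_data(self, data):
        if self._href is not None:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._href is not None:
            # Collapse internal whitespace so equivalent anchors compare equal.
            text = " ".join("".join(self._text).split())
            self.links.append((self._href, text))
            self._href = None


def anchor_variety(pages: list) -> dict:
    """Map each link target to the sorted set of anchor texts pointing at it."""
    by_target = defaultdict(set)
    for html in pages:
        collector = LinkCollector()
        collector.feed(html)
        collector.close()
        for href, text in collector.links:
            by_target[href].add(text.lower())
    return {href: sorted(texts) for href, texts in by_target.items()}


pages = [
    '<p><a href="/pricing">Read more</a></p>',
    '<p><a href="/pricing">Read more</a></p>',
    '<p><a href="/pricing">Compare pricing tiers</a></p>',
]
print(anchor_variety(pages))
```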

Template Duplication Risk

Many AI builders rely on uniform layout systems.

Ask:

- Do all pages share the same structural skeleton?

- Can layouts vary meaningfully between content types?

- Are content blocks modular or fixed?

Structural homogeneity may simplify design consistency but can reduce contextual differentiation between topics.

When combined with automated publishing, this homogeneity explains why autoblogging destroys topical authority—volume increases, but structural distinction does not.
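Structural homogeneity can be approximated by comparing the sequence of tags on two pages while ignoring their text. This is a rough heuristic sketched with `difflib`, not a measure any search engine is known to use. The helper names and sample pages are assumptions for illustration.

```python
from difflib import SequenceMatcher
from html.parser import HTMLParser


class TagSequence(HTMLParser):
    """Records the order of opening tags, which serves as a page skeleton."""

    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        self.tags.append(tag)


def skeleton(html: str) -> list:
    parser = TagSequence()
    parser.feed(html)
    parser.close()
    return parser.tags


def skeleton_similarity(html_a: str, html_b: str) -> float:
    """Return a 0..1 ratio; 1.0 means the two tag sequences are identical."""
    return SequenceMatcher(None, skeleton(html_a), skeleton(html_b)).ratio()


# Two pages with different text but the same layout, and one with a different layout.
guide = "<article><h1>Rendering</h1><p>Intro</p><p>Body</p></article>"
review = "<article><h1>Pricing</h1><p>Summary</p><p>Verdict</p></article>"
comparison = "<article><h1>Plans</h1><table><tr><td>A</td></tr></table></article>"

print(skeleton_similarity(guide, review))
print(skeleton_similarity(guide, comparison))
```

A ratio close to 1.0 across content types that should look different (a guide versus a comparison page, for example) suggests the layouts do not vary meaningfully.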

Scalability is not merely page quantity. It is structural adaptability under expansion.

Information Gain Layer

Search systems increasingly attempt to evaluate whether a page provides incremental value beyond existing indexed material.

AI-generated text often reflects patterns already embedded in public datasets. Without additional differentiation, the output may replicate known structures rather than extend them.

This replication without differentiation is a key distinction in our analysis of AI writing vs. content systems—text can be generated, but information systems require structural intent.

Depth Capacity

Evaluate:

- Can you insert detailed tables?

- Are comparison matrices supported?

- Can diagrams and proprietary visuals be embedded without constraint?

If the platform limits layout flexibility, information density may plateau.

Multi-Intent Support

Users often reformulate queries.

A single URL may need to satisfy:

- Informational curiosity

- Risk assessment

- Commercial evaluation

- Technical troubleshooting

If content expansion breaks layout or introduces structural clutter, multi-intent coverage becomes difficult.

Information gain requires both content originality and structural accommodation.

Diagnostic Layer

Every publishing system eventually encounters variability in impressions, rankings, or engagement.

Optimization requires feedback visibility.

Analytics Depth

Questions include:

- Is raw analytics data accessible?

- Can crawl anomalies be detected?

- Are server logs available?

Without log access, diagnosing crawl inefficiencies becomes inferential rather than empirical.

This diagnostic blindness often leads site owners to discover too late that AI blogs get stuck at zero impressions, with no visibility into why crawl and indexation failed.
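Where server logs are available, crawl behavior can be measured rather than inferred. The sketch below assumes logs in the common "combined" access log format and counts requests whose user agent contains a crawler token. Both the format and the sample lines are assumptions, and matching on the user agent string alone does not verify that a request really came from a search engine.

```python
import re
from collections import Counter

# Combined log format: host ident user [time] "request" status bytes "referer" "agent"
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"'
)


def crawler_hits(lines, agent_token="Googlebot"):
    """Count (path, status) pairs for requests whose user agent contains the token."""
    hits = Counter()
    for line in lines:
        match = LOG_LINE.match(line)
        if match and agent_token in match.group("agent"):
            hits[(match.group("path"), int(match.group("status")))] += 1
    return hits


# Hypothetical log lines for illustration only.
SAMPLE_LINES = [
    '66.249.66.1 - - [10/Oct/2024:13:55:36 +0000] "GET /guides/seo HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '66.249.66.1 - - [10/Oct/2024:13:56:02 +0000] "GET /tag/seo/page/9 HTTP/1.1" 404 312 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Oct/2024:13:57:10 +0000] "GET /guides/seo HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (X11; Linux x86_64)"',
]

for (path, status), count in crawler_hits(SAMPLE_LINES).items():
    print(path, status, count)
```

Patterns such as repeated crawler requests to 404 paths or to parameterized duplicates are the kind of crawl anomaly that remains invisible without log access.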

Canonical and Indexation Control

Evaluate:

- Are canonical tags editable?

- Can indexation directives be configured?

- Are duplicate archives generated automatically?

If indexation logic is automated and opaque, structural issues may persist unnoticed.
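Even when a platform hides its indexation logic, the output is visible in the page head. The following sketch reads the canonical link and the robots meta tag from an HTML string and reports whether the page canonicalizes to itself and whether it carries `noindex`. The function name and the example URL are illustrative. Directives sent through HTTP headers (such as `X-Robots-Tag`) are not covered here.

```python
from html.parser import HTMLParser


class HeadDirectives(HTMLParser):
    """Extracts the canonical URL and robots meta directives from a page."""

    def __init__(self):
        super().__init__()
        self.canonical = None
        self.robots = []

    def handle_starttag(self, tag, attrs):
        attr = {name: (value or "") for name, value in attrs}
        if tag == "link" and "canonical" in attr.get("rel", "").lower().split():
            self.canonical = attr.get("href")
        elif tag == "meta" and attr.get("name", "").lower() == "robots":
            content = attr.get("content", "")
            self.robots = [t.strip().lower() for t in content.split(",") if t.strip()]


def indexation_report(html: str, page_url: str) -> dict:
    """Summarize what the page head tells crawlers about this URL."""
    parser = HeadDirectives()
    parser.feed(html)
    parser.close()
    return {
        "canonical": parser.canonical,
        "self_canonical": parser.canonical == page_url,
        "noindex": "noindex" in parser.robots,
    }


# Hypothetical head markup from a generated page.
head = (
    '<head><link rel="canonical" href="https://example.com/a/">'
    '<meta name="robots" content="noindex, follow"></head>'
)
print(indexation_report(head, "https://example.com/a/?ref=nav"))
```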

Diagnostic limitations increase dependency on platform defaults.

Business & Ethical Layer

Professional forums frequently reveal tension around AI-assisted development.

Value Framing

Is AI positioned as:

- A productivity tool?

- Or the core deliverable?

Clients may care primarily about outcomes. However, structural weaknesses in an AI-generated site can surface later as performance instability or integration limitations.

Competency Boundaries

If the platform generates features beyond the operator’s expertise, maintenance risk increases.

AI can scaffold. It does not replace system-level understanding.

Transparency and operational competence influence long-term client relationships.

E-Commerce Layer

E-commerce introduces dynamic logic beyond static content.

Consider:

- Payment gateway flexibility

- Inventory synchronization

- Tax calculation rules

- Custom checkout flows

Some AI builders integrate e-commerce modules. Complexity escalates when workflows require customization or integration with external systems.

Static generation logic does not always translate smoothly to dynamic transaction systems.

Assess whether the builder’s intended use case aligns with your operational requirements.

Lock-In & Exit Layer

Convenience often correlates with abstraction. Abstraction can increase dependency.

Data Portability

Evaluate:

- Can content be exported in structured database form?

- Are media assets portable?

- Do URLs remain stable after export?

Migration complexity often becomes visible only when strategic pivots occur.
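URL stability after export can be checked by comparing old and new paths and listing the redirects a migration would require. The sketch below assumes a CSV export with `old_path` and `new_path` columns. Those column names, and the sample rows, are hypothetical; real exports vary by platform.

```python
import csv
import io


def redirects_needed(export_csv: str) -> list:
    """Return (old_path, new_path) pairs for every URL that changes on migration."""
    reader = csv.DictReader(io.StringIO(export_csv))
    return [
        (row["old_path"], row["new_path"])
        for row in reader
        if row["old_path"] != row["new_path"]
    ]


# Hypothetical export: one URL survives unchanged, one moves.
EXPORT = (
    "id,old_path,new_path\n"
    "1,/blog/rendering-basics,/blog/rendering-basics\n"
    "2,/blog/schema-guide,/articles/schema-guide\n"
)

for old, new in redirects_needed(EXPORT):
    print(f"301 {old} -> {new}")
```

The longer this list is relative to the total number of pages, the more of the site's existing URL equity depends on redirects being set up correctly on the new platform.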

Proprietary Block Systems

If layouts depend on proprietary components, portability decreases.

Infrastructure lock-in reduces optionality. Optionality affects long-term resilience.

Crawl & Indexing Behavior

Search systems allocate crawl resources selectively.

Questions include:

- Are low-value archive pages auto-generated?

- Are tag pages indexable by default?

- Can pagination rules be controlled?

Excessive low-value URLs can dilute authority signals.

Crawl efficiency often correlates with structural clarity.

AI builders that auto-generate multiple structural variants may require manual oversight to prevent indexation noise.

This indexation noise is a structural manifestation of the broader pattern we documented in why automated content doesn’t compound—more pages, but no accumulated authority.
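To estimate how much of a site consists of auto-generated structural variants, URL paths can be bucketed by pattern. The patterns below (`/tag/`, date archives, `/page/N`) are assumptions modeled on common CMS conventions. A given builder may use different paths, so treat this as a starting sketch to adapt.

```python
import re
from collections import Counter

# Assumed patterns for structural URLs; the first matching pattern wins.
LOW_VALUE_PATTERNS = {
    "tag": re.compile(r"^/tag/"),
    "date_archive": re.compile(r"^/\d{4}/(\d{2}/)?$"),
    "pagination": re.compile(r"/page/\d+/?$"),
}


def classify_paths(paths) -> Counter:
    """Count paths per category, labeling anything unmatched as 'content'."""
    counts = Counter()
    for path in paths:
        label = next(
            (name for name, pattern in LOW_VALUE_PATTERNS.items() if pattern.search(path)),
            "content",
        )
        counts[label] += 1
    return counts


# Hypothetical paths, for example taken from a sitemap or crawl export.
paths = ["/guides/rendering/", "/tag/seo/", "/tag/seo/page/2/", "/2024/05/", "/blog/page/3/"]
counts = classify_paths(paths)
print(counts)
print(f"structural share: {1 - counts['content'] / len(paths):.0%}")
```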

Automation Boundaries

Automation excels at:

- Generating drafts

- Producing layout prototypes

- Filling metadata fields

Automation weakens at:

- Strategic clustering decisions

- Intent mapping across query variations

- Authority consolidation planning

- Diagnosing algorithmic volatility

Understanding where automation ends prevents overreliance.

Each of these boundaries corresponds to a failure pattern we’ve documented: strategic gaps are behind why AI websites fail after launch; intent confusion drives AI content cannibalization issues; and diagnostic limits explain why AI blogs get no traffic despite consistent publishing.

Automation reduces friction. It does not eliminate system constraints.

Decision Matrix

| Layer | Core Question | Risk if Ignored | Impact on BUILD | Impact on RANK | Impact on CONVERT |
| --- | --- | --- | --- | --- | --- |
| Infrastructure | Who controls rendering & markup? | Limited flexibility | Faster setup | Crawl variability | Indirect trust impact |
| Scalability | Can architecture evolve? | Topic fragmentation | Easy expansion | Authority dilution | Reduced coherence |
| Information Gain | Can depth increase structurally? | Redundancy | Quick publishing | Low differentiation | Lower engagement |
| Diagnostics | Can issues be traced? | Guess-based optimization | Stable surface | Ranking volatility | Performance instability |
| Lock-In | Is migration possible? | Strategic rigidity | Platform dependence | Long-term inflexibility | Business constraint |

This reframes builder selection as a systems analysis decision rather than a tool feature comparison.
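The matrix can be turned into a simple comparison aid by rating each candidate builder per layer and weighting the layers by how much they matter to your project. The sketch below is one possible scoring scheme, not a validated model. The 1 to 5 scale, the weights, and the ratings are all hypothetical inputs you would supply yourself.

```python
LAYERS = ("infrastructure", "scalability", "information_gain", "diagnostics", "lock_in")


def weighted_score(ratings: dict, weights: dict) -> float:
    """Weighted average of per-layer ratings; raises if a layer is unrated."""
    missing = [layer for layer in LAYERS if layer not in ratings]
    if missing:
        raise ValueError(f"missing ratings for: {', '.join(missing)}")
    total_weight = sum(weights[layer] for layer in LAYERS)
    return sum(ratings[layer] * weights[layer] for layer in LAYERS) / total_weight


# Hypothetical weights: this project cares most about diagnostics and exit options.
weights = {"infrastructure": 2, "scalability": 1, "information_gain": 1, "diagnostics": 2, "lock_in": 2}

# Hypothetical ratings on a 1 (poor control) to 5 (full control) scale.
builder_a = {"infrastructure": 2, "scalability": 3, "information_gain": 3, "diagnostics": 1, "lock_in": 2}
builder_b = {"infrastructure": 4, "scalability": 3, "information_gain": 4, "diagnostics": 4, "lock_in": 3}

print(weighted_score(builder_a, weights))
print(weighted_score(builder_b, weights))
```

The number itself matters less than the exercise of rating each layer explicitly, which forces the questions in the matrix to be answered before adoption.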

Conclusion — Deployment vs Durability

AI website builders compress launch timelines. They reduce technical friction and lower entry barriers.

However, structural durability depends on:

- Infrastructure transparency

- Semantic scalability

- Diagnostic visibility

- Exit flexibility

- Responsible automation boundaries

The decision should reflect the long-term behavior of the system rather than the short-term efficiency of deployment.

Acceleration is visible at launch. Structural constraints often surface later.

Evaluating both dimensions before adoption reduces the likelihood of strategic rigidity.

Frequently Asked Questions

Can AI website builders rank on Google?

They can rank if crawl accessibility, structured data configuration, and internal linking are implemented effectively. The builder itself is not inherently determinative. Structural execution and content differentiation tend to influence visibility more than generation speed.

Are AI website builders good for SEO?

Most platforms include foundational SEO settings. Advanced optimization depends on structural flexibility, including schema control, canonical configuration, and internal linking capabilities.

Can I build an e-commerce site with an AI website builder?

Basic e-commerce features are often supported. More complex workflows may require platforms with greater customization capacity.

Do I own the content created by AI website builders?

Ownership and licensing vary by provider. Reviewing platform terms and export capabilities before publishing is advisable.

Are AI website builders suitable for client work?

They can accelerate scaffolding. Ongoing optimization, maintenance, and strategic oversight remain necessary for complex or long-term projects.

External Resources

- Google Search Central: JavaScript SEO Basics
  https://developers.google.com/search/docs/crawling-indexing/javascript/javascript-seo-basics

- Google Search Central: SEO Starter Guide
  https://developers.google.com/search/docs/fundamentals/seo-starter-guide

- Google Search Central: How Search Works
  https://developers.google.com/search/docs/fundamentals/how-search-works

- B12: 9 Questions About Content Ownership to Ask AI Website Builders
  https://www.b12.io/resource-center/website-basics/9-questions-about-content-ownership-to-ask-ai-website-builders/

- AJ Oberlender: I Asked AI to Build a Website. Here’s What It Got Right (and Wrong)
  https://www.ajoberlender.com/blog/i-asked-ai-to-build-a-website

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
