AI Website Builder Risks:

Quick Answer:

AI website builders are not inherently unsafe. The primary risks usually relate to limited backend visibility, constrained control over infrastructure, and portability restrictions. Security features like SSL and payment integrations reduce surface-level risk, but long-term exposure often emerges when scaling, migrating, or diagnosing issues within opaque platform architectures.

Introduction

Most articles about AI website builder risks repeat the same list: security vulnerabilities, bloated code, generic design, vendor lock-in, and SEO problems.

The problem is not that these risks are wrong. The problem is that they are structurally incomplete.

The real risk is rarely “AI wrote bad code.”

It is a loss of control over systems you cannot fully see, audit, or migrate.

This loss of control manifests across every failure pattern we’ve documented—from why AI websites fail after launch to the structural convergence in AI content cannibalization issues.

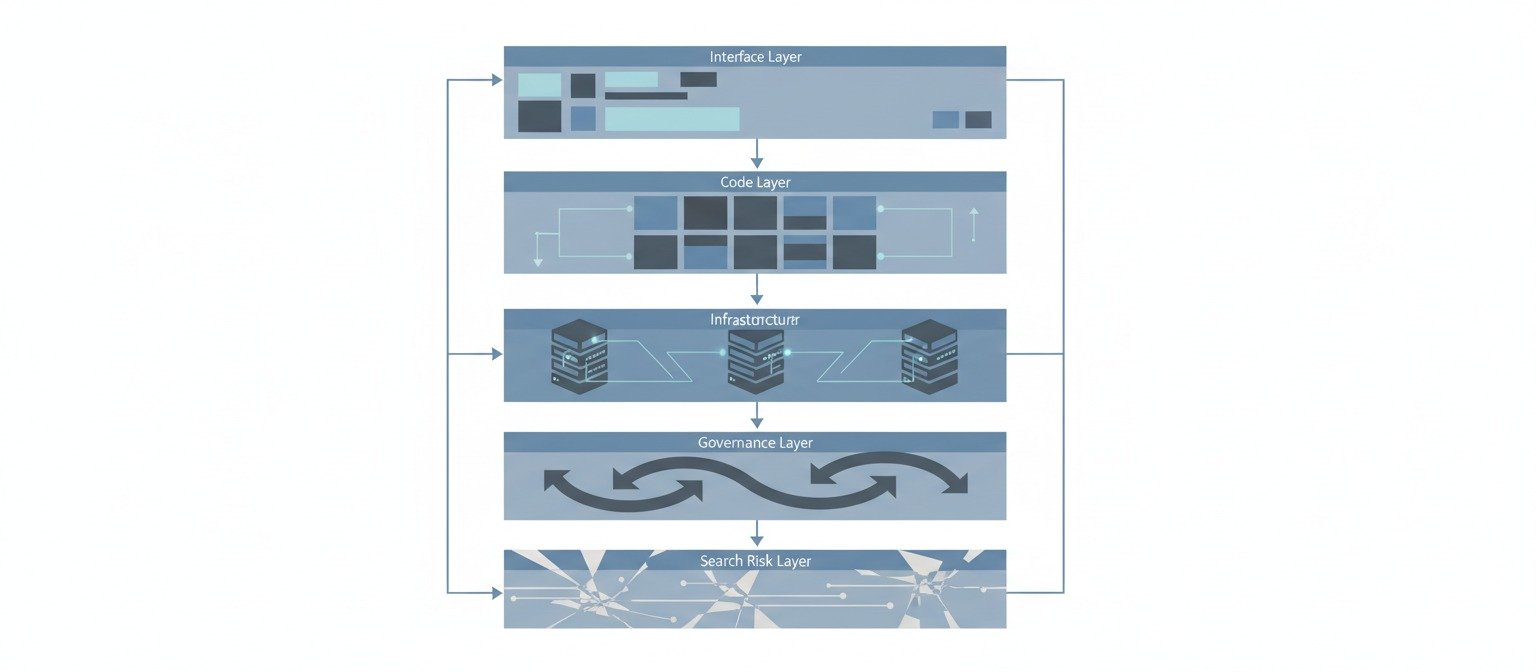

This article does not repeat generic warnings. Instead, it maps AI website builder risk across system layers: interface, code, infrastructure, governance, and search visibility. The goal is clarity—not fear.

Why Most “Risk” Articles Are Redundant

A typical SERP scan shows near-identical structures:

- “AI websites lack creativity.”

- “Security vulnerabilities may exist.”

- “Code can be bloated.”

- “SEO might suffer.”

- “You may face vendor lock-in.”

These are surface-level observations. They describe symptoms, not mechanisms.

What is usually missing:

- A classification of risk layers

- A distinction between frontend generation and backend control

- A framework for verification

- A discussion of ownership boundaries

Without those, the article becomes corpus expansion—not information gain.

This distinction between surface repetition and structural insight is central to our analysis of AI writing vs. content systems—text can expand, but systems require intentional architecture.

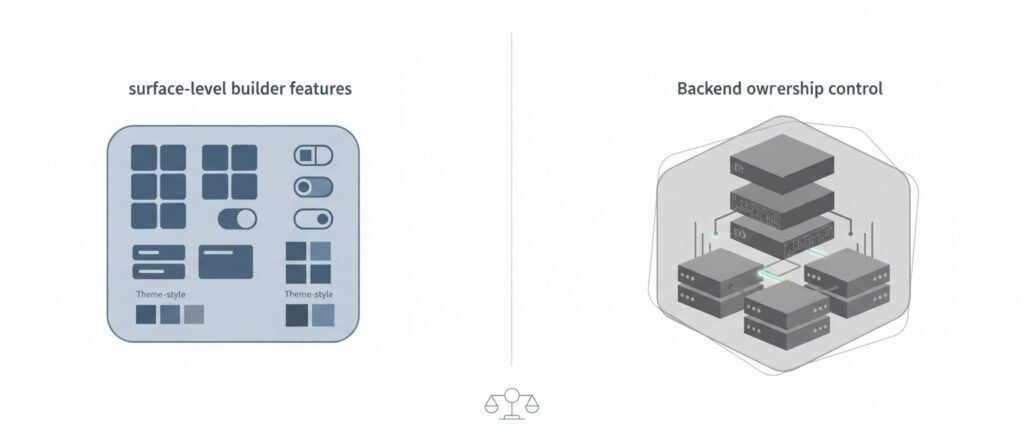

Risk Is Not a Feature Problem. It’s a Control Problem.

AI website builders combine two layers:

- Interface generation (what AI produces)

- Platform infrastructure (what the vendor controls)

Most risk discussions mix these layers. They should be separated.

Below is a layered model.

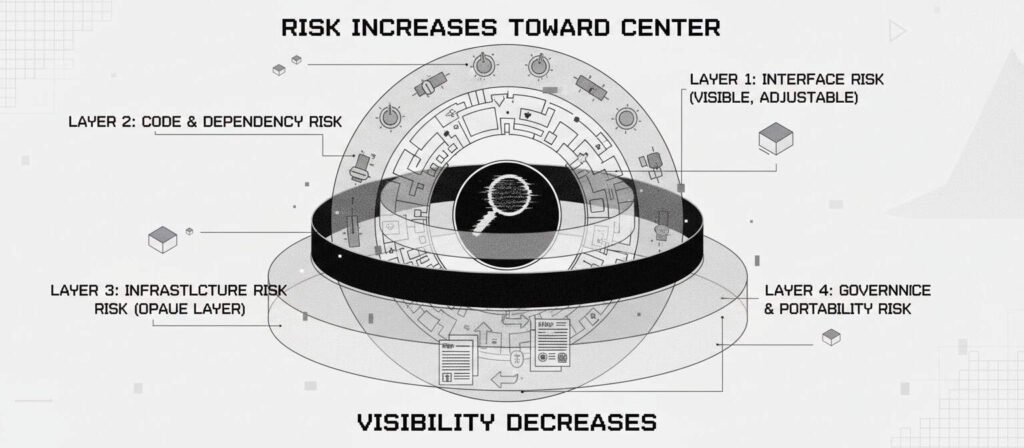

Layer 1—Interface Risk (Surface Layer)

This includes:

- Form handling

- Layout rigidity

- UX constraints

- Template repetition

These risks are visible and usually adjustable.

They rarely cause structural failure. They cause friction.

Layer 2—Code & Dependency Risk

AI-generated code may include:

- Redundant scripts

- Unnecessary dependencies

- Inefficient asset loading

- Generated abstraction layers

However, code quality alone is not the dominant risk factor.

The more relevant question:

Can you audit, edit, or replace the underlying code if needed?

If not, risk accumulates when customization increases.
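One way to start auditing generated code is to inventory the scripts a page loads. The following is a minimal sketch that uses only the Python standard library and works on HTML you have already saved from the page source. The names `ScriptAudit` and `summarize_scripts`, and the host `example.com` in the usage note, are illustrative assumptions and not part of any builder's tooling.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class ScriptAudit(HTMLParser):
    """Collects every <script> tag found in a page's HTML."""

    def __init__(self):
        super().__init__()
        self.external = []  # scripts loaded through a src attribute
        self.inline = 0     # scripts embedded directly in the page

    def handle_starttag(self, tag, attrs):
        if tag != "script":
            return
        src = dict(attrs).get("src")
        if src:
            self.external.append(src)
        else:
            self.inline += 1


def summarize_scripts(html, site_host):
    """Return counts of inline, external, third-party, and duplicated scripts."""
    audit = ScriptAudit()
    audit.feed(html)
    # A script is treated as third-party when its host differs from the site's.
    third_party = [
        src for src in audit.external
        if urlparse(src).netloc not in ("", site_host)
    ]
    return {
        "inline": audit.inline,
        "external": len(audit.external),
        "third_party": sorted(set(third_party)),
        "duplicates": len(audit.external) - len(set(audit.external)),
    }
```

Calling `summarize_scripts(page_html, "example.com")` on a saved page gives a rough picture of redundant and third-party scripts. It says nothing about server-side logic, which stays out of view.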

This accumulation of unchecked risk connects directly to our analysis of what vendors don’t tell you about AI automation—the hidden operational realities that only appear after deployment.

Layer 3—Infrastructure Risk (Opaque Layer)

This is where most meaningful risk exists.

Includes:

- Authentication handling

- Database configuration

- Logging access

- Incident response visibility

- Patch management

- Server-level security

Many AI builders abstract this layer entirely.

Security features such as SSL/TLS (HTTPS) and a CDN reduce transport-level risk, but they do not automatically provide:

- Log access

- Role-based permissions control

- Database export visibility

- Infrastructure configuration auditability

The absence of visibility is not necessarily insecurity.

But it reduces independent verification.
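Response headers are one of the few infrastructure signals an outsider can inspect. The sketch below checks a set of headers against a short list of common security headers. The list in `EXPECTED_HEADERS` is an illustrative assumption, not a complete standard, and a missing header is a prompt for a question to the vendor rather than proof of a weakness.

```python
# Illustrative selection of widely used security headers (an assumption,
# not an exhaustive or authoritative list).
EXPECTED_HEADERS = {
    "strict-transport-security": "asks browsers to use HTTPS on later visits",
    "content-security-policy": "restricts where scripts and assets load from",
    "x-content-type-options": "disables MIME-type sniffing",
    "referrer-policy": "limits how much referrer data is sent",
}


def missing_security_headers(response_headers):
    """Return the expected header names absent from a header mapping."""
    # Header names are case-insensitive, so compare in lowercase.
    present = {name.lower() for name in response_headers}
    return sorted(name for name in EXPECTED_HEADERS if name not in present)
```

The function takes any mapping of header names to values, for instance the `headers` attribute of a `urllib.request.urlopen` response. It covers only what the platform exposes publicly; logging, patching, and database configuration cannot be checked this way.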

Without verification capability, site owners often discover too late that AI blogs get stuck at zero impressions—not because content is poor, but because infrastructure-level crawl and indexation issues remain invisible and unaddressed.

Layer 4—Governance & Portability Risk

This is rarely discussed clearly.

Questions that matter:

- Can you export the full site structure?

- Can you export database data in a standard format?

- Can you transfer hosting independently?

- Are redirects configurable during migration?

- Does the platform restrict backend logic deployment?

AI builders often optimize for simplicity, not portability.

Portability becomes critical when:

- Scaling complexity increases

- Compliance requirements change

- Replatforming becomes necessary

Vendor lock-in is not immediate. It is cumulative.
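If a platform does let you configure redirects during migration, the redirect map itself is worth checking before you rely on it. Here is a minimal sketch, assuming the map is a plain dictionary of old paths to new paths; `check_redirect_map` is a hypothetical helper and does not correspond to any platform API.

```python
def check_redirect_map(redirects):
    """Flag redirect chains and loops in an old-path -> new-path mapping."""
    chains, loops = [], []
    for source in redirects:
        seen = [source]
        target = redirects[source]
        # Follow the mapping until the target is no longer itself redirected.
        while target in redirects:
            if target in seen:
                loops.append(source)  # the path eventually points back at itself
                break
            seen.append(target)
            target = redirects[target]
        else:
            if len(seen) > 1:
                chains.append(source)  # more than one hop to the final URL
    return {"chains": sorted(chains), "loops": sorted(loops)}
```

Chains cost extra hops for every visitor and crawler, and loops break the page outright. Both are easier to catch in a map you control than in redirect settings you cannot export.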

Before committing to any platform, work through our questions to ask before using AI website builders. These questions help surface portability limits before they become problems.

Layer 5—Search & Visibility Risk

SEO risk is frequently overstated.

Modern AI builders often include:

- SSL

- Mobile responsiveness

- Basic structured data

- Page speed optimizations

However, structural visibility risks may arise when:

- Crawl surfaces are rigid

- Custom schema implementation is restricted

- Internal linking flexibility is limited

- Canonical control is constrained

- Redirect logic is limited

Search risk is rarely about “AI content.”

It is about architectural constraint.
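Canonical and indexing directives are visible in the page source, so you can check them even when you cannot configure them. The sketch below reads a page's HTML and reports its canonical tag and any `noindex` directive. The names `HeadSignals` and `indexability` are illustrative assumptions.

```python
from html.parser import HTMLParser


class HeadSignals(HTMLParser):
    """Collects canonical links and robots meta directives from HTML."""

    def __init__(self):
        super().__init__()
        self.canonicals = []
        self.robots = []

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "link" and (attributes.get("rel") or "").lower() == "canonical":
            self.canonicals.append(attributes.get("href"))
        elif tag == "meta" and (attributes.get("name") or "").lower() == "robots":
            self.robots.append((attributes.get("content") or "").lower())


def indexability(html, expected_url):
    """Report whether the page declares the expected canonical and is indexable."""
    signals = HeadSignals()
    signals.feed(html)
    return {
        # Exactly one canonical tag, pointing at the URL you expect.
        "canonical_ok": signals.canonicals == [expected_url],
        "canonical_count": len(signals.canonicals),
        "noindex": any("noindex" in value for value in signals.robots),
    }
```

If a check like this shows a wrong canonical or an unexpected `noindex`, and the builder offers no way to change it, that is the architectural constraint in concrete form.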

Architectural constraint directly drives the patterns we documented in why automated content doesn’t compound—pages accumulate, but authority cannot consolidate within a constrained structure.

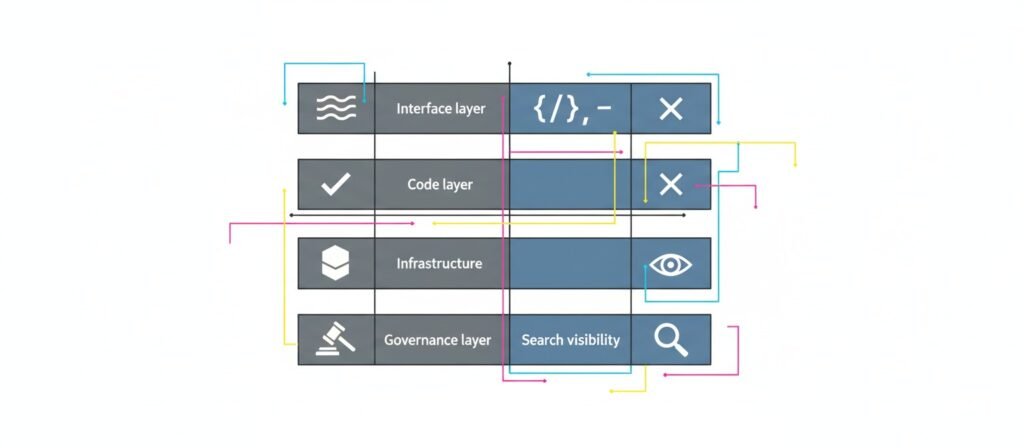

What You Can Actually Verify

Instead of asking, “Is it secure?”, a better question is:

What can I independently verify?

Below is a verification matrix.

| Risk Layer | What You Can See | What You May Not See | Trust Signal |
| --- | --- | --- | --- |
| Interface | Form validation, UX | Backend sanitization | Input validation options |
| Code | Page source | Server logic | Exportable code access |
| Infrastructure | SSL, CDN | Logging, patching | Security documentation |
| Governance | Domain access | Data portability limits | Export formats |
| Search | Sitemap, robots | Crawl budget control | Technical customization scope |

Security without verification is vendor trust. Security with verification is system confidence.
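The robots file in the Search row is one item you can verify without vendor access. The following sketch uses Python's standard `urllib.robotparser` to test which URLs a given robots.txt blocks. The function name `blocked_urls` and the sample URLs in the usage note are assumptions for illustration.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    # parse() accepts the file's lines, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]
```

For example, passing the text of your site's robots.txt together with a list such as `["https://example.com/", "https://example.com/blog/post"]` shows whether pages you expect to rank are being excluded from crawling.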

This verification approach is essential because, as we explain in “Signs an AI Platform Is Overselling,” the gap between what you can see and what you can’t is often where problems emerge.

Vendor Features vs User Ownership

Platforms often highlight:

- Automatic SSL

- CDN protection

- Secure payment gateways

- CAPTCHA integration

- Auth integrations

These features reduce certain risks.

But features do not equal ownership.

Examples:

- SSL protects transport. It does not provide database audit control.

- Payment integration secures transactions. It does not guarantee exportable order data structures.

Ownership clarity matters more than feature count.
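A quick way to test ownership in practice is to run an export and check that it contains the fields you would need elsewhere. This is a minimal sketch, assuming the platform offers a CSV order export. The column names in `REQUIRED_COLUMNS` are hypothetical and should be replaced with whatever your own records require.

```python
import csv
import io

# Hypothetical set of fields needed to rebuild order records elsewhere.
REQUIRED_COLUMNS = {"order_id", "customer_email", "total", "currency", "created_at"}


def missing_export_columns(csv_text, required=REQUIRED_COLUMNS):
    """Return the required column names absent from a CSV export's header."""
    reader = csv.DictReader(io.StringIO(csv_text))
    # Normalize header names so "Order_ID" and "order_id" compare equal.
    header = {name.strip().lower() for name in (reader.fieldnames or [])}
    return sorted(required - header)
```

An empty result means the export at least carries the expected fields. A non-empty one shows where the data you see in the dashboard is not data you can take with you.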

When AI Website Builders Are Structurally Safe

AI builders are generally well-suited for:

- Static brochure websites

- Low-complexity service pages

- Landing pages

- Early-stage MVPs

- Content-first projects without heavy backend logic

Risk increases when:

- Custom workflows are required

- Multi-role access systems are introduced

- Regulatory compliance becomes strict

- Migration becomes necessary

- High-traffic optimization is required

The issue is not AI. It is bounded complexity.

For a complete picture of appropriate use cases, see our guide on when AI website builders actually make sense. The bounded complexity described there aligns with the risk assessment above.

The Long-Term Risk: Opacity Under Change

Most failures do not appear at launch.

They appear during:

- Feature expansion

- Backend customization

- Data migration

- Incident response

- Performance debugging

- Platform transition

Opaque systems behave well under stability. They become fragile under change. This is the real structural risk layer.

Understanding these risks fundamentally reframes whether you should trust AI website builders at all. Trust, as we’ve shown, depends on control visibility—not feature lists.

Frequently Asked Questions:

Are AI website builders secure enough for business use?

They can be secure for standard use cases. Risk increases when businesses require backend control, audit visibility, advanced customization, or regulatory compliance. Security depends on infrastructure transparency and governance clarity, not just frontend features.

Do AI website builders harm SEO?

Not inherently. Many include modern performance optimizations. SEO limitations usually arise when structural customization, schema flexibility, redirect management, or crawl control are constrained.

Is vendor lock-in unavoidable?

Not always. It depends on export options, database access, hosting independence, and migration flexibility. Lock-in risk increases when platform architecture is tightly coupled and non-exportable.

Is AI-generated code inherently unsafe?

No. Code risk is often secondary to infrastructure governance and patch management. Generated code becomes problematic when it cannot be audited or replaced.

Should developers avoid AI website builders entirely?

Not necessarily. They are effective within bounded-complexity environments. The risk lies in scaling beyond their architectural intent.

Conclusion

The dominant narrative around AI website builders focuses on surface-level risk lists. The structural risk is not automation. It is control asymmetry.

When visibility, portability, and governance clarity are preserved, AI builders can operate safely within their intended boundaries.

When opacity increases under scaling, risk accumulates. Risk modeling should focus on ownership and verification, not just feature presence.

External References & Further Reading

- OWASP, Top 10 Web Application Security Risks: https://owasp.org/www-project-top-ten/

- OWASP, Secure Design Principles: https://owasp.org/www-project-secure-design-principles/

- NIST, Digital Identity Guidelines (SP 800-63): https://pages.nist.gov/800-63-3/

- Cloudflare, What is SSL/TLS?: https://www.cloudflare.com/learning/ssl/what-is-ssl/

- Stripe, Security at Stripe: https://stripe.com/docs/security

- CIO, What Is Vendor Lock-In?: https://www.cio.com/article/272368/what-is-vendor-lock-in.html

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
