Why Autoblogging Destroys Topical Authority

Quick Answer:

Autoblogging destroys topical authority when publishing speed is disconnected from intent control, reinforcement between pages, and feedback interpretation. Automation increases output, but without systems that prevent overlap, consolidate meaning, and react to performance signals, authority fragments over time instead of compounding.

Introduction:

Autoblogging can publish a lot of content very quickly. At first, this feels productive. Pages go live, indexing starts, and impressions may appear. But over time, many autoblogged sites lose topical authority. Rankings flatten, visibility becomes unstable, and the site stops being treated as a reliable source.

This article explains why autoblogging destroys topical authority at a system level. The issue is not AI itself. It is how automated publishing interacts with intent, reinforcement, and feedback across a site.

What topical authority means in practice

Topical authority is not a score on a single page. It is a site-level pattern.

In practice, a site has topical authority when search systems can reliably infer that:

- The site focuses on a narrow subject area.

- Pages support each other instead of competing.

- New content strengthens existing understanding rather than diluting it.

- The site behaves consistently over time.

Search systems such as Google evaluate these signals across many pages, not in isolation. That is why sites can publish dozens of decent articles and still fail to be treated as authoritative.

What autoblogging actually is

Autoblogging refers to automated systems that:

- Generate content using AI or scripts.

- Publish on a fixed schedule or trigger.

- Minimize human review and editorial control.

- Optimize for output consistency rather than editorial decisions.

This approach is often framed as efficiency. The problem is that efficiency in publishing is not the same as efficiency in meaning creation.

Why autoblogging destroys topical authority over time

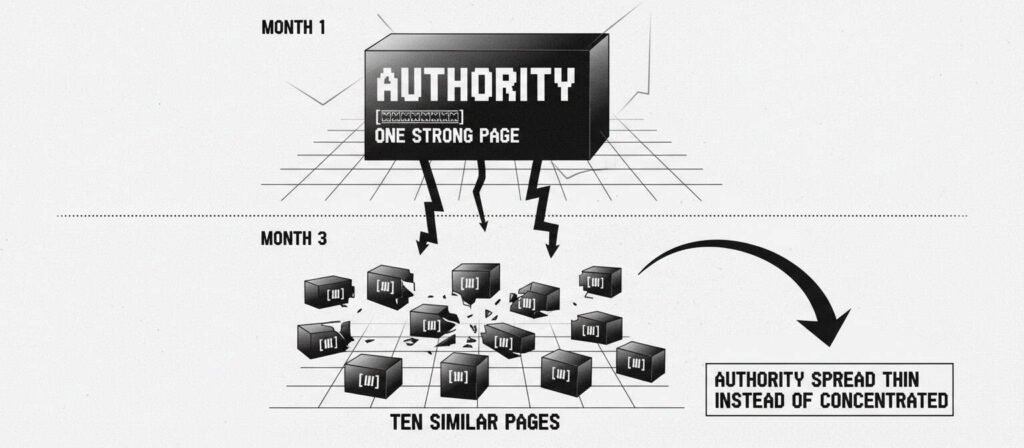

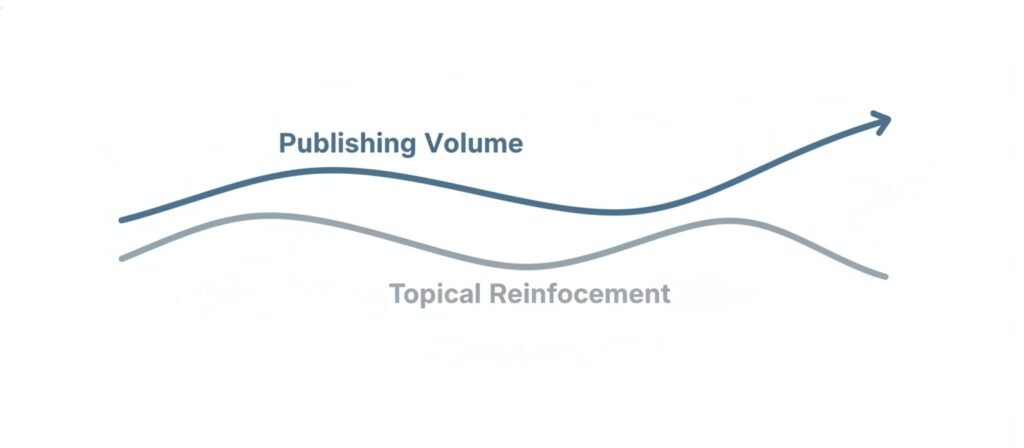

1. Volume expands faster than reinforcement

Topical authority grows when new pages reinforce a small number of core ideas repeatedly. Autoblogging expands coverage faster than reinforcement can occur.

What happens in practice:

- New topics are added continuously.

- Existing pages receive little contextual strengthening.

- The site becomes wide instead of deep.

Search systems then see a collection of topics rather than a coherent domain of expertise.
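The wide-versus-deep distinction can be made concrete with a small sketch. It assumes each published page carries a single topic label, and it uses average pages per topic as a rough stand-in for reinforcement. The function name `reinforcement_ratio` and the sample labels are hypothetical, chosen only for illustration.

```python
from collections import Counter

def reinforcement_ratio(page_topics):
    """Average number of pages per distinct topic (a rough depth proxy)."""
    counts = Counter(page_topics)
    if not counts:
        return 0.0
    return len(page_topics) / len(counts)

# Hypothetical topic labels, one per published page.
focused_site = ["crawl budget"] * 6 + ["internal linking"] * 6
wide_site = [f"topic {i}" for i in range(12)]

print(reinforcement_ratio(focused_site))  # 6.0
print(reinforcement_ratio(wide_site))     # 1.0
```

Both sites have twelve pages. The first revisits two ideas six times each, while the second touches twelve ideas once, which is the shape that continuous topic expansion tends to produce.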

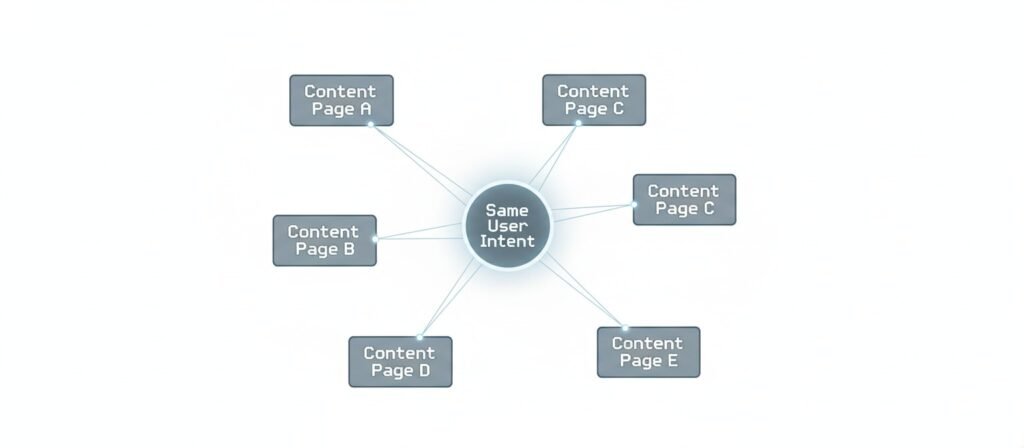

2. Intent overlap accumulates quietly

One of the most common failure patterns in autoblogging is intent overlap.

Automated systems often generate:

- Multiple pages answering the same question in slightly different wording.

- Pages that target adjacent queries with identical intent.

- Time-based variants that still satisfy the same user need.

This creates internal competition. Instead of one clear answer, the site offers many weak alternatives.
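As an illustration of how quietly overlap accumulates, the sketch below compares the target queries of pages after removing filler words and digits such as years. Token overlap is only a crude proxy for intent, and the stopword list, the 0.6 threshold, and the sample URLs are all assumptions made for this example.

```python
import re
from itertools import combinations

# Hypothetical filler words that rarely change what the searcher wants.
STOPWORDS = {"a", "an", "the", "to", "for", "in", "of", "how", "what", "is"}

def intent_tokens(query):
    """Lowercase word set with filler words and digits (e.g. years) removed."""
    words = re.findall(r"[a-z]+", query.lower())
    return {w for w in words if w not in STOPWORDS}

def jaccard(a, b):
    """Shared tokens divided by all tokens across both sets."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def overlapping_pairs(target_queries, threshold=0.6):
    """Return page pairs whose target queries look like the same intent."""
    pairs = []
    for (url_a, q_a), (url_b, q_b) in combinations(target_queries.items(), 2):
        score = jaccard(intent_tokens(q_a), intent_tokens(q_b))
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    return pairs

pages = {
    "/fix-crawl-errors": "how to fix crawl errors",
    "/fixing-crawl-errors-2024": "fix crawl errors in 2024",
    "/internal-linking-basics": "internal linking basics",
}

print(overlapping_pairs(pages))
# [('/fix-crawl-errors', '/fixing-crawl-errors-2024', 1.0)]
```

The time-based variant reduces to the same token set as the original page, so the two compete for one user need even though their titles differ.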

You can see how this leads to early stagnation in pages that are indexed but never tested in search results, a pattern discussed in "Why AI blogs get stuck at zero impressions."

3. Topical focus drifts without boundaries

Human editors naturally say no to content that does not fit a site’s scope. Autoblogging systems rarely do.

Over time:

- Topics creep outward.

- Subtopics are added without hierarchy.

- The original focus becomes harder to infer.

This drift weakens classification signals. Search systems struggle to understand what the site is meant to represent.
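Drift of this kind can be pictured as a rising share of off-scope pages in each publishing batch. The sketch below assumes a declared set of in-scope topics and one topic label per page. The `scope` set, the batch contents, and the function name are hypothetical.

```python
def off_scope_share(batches, scope):
    """For each publishing batch, the fraction of pages outside the declared scope."""
    return [
        sum(1 for topic in batch if topic not in scope) / len(batch) if batch else 0.0
        for batch in batches
    ]

# Hypothetical declared scope and three consecutive publishing batches.
scope = {"technical seo", "internal linking"}
batches = [
    ["technical seo", "internal linking", "technical seo", "internal linking"],
    ["technical seo", "email marketing", "internal linking", "technical seo"],
    ["email marketing", "social media", "technical seo", "paid ads"],
]

print(off_scope_share(batches, scope))  # [0.0, 0.25, 0.75]
```

No single batch looks alarming on its own. The trend across batches is what makes the original focus harder to infer.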

A similar pattern appears in sites that look complete but never gain stable rankings, which is explored in "Why AI Websites Fail After Launch."

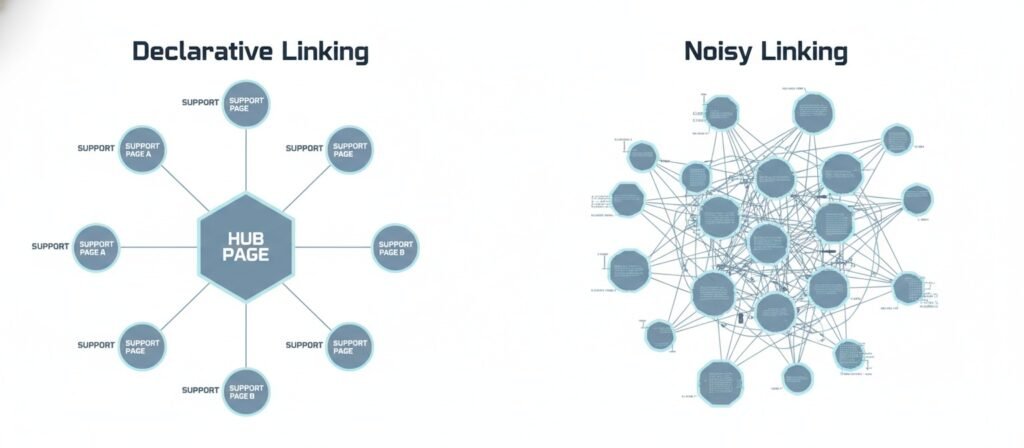

4. Internal linking becomes noisy instead of declarative

Topical authority depends on clear relationships between pages. Autoblogging often produces:

- Sparse internal links.

- Random related post blocks.

- Links that do not reflect intent hierarchy.

Instead of declaring which pages matter most, the site presents a flat web of loosely connected content. This reduces the ability of search systems to assign authority within the site.
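One way to picture declarative versus noisy linking is to check how many supporting pages actually link to the page they are meant to support. The sketch below assumes each supporting page has one declared parent. The mapping `parent_of`, the link data, and the function name `hierarchy_coverage` are hypothetical.

```python
def hierarchy_coverage(parent_of, links):
    """Share of supporting pages that link to their declared parent page."""
    if not parent_of:
        return 0.0
    linked = sum(
        1 for page, parent in parent_of.items()
        if parent in links.get(page, ())
    )
    return linked / len(parent_of)

# Hypothetical cluster: four supporting pages under one hub.
parent_of = {"/a": "/hub", "/b": "/hub", "/c": "/hub", "/d": "/hub"}

# Every supporting page points at the hub, so the hierarchy is declared.
declarative_links = {"/a": ["/hub"], "/b": ["/hub", "/a"], "/c": ["/hub"], "/d": ["/hub"]}

# Links come from a random related-posts block and mostly miss the hub.
noisy_links = {"/a": ["/c"], "/b": ["/hub"], "/c": ["/d"], "/d": []}

print(hierarchy_coverage(parent_of, declarative_links))  # 1.0
print(hierarchy_coverage(parent_of, noisy_links))        # 0.25
```

In the noisy case the pages are still connected, but the links no longer say which page matters most.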

The structural difference between writing content and designing a content system is explained in "AI writing vs. content systems."

5. Feedback signals are not interpreted

Search performance signals exist, but autoblogging systems usually treat them as reports, not inputs.

Common outcomes:

- Pages with no impressions remain published indefinitely.

- Weak topics continue to receive new content.

- Declining sections are not corrected or consolidated.

Without interpretation, automation scales mistakes. This feedback blindness is a core reason why authority decays silently, a concept expanded in "AI content feedback loop SEO."
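The difference between a report and an input can be sketched in a few lines. A report lists impressions per page. An input turns the same numbers into a decision, here a review queue of pages that have been live long enough to be tested but never were. The `PageStats` fields, the 90-day threshold, and the sample data are assumptions for illustration and do not reflect any particular analytics API.

```python
from dataclasses import dataclass

@dataclass
class PageStats:
    url: str
    days_live: int
    impressions: int

def review_queue(stats, min_days=90, min_impressions=1):
    """Pages old enough to have been tested that still show no impressions."""
    return [
        p.url for p in stats
        if p.days_live >= min_days and p.impressions < min_impressions
    ]

# Hypothetical performance data for three pages.
stats = [
    PageStats("/old-no-impressions", days_live=120, impressions=0),
    PageStats("/old-performing", days_live=200, impressions=340),
    PageStats("/new-page", days_live=10, impressions=0),
]

print(review_queue(stats))  # ['/old-no-impressions']
```

A system that only reports would show all three rows and keep publishing. A system that interprets would stop adding content around the flagged page until someone decides what to do with it.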

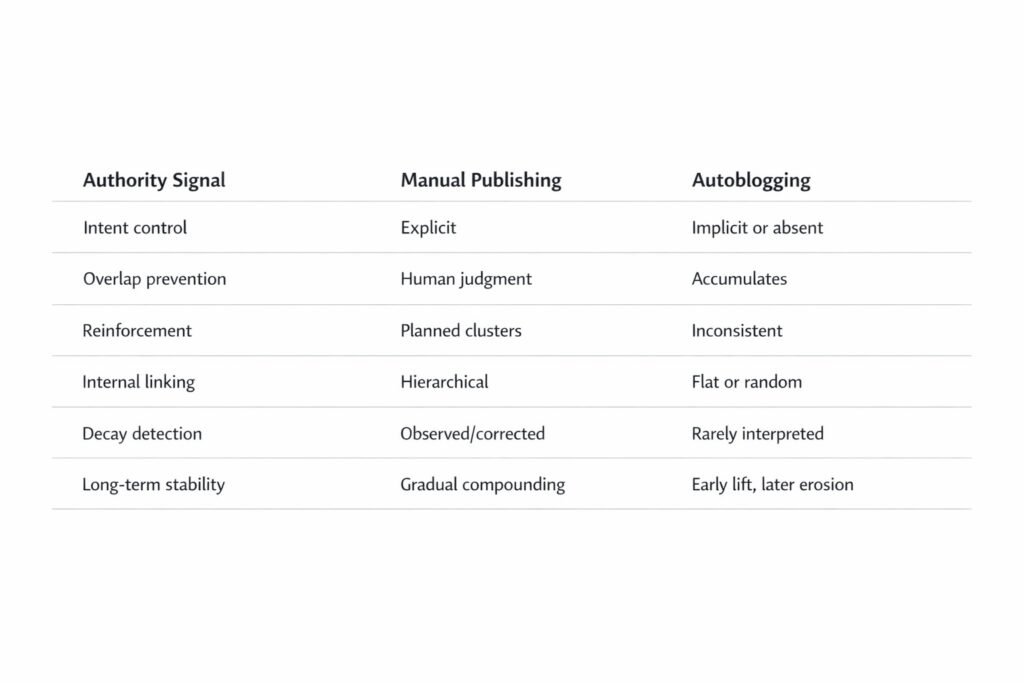

Manual publishing vs autoblogging at the authority level

| Authority signal | Manual publishing | Autoblogging |
| --- | --- | --- |
| Intent control | Explicit editorial decisions | Implicit or absent |
| Overlap prevention | Human judgment | Accumulates unless engineered |
| Reinforcement | Planned clusters | Inconsistent |
| Internal linking | Hierarchical | Often flat or random |
| Decay detection | Observed and corrected | Rarely interpreted |
| Long-term stability | Gradual compounding | Early lift, later erosion |

This table highlights why autoblogging often looks successful early and unstable later.

Why some autopilot experiments appear to work

You may have seen examples where automated sites show growth. These cases usually include constraints that are not visible on the surface:

- Strong topic boundaries.

- Aggressive overlap prevention.

- Delayed publishing.

- Manual intervention when signals degrade.

In other words, the system is no longer pure autoblogging. Authority holds in these cases because governance exists, not because automation is harmless.

What this page does not cover

This article intentionally does not cover:

- Autoblogging tools or platforms.

- How to set up AI workflows.

- Prompt engineering.

- Keyword research tactics.

- Fixes or recovery steps.

Those topics belong to implementation and decision stages. This page isolates the mechanism so it does not blur with solutions.

How this connects to related failure patterns

If your site shows related symptoms, these analyses provide deeper context:

- Pages published but never indexed: see "AI content sites getting no index after publishing."

- Ranking volatility after initial success: see "Why AI websites stop ranking."

Each of these failures is linked by the same underlying issue: automation without authority governance.

The core implication

Autoblogging does not fail because it uses AI. It fails because it treats publishing as the goal. Topical authority emerges when automation is constrained by intent, reinforcement, and feedback interpretation. Without those constraints, autoblogging systematically erodes the very signals it depends on.

Autoblogging destroys topical authority when output grows faster than understanding. Automation can accelerate publishing, but authority only compounds when systems are designed to preserve meaning, not just volume.

That is why autoblogging destroys topical authority over time.

Frequently asked questions

What does “topical authority” mean?

Topical authority describes how consistently a site demonstrates depth, focus, and reliability within a specific subject area. It reflects how well pages support each other and reinforce a clear domain of expertise.

What is the difference between domain authority and topical authority?

Domain authority is a broad reputation signal often associated with a site’s overall strength. Topical authority is narrower. It reflects how strongly a site is associated with a specific topic through content structure, intent alignment, and reinforcement.

Are blogs authoritative sources?

Blogs can be authoritative if they demonstrate consistent focus, strong internal relationships, and reliable explanations over time. A blog format alone does not determine authority. System design does.

What is a disadvantage of a blog?

A common disadvantage is uncontrolled expansion. Without strict boundaries and reinforcement, blogs often accumulate overlapping content that weakens topical clarity instead of strengthening it.

Sources & Methodology

This analysis is based on a combination of documented search system behavior and observed patterns across automated publishing setups.

Primary reference points include:

- Public documentation from Google Search Central describing site-wide content evaluation, indexing behavior, and quality systems. Reference: Creating helpful, reliable, people-first content.

- Google Search Quality Rater Guidelines, which outline how topical consistency, redundancy, and purpose clarity are assessed at scale. Reference (PDF): Search Quality Rater Guidelines.

These sources are supplemented by pattern analysis from:

- Community case discussions in technical forums where automated publishing systems are tested over time, including large-scale automation experiments shared by practitioners. Example reference: Reddit discussions on automated content systems.

- Post-launch failure reports from sites using autoblogging and AI content pipelines, where pages are indexed but show limited or no sustained visibility. Supporting context: Why pages may be indexed but not surfaced.

- Comparative review of search result pages where automated content appears widely published yet rarely tested or retained in rankings. Supporting explanation: How Google evaluates and tests search results.

The conclusions here do not rely on single experiments, tools, or anecdotal wins. They describe recurring system-level behaviors observed when publishing volume grows faster than intent control, reinforcement, and feedback interpretation.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
