How AI Content Automation Actually Works

Quick Answer

AI content automation works when it produces not only content, but a system that search engines can test and reinforce. It combines content creation, internal structure, eligibility for visibility, user response signals, and ongoing correction. Automation succeeds when these parts work together, not when pages are only published in bulk.

Introduction

Most of what you see online talks about tools, shortcuts, or repurposing content from one format to another. That does not explain how automated content actually earns visibility on Google. In this article we will focus on what search systems care about, why some automated content gets visibility, and why most does not.

We will also refer to real patterns you might already be familiar with, such as pages that never get indexed, pages that remain invisible, and pages that get no traffic despite being published.

What Most People Mean by AI Content Automation

When someone hears “AI content automation,” they often picture software doing three things:

- Generating text using a language model

- Scheduling that text for publication

- Posting it across platforms automatically

These steps are real. They are part of many marketing workflows. They help teams save time when producing drafts, batch writing, or repurposing material. Tools like Make, Zapier, or built-in schedulers in apps automate actions so you do not have to click a button each time.
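As a rough illustration of this action-only view, the sketch below chains the three steps: generate a draft, assign a publish time, and attach target platforms. Everything in it is hypothetical. `generate_draft` is a stand-in for a language model call, and `ScheduledPost` and `schedule_drafts` are names invented for this example, not part of Make, Zapier, or any scheduler.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class ScheduledPost:
    title: str
    body: str
    publish_at: datetime
    platforms: list


def generate_draft(topic: str) -> str:
    # Stand-in for a language model call (assumption: no real API is used here).
    return f"Draft article about {topic}."


def schedule_drafts(topics, start, interval_hours, platforms):
    """Generate one draft per topic and space the publish times evenly."""
    posts = []
    for i, topic in enumerate(topics):
        posts.append(
            ScheduledPost(
                title=topic,
                body=generate_draft(topic),
                publish_at=start + timedelta(hours=i * interval_hours),
                platforms=list(platforms),
            )
        )
    return posts


posts = schedule_drafts(
    ["index coverage", "internal linking"],
    start=datetime(2025, 1, 6, 9, 0),
    interval_hours=24,
    platforms=["blog", "newsletter"],
)
for post in posts:
    print(post.publish_at.isoformat(), post.title, post.platforms)
```

Note that nothing in this sketch looks at indexing, impressions, or clicks. It automates actions only.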

But this view focuses on actions. It does not include why some content suddenly gets impressions, or how search systems decide to test, rank, and reinforce pages.

When you publish content without understanding how search systems work, you get obvious problems. For example:

- Sites that never get indexed after publishing

- Blogs that stay stuck at zero impressions even when indexed

- Pages that do not gain traffic over time

We have written about each of these problems in detail. You can explore them here:

- Why AI Content Sites Getting No Index After Publishing

- Why AI Blogs Get Stuck at Zero Impressions

- Why AI Blogs Get No Traffic

- Why AI Websites Fail After Launch

Looking at these patterns helps us understand where automation tends to fail.

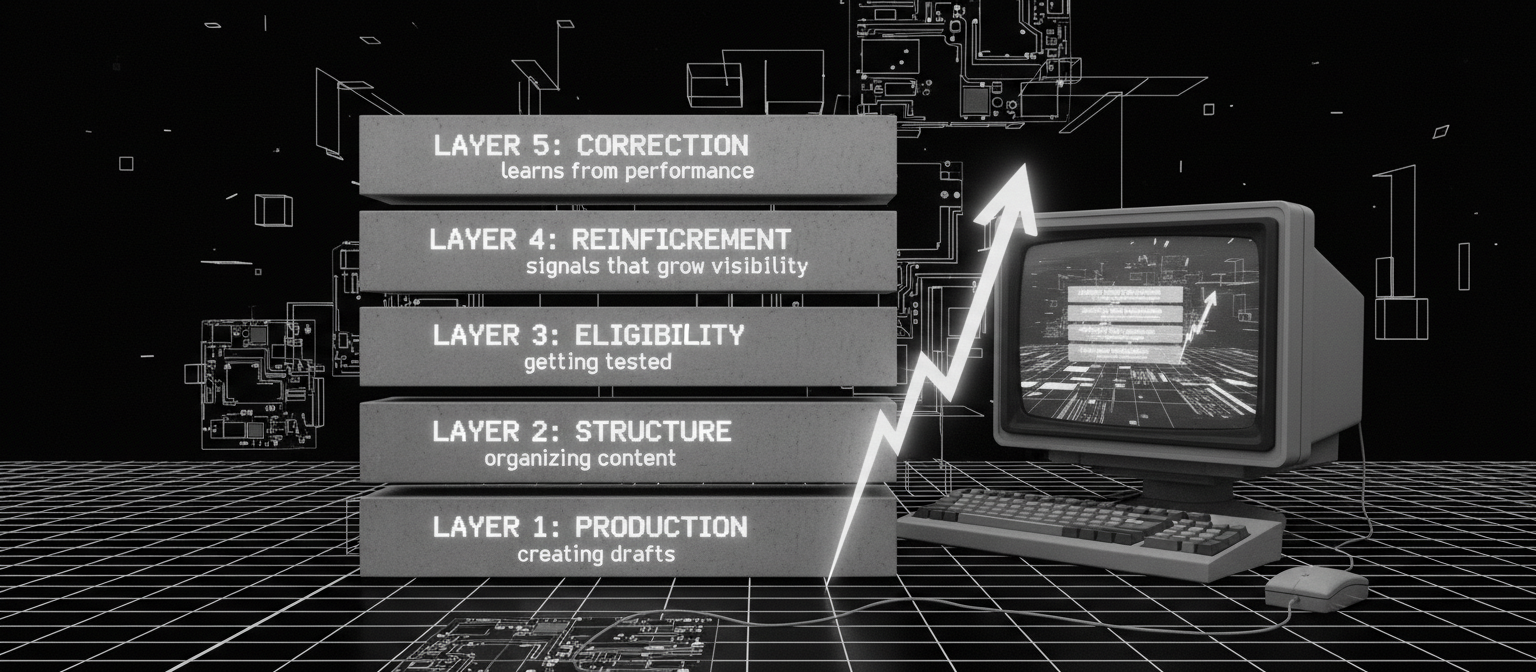

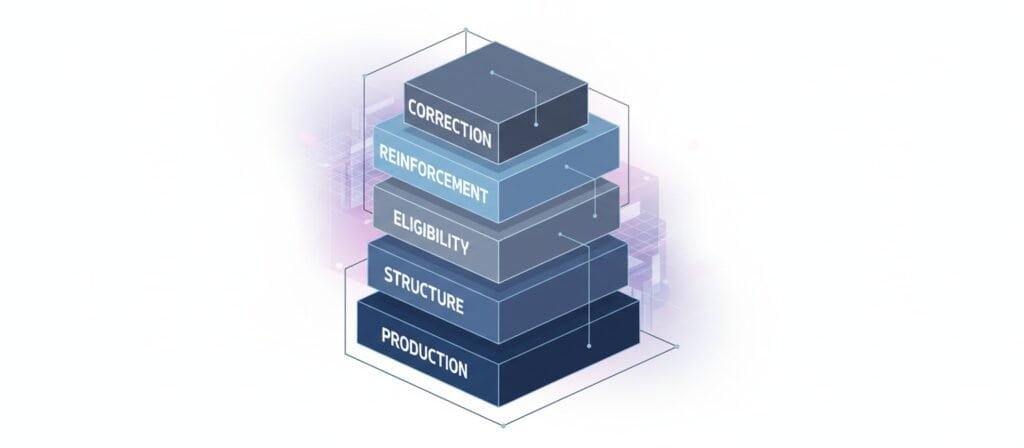

The Five Layers of AI Content Automation

To see how automation can actually work, we need to break down the process into five layers. These layers form a system. Each one matters in how content is eventually rewarded by search.

| Layer | What It Does | Why It Matters |
| --- | --- | --- |
| Production | Creating content drafts | This is where writing happens |
| Structure | Organizing content within the site | Helps search understand topic focus |
| Eligibility | Making content discoverable and testable | Search systems decide to rank pages |
| Reinforcement | Signals that keep the page in the system | Determines if content grows in visibility |
| Correction | Learning and adjusting based on performance | Helps the system improve over time |

Most automation pipelines stop at production. They generate content fast but do not guarantee that content ever gets evaluated by search systems.

Real automation involves all five layers working together.
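One way to make the five-layer model concrete is to treat it as a checklist and ask which layers a given pipeline covers. The sketch below is a minimal illustration; the `Layer` enum and `missing_layers` function are names chosen for this example, not an established library.

```python
from enum import Enum


class Layer(Enum):
    PRODUCTION = "production"
    STRUCTURE = "structure"
    ELIGIBILITY = "eligibility"
    REINFORCEMENT = "reinforcement"
    CORRECTION = "correction"


def missing_layers(covered):
    """Return the layers a pipeline does not cover, in model order."""
    covered = set(covered)
    return [layer for layer in Layer if layer not in covered]


# A pipeline that only generates and schedules text covers one layer.
production_only = {Layer.PRODUCTION}
print([layer.value for layer in missing_layers(production_only)])
# prints ['structure', 'eligibility', 'reinforcement', 'correction']
```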

Production: Creating Content

Production is the part people see first. This is where AI models generate text. A language model can write drafts, summaries, or entire articles when given a prompt that specifies tone, topic, and intent.

Production is useful. It saves time. It reduces repetitive writing tasks. But on its own it does not ensure that content will be useful to search engines or humans. Search systems do not reward content just because it exists.

So production is necessary, but not sufficient.

Structure: Organizing Content

Once content is created, it needs structure. Structure helps search engines understand:

- What the topic is

- How the page relates to other pages on the site

- Where the main signals of relevance come from

A good structural system includes:

- Clear internal links to authority pages

- Logical topic clusters

- Defined roles for each page (such as diagnostic, authority, or supporting pages)

Without structure, content behaves like loosely scattered data. It may get indexed, but it may not be given priority for testing or ranking.

This is where many automated systems fail. They produce content, but they do not assign roles or create topical organization.
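A structural check of this kind can be sketched in a few lines. The example below assumes each page record carries a role, a topic cluster, and the set of internal URLs it links to. The `Page` class, the role labels, and `audit_structure` are illustrative assumptions, not a standard schema. It flags pages that no other page links to and pages that never link to an authority page.

```python
from dataclasses import dataclass, field


@dataclass
class Page:
    url: str
    role: str      # assumed labels: "authority", "diagnostic", or "supporting"
    cluster: str   # topic cluster label
    links_to: set = field(default_factory=set)


def audit_structure(pages):
    """Flag pages with no inbound links and pages with no link to an authority page."""
    authority_urls = {p.url for p in pages if p.role == "authority"}
    linked_urls = set()
    for p in pages:
        linked_urls |= p.links_to

    non_authority = [p for p in pages if p.role != "authority"]
    orphans = [p.url for p in non_authority if p.url not in linked_urls]
    no_authority_link = [
        p.url for p in non_authority if not (p.links_to & authority_urls)
    ]
    return {"orphans": orphans, "no_authority_link": no_authority_link}


pages = [
    Page("/automation-guide", "authority", "automation", {"/zero-impressions"}),
    Page("/zero-impressions", "diagnostic", "automation", {"/automation-guide"}),
    Page("/random-post", "supporting", "automation"),
]
print(audit_structure(pages))
# prints {'orphans': ['/random-post'], 'no_authority_link': ['/random-post']}
```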

Eligibility: Getting Into the Search System

After content is structured, it needs to enter the search system’s testing phase. This step often goes unnoticed. Search engines do not immediately rank everything they index. They decide which pages to test first.

Testing depends on several signals, including:

- Topical focus of the site

- Internal links from authoritative pages

- Historical performance of similar pages

- Signals that demonstrate relevance and quality

If a page lacks these signals, it may remain in “index limbo.” It exists in the search index, but it is not given impressions or tested for visibility. This is a common problem for automation systems that publish many pages without building a strong base.

This behavior is explained in detail in our article on zero impressions: Why AI Blogs Get Stuck at Zero Impressions
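To spot pages in index limbo, it can help to sort pages into three states: not indexed, indexed with zero impressions, and indexed with impressions. The sketch below assumes a simple list of dictionaries with `indexed` and `impressions` fields, such as you might assemble by hand from a search performance export. The field names and state labels are assumptions for this example.

```python
def classify_visibility(page):
    """Place a page in one of three states based on index status and impressions."""
    if not page["indexed"]:
        return "not_indexed"
    if page["impressions"] == 0:
        return "index_limbo"
    return "being_tested"


report = [
    {"url": "/a", "indexed": False, "impressions": 0},
    {"url": "/b", "indexed": True, "impressions": 0},
    {"url": "/c", "indexed": True, "impressions": 140},
]

limbo = [p["url"] for p in report if classify_visibility(p) == "index_limbo"]
print(limbo)
# prints ['/b']
```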

Reinforcement: Signals That Keep Content Growing

Once a page is tested and gets impressions, the next question is whether it continues to grow in visibility. This depends on reinforcement signals.

Reinforcement includes:

- Click-through rate (CTR) over time

- Dwell time (how long people stay on the page)

- User interactions

- Freshness signals from updated internal links

When a page shows positive behavioral signals, search systems give it more exposure. When it fails to show these signals, visibility often stalls or declines.

Automated systems that fail to manage reinforcement produce content that does not gain traction. They may increase output, but they do not improve performance.

Our article on traffic explores this: Why AI Blogs Get No Traffic
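Click-through rate is the easiest of these signals to track yourself: clicks divided by impressions. The sketch below compares average CTR in the first and second half of a weekly series to label a page as growing, stalled, or declining. The `tolerance` threshold and the three labels are arbitrary assumptions for illustration, not values used by any search engine.

```python
def ctr(clicks, impressions):
    """Click-through rate as a fraction; 0.0 when there are no impressions."""
    return clicks / impressions if impressions else 0.0


def ctr_trend(weekly, tolerance=0.005):
    """Compare average CTR across the two halves of a list of (clicks, impressions)."""
    if len(weekly) < 2:
        return "insufficient_data"
    rates = [ctr(c, i) for c, i in weekly]
    mid = len(rates) // 2
    early = sum(rates[:mid]) / mid
    late = sum(rates[mid:]) / (len(rates) - mid)
    if late > early + tolerance:
        return "growing"
    if late < early - tolerance:
        return "declining"
    return "stalled"


weekly = [(2, 100), (3, 120), (6, 150), (9, 180)]
print(ctr_trend(weekly))
# prints growing
```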

Correction: Learning From Performance

The final layer is correction. This is part of a feedback loop. A good automated system does not just publish and forget. It:

- Reviews which pages are gaining impressions

- Identifies patterns of success or failure

- Adjusts internal links and content structure

- Rewrites or improves underperforming pages

Automation that includes this feedback step stands a better chance of producing content that performs over time.
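The feedback step can be sketched as a function that maps a page's observed performance to a next action. The thresholds (`min_impressions`, `min_ctr`), the field names, and the action labels below are hypothetical. A real system would tune them against its own data.

```python
def next_action(page, min_impressions=50, min_ctr=0.01):
    """Suggest a correction step from index status, impressions, and clicks."""
    if not page["indexed"]:
        return "adjust internal links"
    # max(..., 1) guards the division below against zero impressions.
    if page["impressions"] < max(min_impressions, 1):
        return "adjust content structure"
    if page["clicks"] / page["impressions"] < min_ctr:
        return "rewrite or improve page"
    return "keep and monitor"


pages = [
    {"url": "/a", "indexed": False, "impressions": 0, "clicks": 0},
    {"url": "/b", "indexed": True, "impressions": 12, "clicks": 0},
    {"url": "/c", "indexed": True, "impressions": 900, "clicks": 3},
    {"url": "/d", "indexed": True, "impressions": 900, "clicks": 45},
]
for page in pages:
    print(page["url"], "->", next_action(page))
```

Running a pass like this on a schedule, and feeding the results back into linking and rewriting, is what turns publishing into a loop rather than a one-way push.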

Why Tools Alone Do Not Make Automation Work

Describing AI content automation as “tool chains” is common. Articles often talk about connecting a language model to a scheduler or repurposing engine. That is part of the pipeline, but it is only the first layer.

Tools do not guarantee visibility. They help with production and scheduling, but they do not replace strategic structure, eligibility design, reinforcement signals, or correction systems.

In our experience, systems that only automate production produce output that feels busy but does not get results. Search systems are not impressed by volume without structure.

Case in Point: Learning From Common Failures

Here are real patterns we have observed:

- A site gets indexed but never appears in search results.

See: Why AI Content Sites Getting No Index After Publishing

- A blog has many posts but no impressions.

See: Why AI Blogs Get Stuck at Zero Impressions

- A site receives impressions but no clicks or traffic.

See: Why AI Blogs Get No Traffic

Each of these issues points back to a missing part of the five-layer model.

Frequently Asked Questions

How does AI automation work?

AI automation works by combining software rules with machine learning models. The system takes an input such as a topic or trigger, generates content, organizes it, and publishes it automatically. In search, automation only works well when it also builds structure and sends clear relevance signals, not just when it produces text.

What is the 30% rule in AI?

The 30% rule is a common guideline that suggests humans should review, edit, or guide at least part of AI output instead of publishing it fully unchanged. The idea is to keep quality, accuracy, and intent under control so automated content does not become generic or misleading.

How does AI-generated content work?

AI-generated content is created by language models that predict words based on patterns in data. They do not think or understand topics like humans. They generate text based on probabilities. This means the system needs clear prompts, structure, and review so the output matches the topic and purpose of the page.
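A toy example can make "predicting words based on probabilities" more tangible. The sketch below uses a tiny hand-written probability table. The words and numbers are invented for illustration, and real models learn far larger distributions from data. It shows two ways to pick the next word: always taking the most likely option, or sampling in proportion to probability.

```python
import random

# Toy next-word probabilities (invented values, not from any real model).
NEXT_WORD = {
    "content": {"automation": 0.6, "strategy": 0.3, "calendar": 0.1},
    "automation": {"works": 0.7, "fails": 0.3},
}


def most_likely_next(word):
    """Pick the highest-probability continuation (greedy choice)."""
    options = NEXT_WORD[word]
    return max(options, key=options.get)


def sample_next(word, rng):
    """Draw a continuation at random, weighted by probability."""
    options = NEXT_WORD[word]
    return rng.choices(list(options), weights=list(options.values()), k=1)[0]


print(most_likely_next("content"))
print(sample_next("content", random.Random(0)))
```

The sampled result can differ from run to run when no seed is fixed, which is one reason the same prompt can produce different drafts.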

Can I legally publish a book written by AI?

In many countries, you can publish a book written with AI, but you are responsible for the content. Laws differ by region, and copyright rules are still evolving. Most platforms treat the human who publishes the work as responsible for accuracy, originality, and legal compliance.

Is AI content safe for Google?

AI content can be safe for Google if it is useful, original in purpose, and part of a well-structured site. Problems happen when automation produces large volumes of similar pages with no clear topic focus or user value. Search systems evaluate usefulness and structure, not just how the text was created.

Summary: What Makes Automation Actually Work

To build an automation system that works for search, you need:

- Production that creates high-quality drafts

- Structure that organizes content logically across the site

- Eligibility that gets pages into the testing phase

- Reinforcement that helps content grow in visibility

- Correction that learns from performance and improves

If your automation skips any of these layers, you are more likely to see pages that fail, stagnate, or fade. Automation is not about writing fast. It is about designing a system that content can live inside. When you approach content this way, automated publishing becomes a partner in growth, not just a button to press.

References and Further Reading

- Google Search Central – Creating helpful content

- Google Search Central – Crawling and indexing overview

- Google Search – How Search Works (organizing information)

- Stanford IR Book – web crawling and indexing (academic overview)

- OpenAI GPT-3 research paper (arXiv)

- U.S. Copyright Office – Copyright and Artificial Intelligence

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
