AI website builders promise something simple. Type a prompt. Get a website. Publish in minutes. For many people, that feels like certainty. The site is live. It looks clean. It has pages, images, and buttons. From the outside, it appears complete.

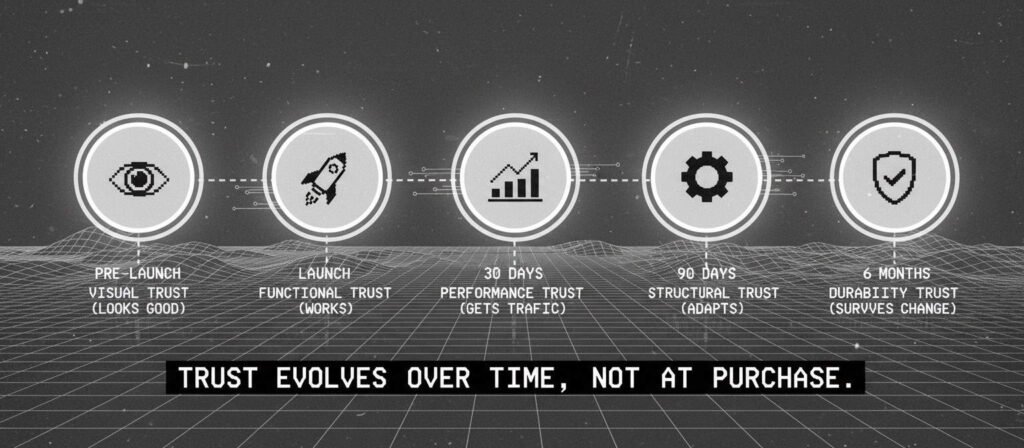

The real question is not whether AI website builders can generate a site. They clearly can. The real question is whether they can be trusted as long-term website systems.

Trust in this context is not about design taste. It is about reliability, control, and durability over time. This article examines that question from a system perspective, not from a product review angle.

Quick Answer

AI website builders are generally trustworthy for:

- Simple brochure websites

- Early-stage business presence

- Landing pages and prototypes

- Short-term campaigns

They become less predictable when:

- SEO performance is critical

- Complex integrations are required

- Portability and migration matter

- Long-term structural control is needed

Trust depends on what the website must handle over time, not on how quickly it was built.

This aligns with our analysis of why AI websites fail after launch when structural controls are missing.

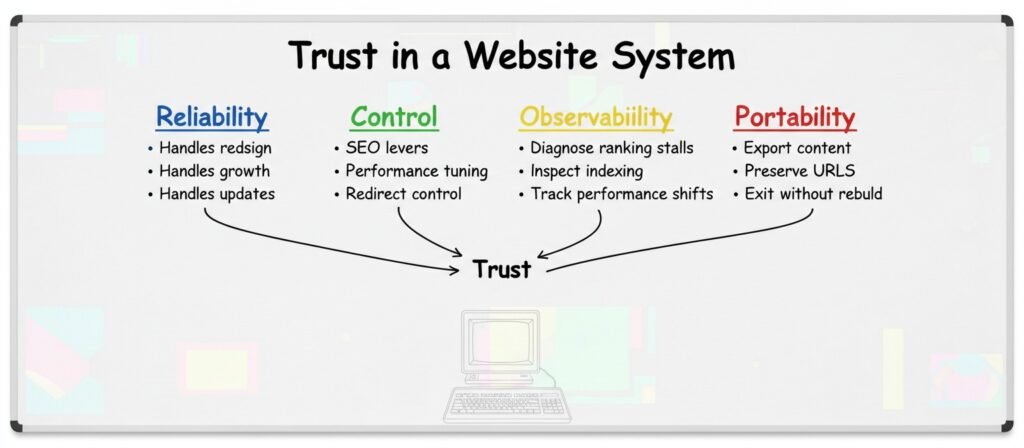

What Trust Actually Means in a Website System

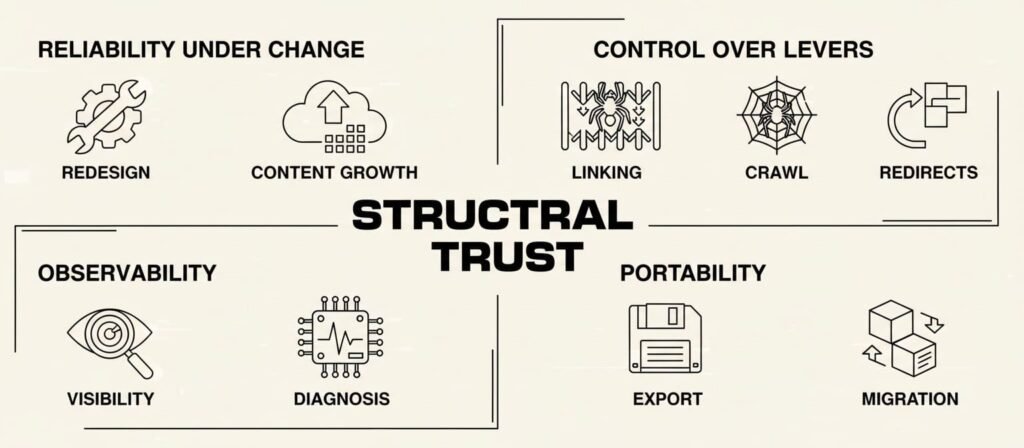

In most online discussions, trust is reduced to security or visual quality. That is incomplete. In practice, trust in a website system includes four structural properties.

1. Reliability Under Change

Websites rarely stay static. Businesses pivot. Content expands. Branding evolves.

A trustworthy system tolerates:

- Redesigns

- Content growth

- Feature additions

- SEO restructuring

If small changes create instability, the system is fragile.

2. Control Over Critical Levers

Search visibility depends on more than meta tags. It involves:

- Internal linking architecture

- Crawl behavior

- Redirect control

- Structured data

- Performance optimization

- Page speed tuning

When these controls are abstracted behind limited interfaces, flexibility narrows.

Limited control over internal linking and crawl behavior often leads to the indexing failures documented in AI content sites getting no-index after publishing.
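Internal linking architecture can be inspected even when a platform hides it. The sketch below is a minimal illustration, assuming you have already exported a page-to-links mapping by some means (the `SITE_LINKS` data and both function names are hypothetical). It computes click depth from the home page and lists pages that no internal path reaches.

```python
from collections import deque


def crawl_depths(links: dict[str, list[str]], home: str) -> dict[str, int]:
    """Breadth-first search from the home page; returns click depth per reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def orphan_pages(links: dict[str, list[str]], home: str) -> list[str]:
    """Pages that exist in the mapping but cannot be reached by following links from home."""
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(all_pages - set(crawl_depths(links, home)))


# Hypothetical export: each page mapped to the internal pages it links to.
SITE_LINKS = {
    "/": ["/services", "/blog"],
    "/services": ["/contact"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": [],
    "/blog/post-2": ["/"],  # links out, but nothing links in
    "/contact": [],
}

if __name__ == "__main__":
    print(crawl_depths(SITE_LINKS, "/"))
    print(orphan_pages(SITE_LINKS, "/"))
```

If a builder offers no way to add the missing link once an orphan is found, that is the kind of narrowed flexibility described above.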

3. Observability

When traffic stalls, questions arise:

- Are pages indexed?

- Are they crawled efficiently?

- Are internal links distributing authority?

- Is performance limiting visibility?

A trustworthy system allows inspection and diagnosis. Without visibility into behavior, improvement becomes guesswork.

This guesswork explains why many site owners discover too late that AI blogs get stuck at zero impressions despite consistent publishing.
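One of the first diagnostic checks is whether a page tells crawlers not to index it. The following is a rough sketch, not a full crawler: it assumes you have already fetched a page's HTML and response headers, and the class and function names are illustrative. It looks only for the `noindex` directive in a robots meta tag or an `X-Robots-Tag` header and ignores other directives.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> and <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        if (attr.get("name") or "").lower() in ("robots", "googlebot"):
            content = attr.get("content") or ""
            self.directives += [d.strip().lower() for d in content.split(",") if d.strip()]


def is_blocked_from_index(html: str, headers: dict[str, str]) -> bool:
    """True if the HTML or the response headers carry a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    header_value = ""
    for name, value in headers.items():
        if name.lower() == "x-robots-tag":
            header_value = value.lower()
    return "noindex" in parser.directives or "noindex" in header_value


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(is_blocked_from_index(page, {}))
```

A platform that lets you run this kind of check, and then change the result, offers observability. One that does neither leaves you guessing.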

4. Portability

Exit cost is often ignored.

If a site must migrate from one platform to another, consider:

- Can content be exported cleanly?

- Can URL structures be preserved?

- Are redirects manageable?

- Is backend logic transferable?

Portability is a hidden layer of trust.
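Exit cost can be estimated before a migration starts. As a hedged sketch, suppose you have a list of URL paths on the current platform and a list of paths on the target platform (both lists and the `manual` override mapping are hypothetical inputs). The function below reports which old URLs need a redirect and which have no destination at all.

```python
def build_redirect_map(old_urls, new_urls, manual=None):
    """Return (redirects, unresolved) for a planned migration.

    Paths that exist unchanged on the new site need no redirect.
    Changed paths are resolved through the manual override mapping.
    Anything left over is an old URL with no valid destination.
    """
    manual = manual or {}
    new_set = set(new_urls)
    redirects, unresolved = {}, []
    for old in old_urls:
        if old in new_set:
            continue  # URL preserved, nothing to do
        target = manual.get(old)
        if target in new_set:
            redirects[old] = target
        else:
            unresolved.append(old)
    return redirects, unresolved


if __name__ == "__main__":
    old = ["/", "/about-us", "/blog/launch", "/pricing"]
    new = ["/", "/about", "/blog/launch"]
    redirects, unresolved = build_redirect_map(old, new, {"/about-us": "/about"})
    print(redirects)
    print(unresolved)
```

A long `unresolved` list, or a target platform that cannot accept the `redirects` mapping, is a concrete measure of how portable the site really is.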

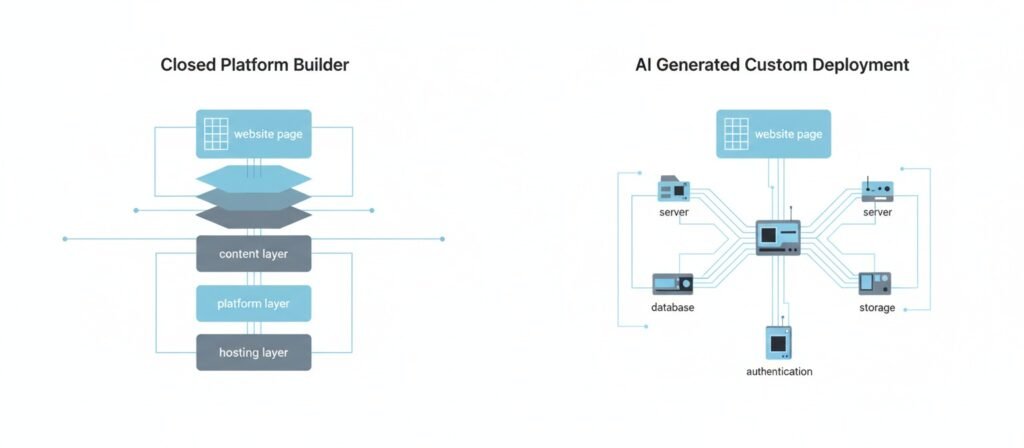

Two Types of AI Website Builders

Search results often treat all AI website builders as identical. They are not.

Category 1: Closed Platform AI Builders

Platforms such as Wix, Hostinger, and Squarespace use AI to generate layouts within controlled ecosystems.

Characteristics:

- Managed hosting

- Automatic SSL

- Built-in security updates

- Structured templates

These platforms reduce misconfiguration risk because backend infrastructure is controlled.

However, deep customization is often limited.

Category 2: AI-Generated Code and Custom Deployments

Other tools generate custom site code or app logic. Some workflows rely on cloud environments such as Amazon Web Services.

Characteristics:

- Higher flexibility

- Greater responsibility

- Deployment complexity

- Maintenance burden

Security issues in these environments often stem from configuration errors, not from AI generation alone. Publicly exposed storage buckets and unpatched servers are common examples discussed in cybersecurity communities.

The trust profile here depends heavily on technical oversight.

These two categories have very different risk profiles—explored in depth in our analysis of AI website builder risks. Understanding which category your platform falls into is the first step in evaluating structural control.

Why Trust Breaks After Launch

From observation and case analysis across builder platforms, several recurring patterns appear.

The Finished Illusion

AI builders generate visually complete pages. This creates psychological closure. The site feels done.

Yet structural layers such as

- Information hierarchy

- Internal link strategy

- Content differentiation

may remain shallow.

Appearance can mask system thinness.

Black Box SEO

Many AI website builders advertise SEO tools.

In practice, SEO performance depends on structural elements:

| Structural Layer | Visible in UI | Deeply Controllable |
| --- | --- | --- |
| Meta Tags | Yes | Often limited |
| Sitemap | Yes | Usually automated |
| Internal Links | Partially | Often constrained |
| Schema Markup | Sometimes | Limited flexibility |
| Crawl Budget | Rarely | Mostly hidden |

When ranking stalls, limited visibility into these layers restricts diagnosis.

This limited visibility makes it critical to ask the right questions before committing—questions we’ve compiled in our complete evaluation framework.
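An automated sitemap is still inspectable from the outside. The sketch below assumes the sitemap follows the standard sitemaps.org XML format and that you maintain your own list of URLs you expect to be listed (the sample data and function names are illustrative). It reports pages missing from the sitemap and pages the platform added that you did not plan for.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text: str) -> set[str]:
    """Extract every <loc> value from a urlset sitemap."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text}


def sitemap_gaps(xml_text: str, expected: list[str]) -> dict[str, list[str]]:
    """Compare the sitemap against the URLs you expect it to contain."""
    listed = sitemap_urls(xml_text)
    wanted = set(expected)
    return {
        "missing": sorted(wanted - listed),
        "unexpected": sorted(listed - wanted),
    }


# Hypothetical sitemap as a builder might generate it.
SAMPLE_SITEMAP = """
<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/services</loc></url>
  <url><loc>https://example.com/tag/misc</loc></url>
</urlset>
""".strip()

if __name__ == "__main__":
    expected = [
        "https://example.com/",
        "https://example.com/services",
        "https://example.com/pricing",
    ]
    print(sitemap_gaps(SAMPLE_SITEMAP, expected))
```

Finding a gap is the observable part. Whether the platform lets you close it is the controllable part.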

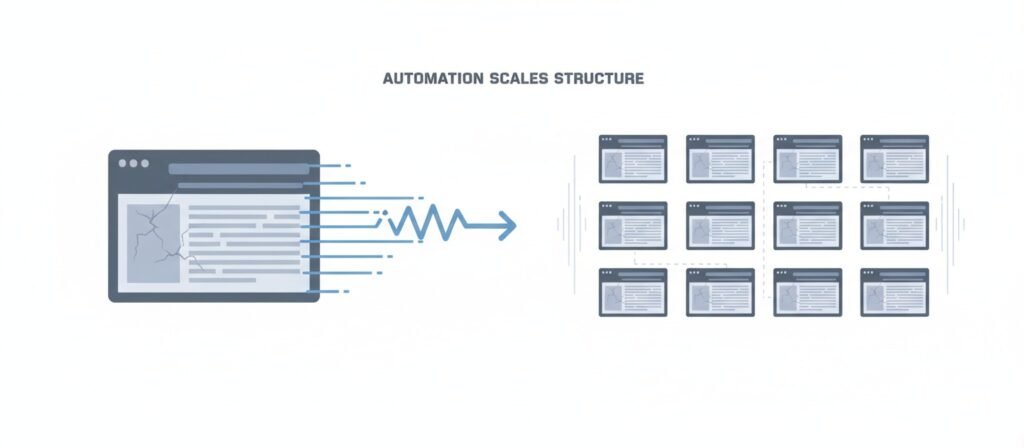

Automation Amplifies Defaults

Automation is neutral. It scales whatever foundation exists.

If a default template uses:

- Generic layout structures

- Repeated content patterns

- Similar semantic clusters

then large portfolios can converge structurally.

This pattern appears frequently in AI-generated site ecosystems. Pages exist. Authority does not consolidate.

This pattern of scale without consolidation mirrors what happens when autoblogging destroys topical authority—volume increases, but structural integrity does not.
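Structural convergence can be approximated with a simple text-overlap measure. The sketch below is one possible approach rather than an established standard for this problem: it compares pages by Jaccard similarity over three-word shingles. The threshold value and the sample pages are assumptions chosen for illustration.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Split text into overlapping word sequences of the given size."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Shared shingles divided by total distinct shingles; 0.0 when both sets are empty."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def near_duplicates(pages: dict[str, str], threshold: float = 0.4):
    """Return (url_a, url_b, similarity) for page pairs at or above the threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    results = []
    for u, v in combinations(sorted(sets), 2):
        score = jaccard(sets[u], sets[v])
        if score >= threshold:
            results.append((u, v, round(score, 2)))
    return results


if __name__ == "__main__":
    # Hypothetical page copy from a templated site.
    pages = {
        "/plumber-austin": "We offer reliable plumbing services in Austin with fast response times",
        "/plumber-dallas": "We offer reliable plumbing services in Dallas with fast response times",
        "/about": "Our founder started the company after years working as an independent contractor",
    }
    for pair in near_duplicates(pages):
        print(pair)
```

Pages that differ only by a swapped city or product name tend to score high on this measure, which is the convergence pattern in miniature.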

Workflow Comparison

Below is a simplified structural comparison.

AI Builder Workflow

Idea → Prompt → Generated Site → Publish

System-Driven Workflow

Intent Definition → Information Architecture → Content Mapping → Technical Validation → Publish → Performance Feedback → Structural Adjustment

The difference is not speed. It is governance and feedback.

Without feedback loops, systems cannot self-correct—a dynamic explored in depth in our analysis of the AI content feedback loop in SEO.

Security Considerations

Questions about AI website security often appear in forums.

Security depends on infrastructure.

Closed builders generally provide:

- HTTPS by default

- Managed certificates

- Automatic updates

Custom AI deployments require:

- Patch management

- Database security

- Access control configuration

Cybersecurity discussions commonly attribute website breaches to:

- Unpatched systems

- Misconfigured databases

- Exposed storage resources

The AI layer rarely introduces unique security flaws by itself. Risk typically emerges from deployment practices.
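Some deployment practices leave traces that can be checked from outside. The sketch below is a surface-level illustration only, assuming you have a page URL and its response headers in hand (the function name and the choice of checks are this example's own, not a complete audit). It flags a missing HTTPS scheme, missing common security headers, and a `Server` header that reveals a version number.

```python
def audit_response(url: str, headers: dict[str, str]) -> list[str]:
    """Return a list of findings for one HTTP response; an empty list means no flags raised."""
    normalized = {name.lower(): value for name, value in headers.items()}
    findings = []
    if not url.lower().startswith("https://"):
        findings.append("page served without HTTPS")
    if "strict-transport-security" not in normalized:
        findings.append("missing Strict-Transport-Security header")
    if normalized.get("x-content-type-options", "").lower() != "nosniff":
        findings.append("missing X-Content-Type-Options: nosniff")
    # A version number in the Server header makes unpatched software easier to spot.
    if any(ch.isdigit() for ch in normalized.get("server", "")):
        findings.append("Server header exposes a version string")
    return findings


if __name__ == "__main__":
    print(audit_response("http://example.com", {"Server": "nginx/1.18.0"}))
```

Passing these checks does not prove a deployment is secure. Failing them suggests that nobody is actively managing the infrastructure layer.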

When Trusting AI Website Builders Makes Sense

Trust is context-dependent.

AI builders are often appropriate for:

- Local businesses with basic needs

- Early-stage founders validating ideas

- Event landing pages

- Portfolio sites

- Budget-constrained launches

In these cases, speed and simplicity align with objectives.

When Caution Is Rational

Higher scrutiny is reasonable when:

- Organic search is the primary acquisition channel

- The business depends on structured content ecosystems

- Advanced integrations are required

- Migration flexibility matters

In these contexts, control and observability become critical.

A Practical Trust Lens

Instead of asking whether AI website builders are good or bad, ask:

- What happens if rankings stagnate?

- Can URL structures be fully controlled?

- Who manages infrastructure updates?

- What is the exit cost?

These questions clarify structural fit.

For a deeper look at when these platforms are actually appropriate—and when they’re not—see our guide on when AI website builders actually make sense. And perhaps most importantly: what are the signs that a platform is overselling its capabilities? This question often reveals whether vendors understand their own limitations.

Frequently Asked Questions

Are AI website builders good for SEO?

They provide foundational SEO settings. Long-term performance depends on:

- Internal architecture flexibility

- Structured data control

- Technical performance limits

- Content differentiation strategy

Outcomes vary by platform and implementation.

Are AI website builders secure?

Closed platforms are generally secure for standard business sites. Security responsibility increases in custom deployment environments. Infrastructure governance matters more than AI generation.

Can AI replace web developers?

AI reduces friction for simple sites. Complex systems involving databases, integrations, and scaling logic still require architectural planning. Automation assists. It does not eliminate system design.

Is AI 100 percent trustworthy?

No software system offers complete certainty. Trust improves when:

- Constraints are understood

- Responsibilities are clear

- Exit options exist

Blind reliance increases risk.

Final Perspective

AI website builders are tools. They are neither inherently unreliable nor universally sufficient. They accelerate output. They simplify infrastructure. They reduce entry barriers.

Trust depends on alignment between:

- System requirements

- Platform constraints

- Growth expectations

Speed builds pages.

Architecture sustains outcomes.

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
