Quick Answer:

What vendors don’t tell you about AI automation is that success depends less on the tool and more on system design. Automation amplifies existing structure, data quality, and decision clarity. Hidden costs, ongoing human oversight, and post-launch stagnation are common, not exceptional, outcomes.

Introduction:

AI automation is usually presented as simple, fast, and cost-effective. Most vendors show clean demos, smooth dashboards, and instant results. That picture is not exactly false, but it is incomplete.

What vendors don’t tell you about AI automation is not a secret feature or a hidden setting. It is how automation actually behaves once it leaves a demo environment and enters a real organization with messy data, unclear decisions, and changing goals.

This article looks at AI automation from a system behavior perspective. It does not review tools, compare platforms, or explain setup steps. It explains why automation often underperforms long after launch, even when the technology itself works as advertised.

This system-level view builds on our foundational analysis of how AI content automation actually works, moving from mechanics to organizational reality.

Why AI Automation Is Sold the Way It Is

Vendors are not lying when they sell AI automation. They are optimizing for what can be demonstrated and closed quickly.

Most AI automation sales focus on:

- Visual workflows that look complete

- Features that can be toggled on and off

- Speed of initial setup

- Short time to first output

- Clean example data

This makes sense from a sales perspective. A demo has to be clear, predictable, and impressive within minutes. It cannot include ambiguity, exceptions, or human disagreement.

What vendors don’t tell you about AI automation is that these demos represent the easiest possible version of the problem, not the typical one.

This sales optimization creates the conditions for platforms to oversell their capabilities—not through lies, but through selective presentation of best-case scenarios.

What AI Automation Systematically Ignores

In real environments, work is rarely clean or fully defined. Automation performs best when decisions are stable and rules are already agreed upon. Most organizations do not meet that condition.

Common realities that automation vendors do not emphasize include:

- Unclear ownership of decisions once automation runs

- Edge cases that do not fit predefined logic

- Conflicting incentives between teams

- Changes in goals after deployment

- Lack of feedback loops to correct output quality

These are not technical bugs. They are structural conditions. AI automation does not resolve ambiguity. It amplifies whatever structure already exists.

This amplification effect explains why AI content sites getting no-index after publishing often have technically functional setups—the automation simply scaled the structural gaps that were already present.

The Cost That Does Not Appear on Pricing Pages

AI automation is often marketed as low cost or cost saving. The software subscription may be reasonable. The real cost usually appears elsewhere.

Typical cost shifts include:

- Data preparation and cleanup

- Workflow redesign before automation works

- Ongoing monitoring of outputs

- Manual correction of edge cases

- Time spent explaining automation behavior to stakeholders

What vendors don’t tell you about AI automation is that cost does not disappear. It moves from software to operations. Automation changes the type of work people do. It does not remove work entirely.
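The cost shift described above can be made concrete with some simple arithmetic. The sketch below is illustrative only: the `MonthlyCost` class, its field names, and every number in the example are hypothetical assumptions, not measurements from any real deployment. It shows how a subscription fee can end up as a small share of the total once the operational hours from the list above are priced in.

```python
from dataclasses import dataclass


@dataclass
class MonthlyCost:
    """Hypothetical monthly cost model for one automated workflow."""
    subscription: float             # software fee shown on the pricing page
    hours_data_cleanup: float       # data preparation and cleanup
    hours_monitoring: float         # ongoing monitoring of outputs
    hours_manual_fixes: float       # manual correction of edge cases
    hours_stakeholder_comms: float  # explaining automation behavior
    hourly_rate: float              # assumed blended cost of one staff hour

    def operational(self) -> float:
        """Cost of the human work that surrounds the automation."""
        hours = (
            self.hours_data_cleanup
            + self.hours_monitoring
            + self.hours_manual_fixes
            + self.hours_stakeholder_comms
        )
        return hours * self.hourly_rate

    def total(self) -> float:
        return self.subscription + self.operational()

    def software_share(self) -> float:
        """Fraction of total cost that the pricing page actually shows."""
        return self.subscription / self.total()


# All figures below are made up for illustration.
example = MonthlyCost(
    subscription=200.0,
    hours_data_cleanup=10,
    hours_monitoring=8,
    hours_manual_fixes=12,
    hours_stakeholder_comms=5,
    hourly_rate=40.0,
)

print(f"Operational cost: {example.operational():.2f}")
print(f"Total cost:       {example.total():.2f}")
print(f"Software share:   {example.software_share():.1%}")
```

With these assumed inputs, the subscription is one eighth of the monthly total. The exact ratio will differ in every organization; the point is that the operational line items need to be estimated at all.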

These hidden costs are exactly why we developed a comprehensive question framework for evaluating AI builders before commitment.

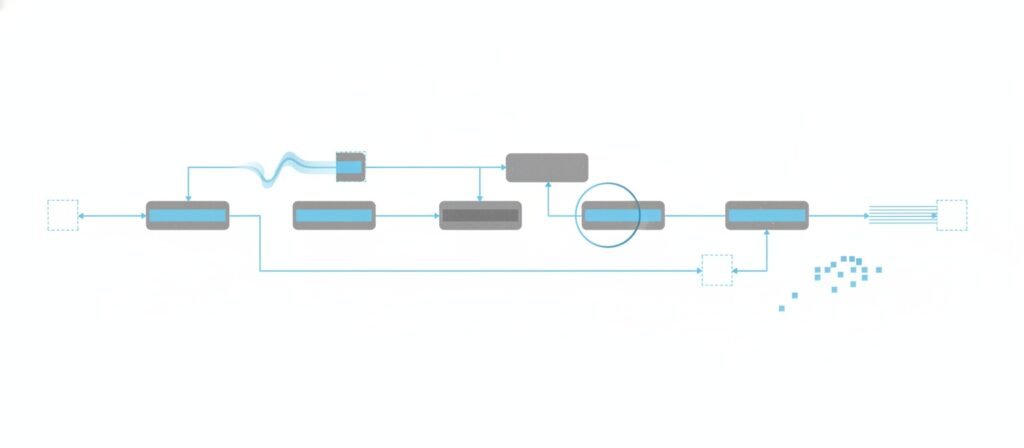

The Post-Launch Phase That Gets Very Little Attention

Most marketing stops at launch. In practice, launch is where automation problems begin to surface.

Common post-launch patterns include:

- Automation continues running without clear improvement

- Metrics flatten instead of compounding

- Teams trust outputs less over time

- Manual intervention slowly increases

- No clear signal shows when performance degrades

These failures are usually quiet. Nothing breaks. Nothing crashes. The system simply stops delivering meaningful value. This is why many automation projects are described as “working but not helping.”
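One way to give quiet decay a visible signal is to track how often people have to correct the automation's output. The sketch below is a minimal illustration, assuming a team logs, for each review period, how many outputs were produced and how many needed manual correction. The `CorrectionRateMonitor` class, the baseline rate, the window size, and the tolerance are all hypothetical choices, not a recommended standard.

```python
from collections import deque
from statistics import mean


class CorrectionRateMonitor:
    """Flags degradation when the recent manual-correction rate
    drifts above an agreed baseline by more than a tolerance."""

    def __init__(self, baseline_rate: float, window: int = 4,
                 tolerance: float = 0.05) -> None:
        self.baseline_rate = baseline_rate   # rate accepted at launch
        self.tolerance = tolerance           # allowed drift before flagging
        self.recent = deque(maxlen=window)   # keeps only the last `window` periods

    def record(self, outputs: int, corrected: int) -> None:
        """Store the correction rate for one review period."""
        if outputs <= 0:
            raise ValueError("outputs must be positive")
        self.recent.append(corrected / outputs)

    def degraded(self) -> bool:
        """True only when a full window of data exceeds the threshold."""
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough history to judge yet
        return mean(self.recent) > self.baseline_rate + self.tolerance


# Hypothetical data: corrections creep upward over three periods.
monitor = CorrectionRateMonitor(baseline_rate=0.10, window=3)
for outputs, corrected in [(100, 12), (100, 18), (100, 24)]:
    monitor.record(outputs, corrected)

if monitor.degraded():
    print("Manual correction rate is drifting above baseline; review the workflow.")
```

A check like this does not explain why performance is degrading. It only turns "manual intervention slowly increases" into something a team can see and own, which is the missing signal described above.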

This quiet decay is precisely what we observed when analyzing why AI blogs get stuck at zero impressions—the infrastructure works, but the outcomes never materialize.

Why Generic Automation Produces Generic Results

AI systems are trained to recognize patterns. When applied broadly, they tend to produce average outcomes.

This shows up as:

- Content that sounds correct but lacks specificity

- Decisions that follow common cases but miss context

- Outputs that look professional but do not differentiate

Automation scales patterns. It does not scale understanding. If the underlying process is generic, the output will be generic as well. This is especially visible in AI content automation, customer support automation, and sales workflows.

When this happens across a content portfolio, the result is often the structural convergence we documented in AI content cannibalization issues—many pages that look different but serve identical intent.

Vendor Promises Versus System Reality

| Vendor Framing | System Reality |
| --- | --- |
| End-to-end automation | Fragmented flows with manual patches |
| Low upfront cost | Ongoing operational overhead |
| Works out of the box | Works best on curated examples |
| Scales easily | Scales noise faster than insight |
| Reduces workload | Shifts workload type |

This gap between promise and reality directly impacts whether you should trust AI website builders for long-term projects. Trust requires alignment with system reality, not marketing narratives.

Why This Is a System Design Issue, Not a Tool Issue

Better tools will continue to appear. Models will improve. Interfaces will get cleaner. What vendors don’t tell you about AI automation is that better technology does not fix unclear systems.

Automation multiplies:

- Existing process quality

- Existing decision clarity

- Existing organizational alignment

If those are weak, automation exposes the weakness faster. This is why the same automation failures repeat across different vendors, platforms, and industries.

Understanding this distinction helps clarify when AI website builders actually make sense, and when they’re being applied to problems they can’t solve.

Who This Article Is Not For

This article is not for readers looking for:

- AI automation tools or platforms

- Setup guides or workflows

- Pricing comparisons

- Buying recommendations

Those topics belong elsewhere. This article focuses on system behavior, not configuration.

Frequently Asked Questions:

What are the problems with AI automation?

The most common problems with AI automation are not technical errors. They include unclear decision ownership, poor data quality, lack of feedback loops, and automation being applied to unstable processes.

What is the 30 percent rule in AI?

The 30 percent rule is often used informally to suggest that AI handles repetitive work well, supports assisted decision making in some areas, and struggles with judgment, context, and accountability. The exact percentage varies, but the principle highlights limits rather than guarantees.

What cannot be automated by AI?

AI cannot reliably automate responsibility, intent, ethical judgment, or complex trade-offs between competing goals. These require human ownership even when AI assists.

What are the five biggest AI fails?

Common AI failures include automating unclear processes, trusting outputs without verification, ignoring post-launch monitoring, scaling before validating impact, and assuming tools fix organizational issues.

Closing Perspective

What vendors don’t tell you about AI automation is not hidden out of malice. It goes unsaid because it is difficult to sell.

Automation works best when systems are already designed to handle ambiguity, feedback, and change. Without that foundation, AI automation often delivers correct outputs that do not produce meaningful outcomes.

The operational costs and design dependencies described here are specific examples of the broader control problems we’ve mapped across AI builder ecosystems.

The most expensive part of AI automation is not the software. It is the assumptions built into the system before automation ever begins.

External References & Further Reading

The following resources provide additional context on AI automation, system design, and post-deployment behavior. They are not required to understand this article, but they support many of the structural patterns discussed above.

Platform & Evaluation Guidance

- Google Search Central

Creating helpful, reliable, people-first content

https://developers.google.com/search/docs/fundamentals/creating-helpful-content

System Design & Organizational Reality

- MIT Sloan Management Review

Why AI Projects Fail

https://sloanreview.mit.edu/article/why-ai-projects-fail/

- Harvard Business Review

Building the AI-Powered Organization

https://hbr.org/2019/07/building-the-ai-powered-organization

Technology & Post-Launch Behavior

- Thoughtworks

Technology Radar

https://www.thoughtworks.com/radar

- IBM

AI Lifecycle and Governance

https://www.ibm.com/topics/ai-governance

Cost, Operations, and Implementation Friction

- McKinsey

Why Do Most Analytics Projects Fail?

https://www.mckinsey.com/capabilities/quantumblack/our-insights/why-do-most-analytics-projects-fail

- Deloitte

From AI Experimentation to Value Creation

https://www.deloitte.com/global/en/insights/focus/cognitive-technologies/from-artificial-intelligence-to-real-value.html

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
