Why AI Websites Stop Ranking

Quick Answer

AI websites often stop ranking when Google reduces testing and reinforcement for their pages. This usually happens after the site shows weak topical structure, repetitive intent, or low confidence signals. It is less like a sudden punishment and more like the system withdrawing visibility once results stop justifying continued exposure.

Introduction

When an AI-built site drops in rankings, most people assume something “broke.”

A penalty. A bad update. A tool problem. A technical mistake.

Sometimes technical issues do contribute. But in many AI-driven sites, the bigger story is simpler. The site never becomes a stable, trusted structure inside the search system. It can get a short trial, then lose it.

Ranking is not a permanent reward. It is a continuing allocation decision.

If you have already seen related failure states like pages not getting indexed, pages stuck at zero impressions, or posts that never gain traffic, those are not separate mysteries. They are often earlier versions of the same system behavior.

This article focuses on one specific state: a site that ranked and then stopped ranking.

What “Stop Ranking” Usually Means

“Stop ranking” rarely means a single thing. People use it to describe at least four different outcomes:

- A page drops from page 1 to page 3

- A page still ranks but gets fewer clicks

- A page still indexes but loses impressions over time

- A page disappears for its main query, then only shows for weak or unrelated queries

The confusing part is that these outcomes can look the same in analytics but come from different system decisions.

A useful mental model is this:

Google does not only rank pages. Google also decides whether a page deserves more testing.

When that testing slows down, rankings often fall with it.

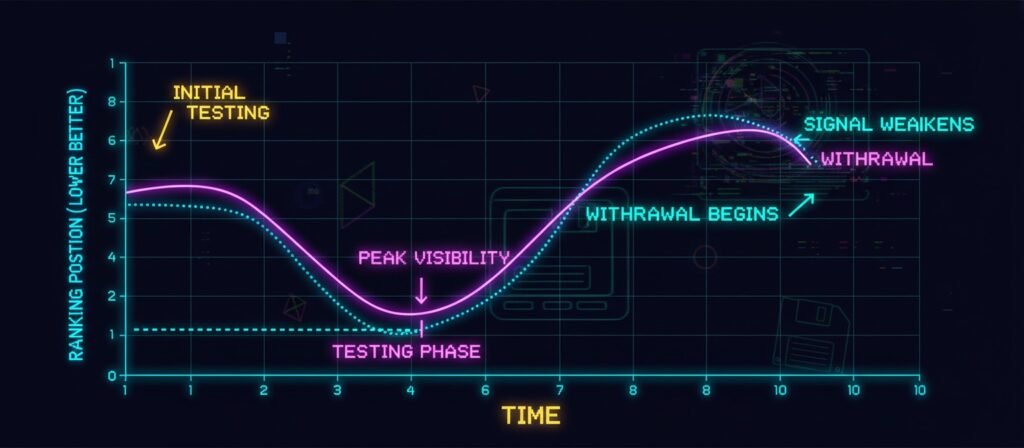

The Ranking Lifecycle in AI Content Sites

Most AI sites succeed briefly by doing two things:

- Publishing content that matches obvious query patterns

- Creating enough surface relevance to trigger a short trial

Then the system asks a second question:

Does this site produce a stable, distinctive signal that improves over time?

Many AI sites fail that second test.

A simple lifecycle model

- Publish: content appears on the site

- Index: Google stores it

- Test: the page receives impressions

- Reinforce: the page gains more visibility when signals look strong

- Withdraw: the system reduces exposure when signals look weak or noisy

Your “ranking drop” is often the withdrawal stage of this lifecycle, not proof that the content itself is bad.

Why AI Websites Stop Ranking (System Causes)

Below are the most common system causes. These are not moral judgments about AI content. They are structural patterns that search systems tend to respond to.

1) The site becomes “too similar” to itself

AI content pipelines often produce pages that look different to humans but similar to the search system. This happens when:

- multiple pages target the same intent

- internal links do not clarify which page is primary

- headings follow the same template across many posts

- introductions repeat the same framing

- summaries collapse into generic language
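The overlap described in this list can be roughly approximated by comparing pages as sets of word sequences. The following is a minimal sketch, not a model of how any search engine measures similarity: the function names, the three-word shingle size, and the 0.4 threshold are all illustrative assumptions, and the sample pages are invented.

```python
import re
from itertools import combinations


def shingles(text: str, size: int = 3) -> set[tuple[str, ...]]:
    """Return the set of overlapping word n-grams ("shingles") in the text."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}


def jaccard(a: set, b: set) -> float:
    """Jaccard similarity: shared items divided by all distinct items."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def similar_pairs(pages: dict[str, str], threshold: float = 0.4):
    """List page pairs whose shingle overlap meets the (assumed) threshold."""
    sets = {url: shingles(text) for url, text in pages.items()}
    pairs = []
    for (url_a, set_a), (url_b, set_b) in combinations(sets.items(), 2):
        score = jaccard(set_a, set_b)
        if score >= threshold:
            pairs.append((url_a, url_b, round(score, 2)))
    # Most similar pairs first.
    return sorted(pairs, key=lambda pair: pair[2], reverse=True)


# Hypothetical sample pages, invented for illustration.
pages = {
    "/a": "why ai websites stop ranking after launch and how to fix it",
    "/b": "why ai websites stop ranking after launch and what it means",
    "/c": "internal linking basics for small editorial teams",
}
print(similar_pairs(pages))
```

On real pages you would feed in the full body text, and the threshold would need tuning by hand. The point is only that two pages can read differently to a person while sharing most of their phrasing.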

When internal similarity rises, the site starts competing with itself. You will often see:

- unstable rankings

- brief spikes then drops

- pages rotating for the same query

- traffic spreading thin instead of compounding

This overlaps with the cannibalization mechanism, but ranking drops can appear even before you diagnose cannibalization directly.
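One way to check for pages rotating on the same query is to group a search performance export by query and count how many distinct URLs appeared for each. The sketch below assumes rows shaped as `(date, query, page)` tuples; that shape, the function name, and the sample rows are hypothetical and do not correspond to any specific tool's export format.

```python
from collections import defaultdict


def queries_with_rotating_pages(rows, min_pages: int = 2) -> dict[str, list[str]]:
    """Map each query to its URLs when at least `min_pages` distinct URLs appeared.

    `rows` is an iterable of (date, query, page) tuples (assumed format).
    """
    pages_by_query = defaultdict(set)
    for _date, query, page in rows:
        pages_by_query[query].add(page)
    return {
        query: sorted(pages)
        for query, pages in pages_by_query.items()
        if len(pages) >= min_pages
    }


# Hypothetical rows, invented for illustration.
rows = [
    ("2024-01-01", "ai site ranking drop", "/why-ai-sites-drop"),
    ("2024-01-08", "ai site ranking drop", "/ai-ranking-loss"),
    ("2024-01-15", "ai site ranking drop", "/why-ai-sites-drop"),
    ("2024-01-01", "internal linking", "/internal-linking"),
]
print(queries_with_rotating_pages(rows))
```

A query that lists several URLs is a candidate for internal competition, not a confirmed case. Some queries legitimately surface more than one page.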

2) The site loses topical shape

A site can rank with a few pages even if it has weak structure. But once you publish more, structure matters more. Search systems look for signs that your site has a topic identity, not just pages.

Signals that shape topical identity include:

- a small number of clear “authority pages”

- internal links that reinforce a hierarchy

- consistent scope boundaries

- supporting pages that do not compete with the entry page

If your site publishes broadly or publishes too many “entry pages,” the topical role becomes unclear. Rankings often fade because the system stops seeing a stable topic hub.
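A simple way to see whether internal links reinforce a hierarchy is to count inbound internal links per page. The sketch below assumes you already have a link map from a crawl of your own site; the `links` structure, the function names, and the sample URLs are hypothetical.

```python
from collections import Counter


def inbound_link_counts(links: dict[str, list[str]]) -> Counter:
    """Count distinct internal pages linking to each page.

    `links` maps a source URL to the internal URLs it links to (assumed format).
    """
    counts = Counter({page: 0 for page in links})
    for source, targets in links.items():
        for target in set(targets):  # ignore repeated links from one page
            if target != source:     # ignore self-links
                counts[target] += 1
    return counts


def orphan_pages(links: dict[str, list[str]]) -> list[str]:
    """Pages that no other page links to."""
    counts = inbound_link_counts(links)
    return sorted(page for page, n in counts.items() if n == 0)


# Hypothetical link map, invented for illustration.
links = {
    "/hub": ["/a", "/b"],
    "/a": ["/hub"],
    "/b": ["/hub"],
    "/c": ["/hub"],
    "/d": [],
}
print(inbound_link_counts(links).most_common(1))
print(orphan_pages(links))
```

If no page stands out in the counts, or several near-identical "entry pages" tie at the top, that suggests the internal links are not signalling which page is the hub.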

3) Reinforcement signals do not arrive, or they arrive noisy

Some ranking loss is connected to behavior signals. But treat this carefully. Low engagement can be a cause in some cases, but it can also be an effect of poor testing quality, poor query match, or unstable impressions. Still, reinforcement signals matter because they help the system justify exposure.

Examples of reinforcement signals, described at a high level:

- users click and stay

- users do not bounce instantly for that intent

- users do not quickly return and choose a different result

- the page earns references and links over time

- the site shows consistent usefulness across multiple pages

AI sites often miss reinforcement for a simple reason: they publish pages without a strong role. The system tests them, gets weak or mixed signals, and then reduces future testing.

4) The site looks like output, not a system

A ranking drop often reflects a system-level judgment:

This site produces pages but does not produce a consistent improvement loop.

If the site never updates, never refines its internal structure, and keeps publishing similar pages, the system has little reason to keep investing impressions.

This connects to post-launch failure patterns in automation systems where the deployment continues but the correction loop never happens.

5) The site triggers low-confidence signals

This does not require a manual penalty. A site can be downgraded simply because the system cannot trust it enough to allocate exposure.

Low-confidence patterns can include:

- large volume jumps in a short time

- thin pages that repeat common web answers

- unclear authorship and unclear editorial context

- content that reads like it was written to fill a template

- inconsistent scope from one page to the next

Google’s guidance on machine-generated content does not say, “AI is bad.” It emphasizes quality, purpose, and usefulness regardless of how content is produced:

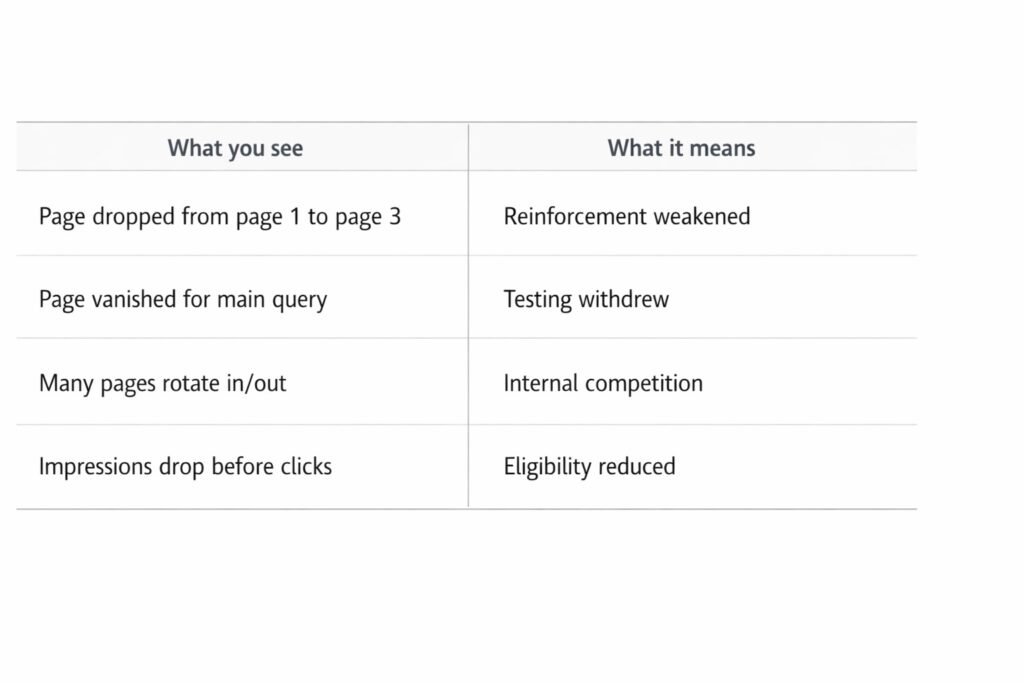

Table: Symptoms vs Likely System Interpretation

| What you see | What it can indicate in the system | Why rankings drop |
| --- | --- | --- |
| Page ranked, then slowly fell | Reinforcement weakened | The system reduces exposure over time |
| Page vanished for main query, still indexed | Testing withdrew | The page stays stored but stops getting trials |
| Many pages rotate in and out | Internal competition or unclear hierarchy | The system cannot decide which page is primary |
| Impressions drop before clicks drop | Eligibility or testing reduction | Less exposure means fewer clicks later |
| Traffic never stabilizes across the site | Topic identity not forming | The site does not become a reliable hub |

This table does not guarantee diagnosis. It helps you avoid one mistake: assuming every drop is a penalty.
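The "impressions drop before clicks drop" row can be checked against weekly numbers. The sketch below is one rough heuristic: it treats the first few weeks as a baseline and finds the first later week that falls well below it. The four-week baseline, the 30% drop, the function names, and the sample figures are all assumptions chosen for illustration.

```python
from statistics import mean


def first_drop_week(series, baseline_weeks: int = 4, drop: float = 0.3):
    """Index of the first week that falls more than `drop` below the baseline.

    The baseline is the mean of the first `baseline_weeks` values.
    Returns None if the series is too short or never drops that far.
    """
    if len(series) <= baseline_weeks:
        return None
    floor = mean(series[:baseline_weeks]) * (1 - drop)
    for week in range(baseline_weeks, len(series)):
        if series[week] < floor:
            return week
    return None


def impressions_dropped_first(impressions, clicks, **kwargs) -> bool:
    """True when impressions fell below their baseline before clicks did."""
    imp_week = first_drop_week(impressions, **kwargs)
    click_week = first_drop_week(clicks, **kwargs)
    if imp_week is None:
        return False
    return click_week is None or imp_week < click_week


# Hypothetical weekly figures, invented for illustration.
impressions = [1000, 1040, 980, 1010, 900, 650, 500, 420]
clicks = [50, 52, 49, 51, 50, 47, 33, 25]
print(first_drop_week(impressions), first_drop_week(clicks))
print(impressions_dropped_first(impressions, clicks))
```

Like the table, this does not diagnose anything on its own. It only tells you which metric moved first, which helps you pick a hypothesis to investigate.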

Workflow: How Ranking Loss Often Happens in AI Sites

This is a common sequence. It is not universal, but it shows up often enough that it is worth treating as a default hypothesis.

- The site publishes a few pages that match obvious search patterns

- Google indexes them

- Some pages get initial impressions and a small ranking lift

- The site publishes more pages quickly

- Internal similarity increases

- The site loses clear authority-page roles

- Testing signals get weaker or noisier

- Exposure shrinks

- Rankings fade, then stabilize lower, or disappear for core queries

The early-stage versions of this problem are the failure states noted in the introduction, such as pages that never get indexed or that stay stuck at zero impressions.

This sequence connects directly to our analysis of why automated content doesn’t compound. Once testing withdraws and rankings fade, accumulation never begins. The system stops building before it ever really starts.

Common Misinterpretations

“Google penalized my AI site.”

Sometimes penalties happen, but most ranking drops do not look like a manual action. Many look like normal exposure allocation changing over time.

“My content quality suddenly became bad.”

Quality does not usually collapse overnight. More often, the site crosses a threshold where similarity, structure, and intent confusion become visible to the system.

“I need more content.”

More content can help only when it increases clarity. If it increases similarity, it often accelerates decline.

“I need a new tool.”

Tools change production speed. They do not automatically change structure, eligibility, or reinforcement. Tool switching often keeps the same failure shape.

Why This Happens More in AI-Driven Publishing

AI content pipelines tend to produce three properties that search systems struggle to reward long-term:

- Template stability: AI repeats patterns because that is how it generates fluent output.

- Intent drift: If prompts change slightly, pages drift across intent categories without anyone noticing.

- Weak correction loops: Many pipelines publish and move on. They do not revisit internal structure, consolidate overlaps, or sharpen page roles.

For the broader post-launch view of this, see: Why AI Website Become Failure

Frequently Asked Questions

Does AI affect SEO ranking?

AI itself does not directly determine ranking outcomes. Search systems evaluate usefulness, clarity, and structural relevance rather than the tool used to produce content. AI can influence ranking indirectly when it changes how content is created or structured.

For example, automation sometimes produces large volumes of similar pages or weak topical organization, which can reduce visibility testing or reinforcement over time. The effect comes from system behavior, not from AI usage alone.

Why did the website ranking drop?

Ranking drops are often associated with changes in signal interpretation rather than a single failure event. Common contributing conditions include shifts in topical clarity, internal competition between pages, reduced user interaction signals, or broader system reallocation of testing exposure.

In automated environments, ranking decline tends to occur when reinforcement loops weaken or structural relationships between pages blur, making relevance harder to evaluate consistently.

Why are AI websites not working?

Many AI-built sites function technically but struggle behaviorally inside search ecosystems. Automation frequently prioritizes production speed while underdeveloping structural roles, internal linking logic, or feedback adaptation.

This imbalance can lead to indexing without visibility, impressions without engagement, or traffic that fails to compound. The issue is usually systemic alignment rather than technological malfunction.

How do I get my website to rank with AI?

Ranking outcomes are commonly associated with how well automated content integrates into a coherent system rather than how efficiently it is generated. Visibility tends to emerge when pages have defined topical roles, strong internal relationships, and signals that allow search systems to interpret relevance over time.

AI can assist production and organization, but ranking behavior depends on the broader structural environment in which that content operates.

External References (for deeper reading)

- Google Search Central: Creating helpful content

https://developers.google.com/search/docs/fundamentals/creating-helpful-content

- Google Search Central: Crawling and indexing overview

https://developers.google.com/search/docs/crawling-indexing/overview

- Google Search: How Search Works

https://www.google.com/search/howsearchworks/

- Stanford IR Book (information retrieval concepts)

https://nlp.stanford.edu/IR-book/

- OpenAI GPT-3 paper (language model background)

https://arxiv.org/abs/2005.14165

- U.S. Copyright Office: Copyright and Artificial Intelligence

https://www.copyright.gov/ai/

Alex Crew, Founder & Lead Analyst

System Analyst at AutomationSystemsLab

Alex founded AutomationSystemsLab after watching too many AI-built websites fail quietly months after launch. He systematically analyzes why AI-driven websites and content automation systems fail — and maps what actually scales for long-term SEO performance. His research focuses on system-level failures, not tool-specific issues.

Diagnostic Mission: To identify automation failure patterns before they become permanent, and provide system-first frameworks that survive algorithm shifts, vendor churn, and market noise. Alex documents observable system behavior, not hype cycles.

EEAT Commitment

- Experience: 3+ years documenting AI automation failure patterns across 500+ sites

- Expertise: System-level analysis of content automation workflows and SEO decay

- Authoritativeness: Referenced by SEO platforms and cited in automation discussions

- Trustworthiness: Full transparency on methodology, funding, and editorial independence

Every analysis published on AutomationSystemsLab follows the Editorial Governor: no affiliate pressure, no vendor influence, just documented system behavior. Alex tracks what breaks, why it breaks at the structural level, and how to build automation that compounds rather than decays.
